Israeli SQL query acceleration startup NeuroBlade has set up a US HQ in Silicon Valley from where its co-founder and CTO will evangelise hyper compute for analytics to the masses.

The business came out of stealth in October, having pulled in $83 million of B-round funding. NeuroBlade says the datasets used in analytics have been growing so fast, into the hundreds of terabytes, that IT infrastructure hasn’t been able to keep up. Analytical possibilities are being strangled because it takes too long to extract data from data lakes and warehouses and move it to existing servers. New hardware and software are needed, it argues.

CTO and co-founder, Eliad Hillel, says in a statement: “The reality is that the infrastructure for analytics has not kept up with the pace of data creation and the need to analyze large data sets that go into the hundreds of terabytes. The software layer has enjoyed immense innovation over the years. Yet, little has been done to recreate such creativity within the hardware layer.

“If you look across the industry today, you’ll see emerging solutions that aim to bring compute closer to processing, but no one is addressing it systems-wide, end-to-end, across compute, memory, storage and network.”

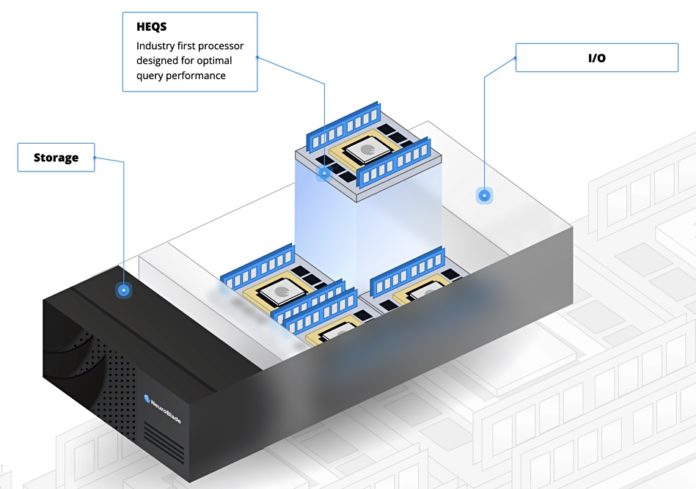

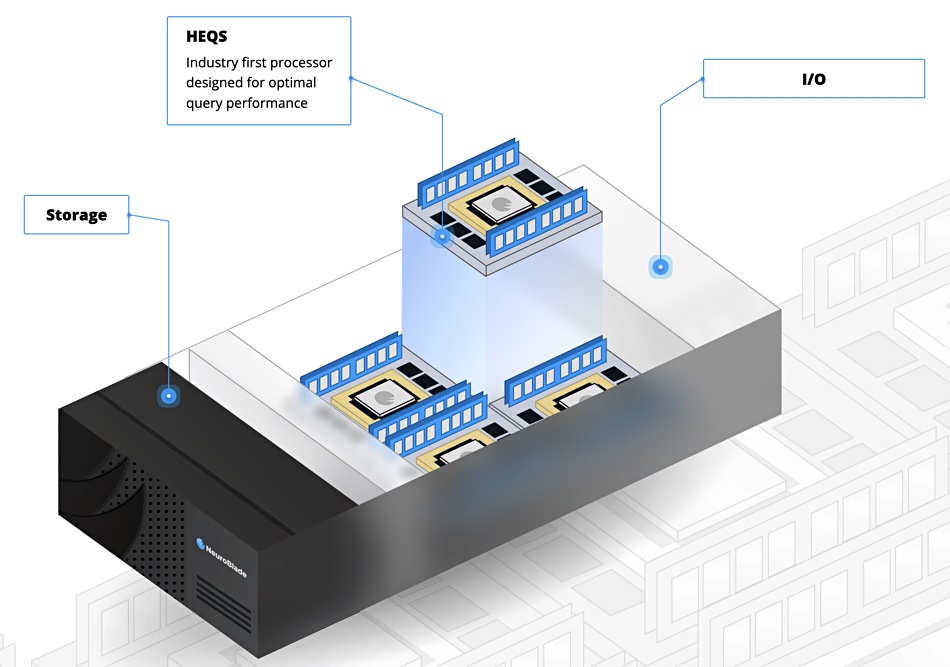

He says NeuroBlade is a combined software and hardware systems company built around its open Hardware Enhanced Query System (HEQS) technology. The company claims this can accelerate analytics by a factor of 10 or more beyond what software alone can do. That speed-up, he adds, is why NeuroBlade describes its approach as hyper compute for analytics.

The company is shipping its Xiphos compute-in-storage appliance, a box containing an x86 controller running Linux and up to 32 NVMe SSDs, which are connected by multiple 26-lane PCIe bus links to four Intense Memory Processing Units (IMPUs). Each IMPU contains DRAM memory banks connected by wide IO buses to an XRAM Processor: a chip with thousands of cores that acts as an inference engine and runs neural networks. System throughput is in the TB/sec range.

The concept is to avoid shipping hundreds of terabytes of data across network or fabric links to server CPUs or GPUs for analysis. Instead, the analytics work is done inside the storage array by a closely linked XRAM processing unit and its DRAM, a processing-in-memory (PIM) or processing-near-memory approach.
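To make the data-movement argument concrete, here is a rough back-of-envelope model in Python. Every figure in it is an illustrative assumption, not a NeuroBlade specification: a 100TB table, a query filter matching 1 per cent of rows, a 100Gbit/sec network link (12.5GB/sec) and an in-storage scan rate of 1TB/sec, loosely based on the TB/sec-range throughput quoted for Xiphos. The `compare` function is hypothetical and ignores compute time, compression and parallel links; it only contrasts moving every byte with filtering near the data and moving just the results.

```python
# Hypothetical back-of-envelope model: ship a whole dataset to a server for
# filtering, versus filter it inside storage and ship only matching rows.
# All figures are illustrative assumptions, not vendor specifications.

TB = 10**12  # decimal terabyte, in bytes


def transfer_seconds(num_bytes: float, bytes_per_second: float) -> float:
    """Time to move num_bytes at a given sustained bandwidth."""
    return num_bytes / bytes_per_second


def compare(dataset_bytes: float, selectivity: float,
            network_bps: float, in_storage_bps: float) -> dict:
    """Return the data-movement time, in seconds, for each strategy.

    ship_all:  every byte crosses the network to the host, which filters it.
    push_down: storage scans every byte at its internal rate, then only the
               matching fraction (selectivity) crosses the network.
    """
    ship_all = transfer_seconds(dataset_bytes, network_bps)
    scan = transfer_seconds(dataset_bytes, in_storage_bps)
    results = transfer_seconds(dataset_bytes * selectivity, network_bps)
    return {"ship_all": ship_all, "push_down": scan + results}


if __name__ == "__main__":
    # Assumed: 100 TB table, 1% of rows match, 100 Gbit/s link, ~1 TB/s scan.
    r = compare(100 * TB, 0.01, 12.5e9, 1 * TB)
    print(f"ship all:  {r['ship_all']:.0f} s")   # 8000 s, a bit over 2 hours
    print(f"push down: {r['push_down']:.0f} s")  # 180 s, about 3 minutes
```

Under these assumptions the win comes almost entirely from the selectivity term: the less of the table a query actually needs, the less there is to gain from moving the raw data at all.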

Building processing elements directly into a DRAM die limits the size, scope, instruction set and performance of those processors. Building a specialised analytical processing unit, like NeuroBlade’s XRAM unit, is meant to provide a much more powerful processor with a broader instruction set.

SAP is one prospective customer evaluating the Xiphos system. NeuroBlade will be at the Flash Memory Summit next week and attendees should hear more from the company then.