Amazon Web Services (AWS) has integrated Hammerspace data orchestration to improve its globally distributed render farm capabilities through AWS Thinkbox Deadline.

According to an AWS blog, AWS Thinkbox Deadline is a render manager that lets users run rendering tasks on a mix of on-premises, hybrid, or cloud-based resources. The platform offers plugins tailored for specific workflows. The Spot Event Plugin (SEP) manages a cloud-based render farm and scales it dynamically according to the number of tasks in the render queue. The plugin launches EC2 Spot Instances running the render application when required and terminates them after a specified idle duration.
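The scaling behavior described above can be illustrated with a minimal Python sketch. This is not the SEP implementation or its API. The `Worker` class, the `plan_scaling` function, and all parameters are hypothetical names chosen for illustration, and the one-worker-per-task rule is an assumed simplification.

```python
from dataclasses import dataclass


@dataclass
class Worker:
    """Hypothetical stand-in for a running render instance."""
    name: str
    idle_seconds: int  # time since this worker last had a task


def plan_scaling(queued_tasks, workers, max_workers, idle_limit_seconds):
    """Return (instances_to_launch, names_of_workers_to_terminate).

    Assumption: queued_tasks counts every task that still needs a worker,
    and one worker per task is wanted, capped at max_workers.
    """
    if queued_tasks > 0:
        # Work is waiting: grow the fleet toward the desired size.
        desired = min(queued_tasks, max_workers)
        return max(0, desired - len(workers)), []
    # Queue is empty: retire workers that have idled past the limit.
    expired = [w.name for w in workers if w.idle_seconds >= idle_limit_seconds]
    return 0, expired


if __name__ == "__main__":
    fleet = [Worker("node-a", idle_seconds=0)]
    print(plan_scaling(5, fleet, max_workers=3, idle_limit_seconds=600))
```

A real plugin would also have to handle Spot interruptions and per-region limits, which this sketch leaves out.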

SEP supports multi-region rendering across any of the 32 AWS regions. With a single Deadline repository, users can launch render nodes in a preferred region, choosing regions to optimize costs or prioritize energy sustainability. For distributed rendering to work effectively, the data that a region’s render farm needs must be available in that region.

Along with Hammerspace, AWS has introduced an open source Hammerspace event plugin for Deadline. This plugin utilizes Hammerspace’s Global File System (GFS) to ensure data is transferred to its required location.

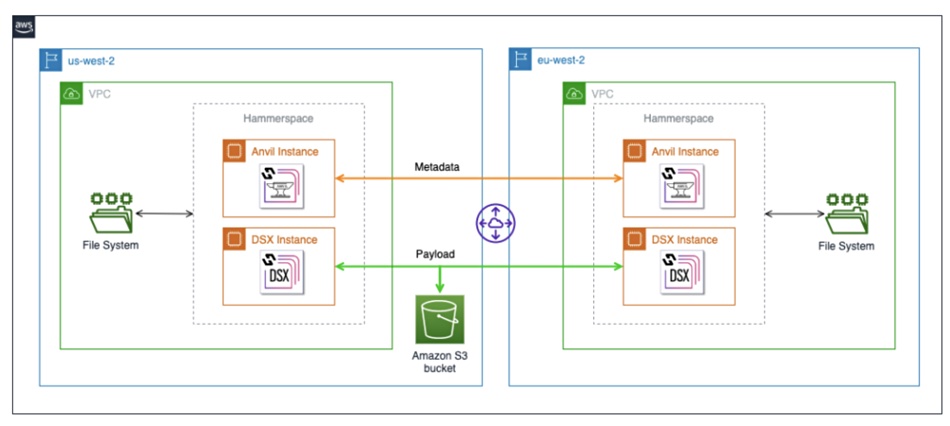

The data is divided into render farm content data and its associated metadata. Hammerspace’s GFS Anvil Nodes consistently synchronize the metadata, which is typically a few kilobytes for larger files, across the involved regions. The content, or payload, data is synchronized by Hammerspace Data Service Nodes (DSX) only when needed.

The nodes operate as EC2 instances, with DSX nodes using AWS S3 for staging. By default, payload data remains in its originating region. If required in another region, the primary DSX node transfers it to the destination node. Hammerspace’s directives and rules can be configured to establish policies for this process, ensuring files are pre-positioned as required.
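As a rough illustration of this split, the toy Python model below replicates metadata to every region on write but copies payload only when another region reads or pre-positions a file. It does not use any Hammerspace API. `GlobalNamespace`, its methods, and the example path are hypothetical, and the in-memory dictionaries stand in for the Anvil and DSX nodes.

```python
class GlobalNamespace:
    """Toy model: metadata everywhere, payload moved on demand."""

    def __init__(self, regions):
        self.regions = list(regions)
        # Per-region view of metadata: path -> {"size": ..., "origin": ...}
        self.metadata = {r: {} for r in self.regions}
        # Per-region payload store: path -> bytes
        self.payload = {r: {} for r in self.regions}
        # Log of payload transfers as (path, source_region, target_region)
        self.transfers = []

    def write(self, region, path, data):
        # Payload stays in the region where it was written.
        self.payload[region][path] = data
        # Metadata is small, so it is replicated to every region.
        for r in self.regions:
            self.metadata[r][path] = {"size": len(data), "origin": region}

    def read(self, region, path):
        meta = self.metadata[region][path]
        if path not in self.payload[region]:
            # Payload is missing locally: copy it from the origin region.
            origin = meta["origin"]
            self.payload[region][path] = self.payload[origin][path]
            self.transfers.append((path, origin, region))
        return self.payload[region][path]

    def pre_position(self, region, path):
        # Policy-style placement: fetch ahead of time, discard the result.
        self.read(region, path)


if __name__ == "__main__":
    ns = GlobalNamespace(["us-west-2", "eu-west-1"])
    ns.write("us-west-2", "/shots/a.exr", b"pixels")
    ns.pre_position("eu-west-1", "/shots/a.exr")
    print(ns.transfers)
```

In this model a file that is never requested outside its origin region generates no payload traffic, while every region can still list it.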

The AWS blog explains how Hammerspace and Deadline handle file and folder collisions to ensure a single source of truth for each file.

This is an example of how Hammerspace can avoid the network traffic load of continuous file syncing, and it gives Hammerspace something of an AWS blessing.