Optimizing the digital data supply chain for SQL query processing is what drives the NeuroBlade founders and their hyper compute for analytics technology. Co-founders CEO Elad Sity and CTO Eliad Hillel, together with CMO Priya Doty, told us at FMS 2022 that they want to optimize every stage of the data supply chain, from storage drive to processor, when executing a SQL query.

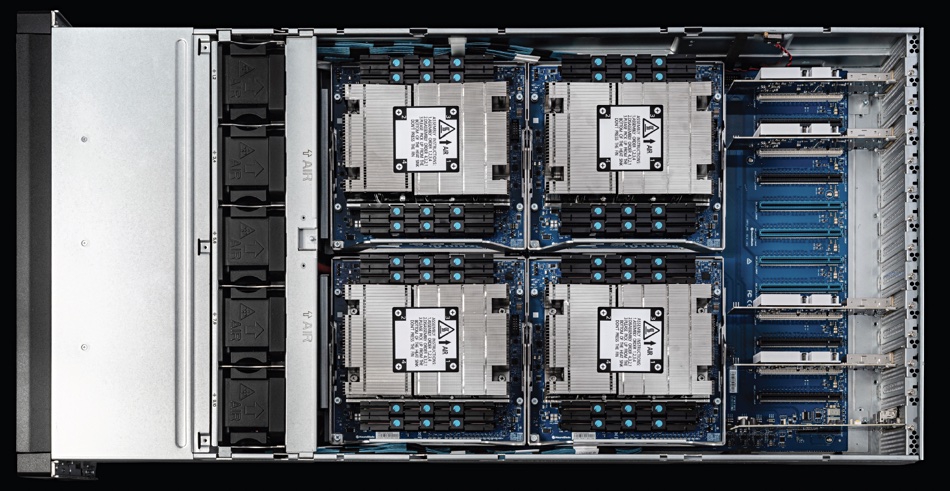

We have previously discussed NeuroBlade’s Xiphos hardware, which uses XRAM processing-in-memory technology. This interview allowed us to explore why NeuroBlade was founded and how it is coming to market.

Sity and Hillel are two ex-SolarEdge execs. Doty is a former VP of product marketing for IBM. SolarEdge is an Israeli company building photovoltaic inverters and battery monitoring equipment. It IPO’d on NASDAQ in 2015, raising $126 million.

We asked Sity what the trigger was for starting NeuroBlade.

He said: “SolarEdge is taking off. Good company, great company. All of us are friends for many years. All of us knew each other from the army. I can tell you from my perspective, I was in New York [for] the IPO for SolarEdge. Five minutes after that we [went] from the NASDAQ building to the bar across the street. I asked myself, ‘How do I get here again? How do we do this again?’

“And we came back from New York. I [went] to the SolarEdge CEO and told him this is it. Like, it’s going to take six months, a year, but I’m starting my own company.

“I was playing around with stock trading. Like every good real-time engineer, I thought if it doesn’t run fast, you try to realize why. And what we figured out is CPUs for general purpose, GPU for compute-intensive [graphic] workloads. We said we’re just going to build a video [graphics] processor for data.

“When we said we are going to build the next video for data we were kind of crazy because Nvidia didn’t build in five years. They built it in 30. And they started from gaming. It took us a little bit of time to find what is going to be our ‘gaming,’ the use case that we are going to crush.”

And that core application was SQL-accessed analytics.

The memory key

Sity said the core issue was memory. “When you try to run data analysis, join tables together, and do data crunching, we saw that the memory is far from being optimized enough to support the IOs that come from drives and you don’t get to the full potential of your IOs, streaming from drives, terabytes and petabytes of data.

“You don’t get to the full IOPS of the system, getting bogged down either by memory or network, sometimes compute. And this is why we started on technology… the problem with a gap between NAND and the actual processor. You couldn’t get data from the NAND into the processor fast enough to keep all the cores busy. So there had to be a way to build new processors.”

We suggested it had to be a parallel design.

Doty said: “To be parallel, it had to be very memory-efficient. For us, it started from the memory. So how do we untie the memory bottlenecks in the system?”

Sity said his previous experience “was always about bringing a full solution that is mixing up different technologies and solving for the entire system.”

“I can say that, originally, we are not the best silicon people, not the best hardware people, not the best algorithms people. We learn how to bring all the best people to work together. Our expertise is how you look at it all from a system perspective and bring it all together. So in the case of problems like data analytics, or actually every problem that has a lot of data, you need to look at the storage, the memory, the networking, the computer itself.”

Supply chain optimization

We noted that there is a digital supply chain between the NAND drive and the processor, and asked whether NeuroBlade wants to optimize that whole chain, not just one component in it.

Sity said: “Entirely, we never intended to be a component company. We figured out very, very early on this is a game of compatibility and ease of use, which means it’s a lot of software. So we never wanted to be a component company.”

“This is also one other thing that we realized; if you optimize just in networking, if you optimize just the storage 10 percent,” you are just pushing the bottleneck somewhere else. “But if you’re looking at the entire system, as a whole, trying to optimize all the path from the storage all the way back to give the user an answer. Yes. If you also go through the entire software stack, then you can get to the 10x, 100x improvement.”

Doty said: “If you don’t handle the physical aspects of the actual infrastructure, then you can’t actually accelerate everything. You’re still leaving a lot on the table. And that’s our premise.”
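Sity’s point about pushing the bottleneck somewhere else can be illustrated with a toy model. The sketch below is ours, not NeuroBlade’s: it assumes a query streams data through four stages and that end-to-end throughput is set by the slowest one. The stage names and GB/s figures are made-up assumptions chosen only to show the effect.

```python
# Toy model of a query's data path. Stage names and GB/s figures are
# illustrative assumptions, not NeuroBlade measurements.

def pipeline_throughput(stages):
    """A streaming pipeline runs no faster than its slowest stage."""
    return min(stages.values())

def bottleneck(stages):
    """Name of the stage that currently limits end-to-end throughput."""
    return min(stages, key=stages.get)

baseline = {"storage": 10.0, "network": 12.0, "memory": 4.0, "compute": 5.0}

# Improve storage alone by 10 percent: memory still limits the pipeline.
storage_only = dict(baseline, storage=baseline["storage"] * 1.1)

# Improve memory alone by 10x: the bottleneck simply moves to compute.
memory_only = dict(baseline, memory=baseline["memory"] * 10)

# Improve every stage by 10x: only now does the whole pipeline gain 10x.
whole_system = {name: rate * 10 for name, rate in baseline.items()}

for label, stages in [
    ("baseline", baseline),
    ("storage +10%", storage_only),
    ("memory x10", memory_only),
    ("all stages x10", whole_system),
]:
    speedup = pipeline_throughput(stages) / pipeline_throughput(baseline)
    print(f"{label}: limited by {bottleneck(stages)}, speedup {speedup:.2f}x")
```

In this model a tenfold memory improvement on its own yields only a 1.25x gain, because compute becomes the new limit. That is the shape of the argument for optimizing the whole path rather than a single component.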

Talking about their processor, Sity said: “Right now it’s based on FPGAs,” with the intent of moving to ASICs. The whole system is modular, so components can be swapped at each layer to improve performance further; replacing PCIe 4 with PCIe 5, for example.

Although the hardware and its firmware are proprietary, there is a software stack to interface it to standard analytics applications. Doty said: “The idea being that you can go from analytics workloads down to our analytics engines [and] people don’t have to make any changes to what they’re doing.

“If you’re in your own datacenter, put it in your system, basically connect our devices to the network and install the plugin. And for end users, nothing changes. It’s on the infrastructure level… as long as you have a disaggregated storage and compute cluster, we plug in.”

The software stack can be extended, Doty said. “We’ll have a connector based on Spark in the download center.”

Customers

NeuroBlade’s early customers are akin to design partners, helping to improve the product’s design and usability, and they pay for the privilege – because the technology is promising.

We asked what kind of customers NeuroBlade is looking at.

Doty said it’s not a mid-market solution: “Primarily, we’re going after two different types of companies. We’re going after cloud providers; tier one and tier two. We have a two pronged approach. One is that a cloud provider puts the NeuroBlade system into their datacenter and resells it as an analytics accelerator on top of their existing solutions. Then the other is direct to customers who do have a datacenter. It’s mostly Fortune 1000 in terms of the end customers. And there doesn’t seem to be any specific industry that doesn’t need it.

“It’s almost like a silent-suffer problem where, if you actually go talk to people who do analytics, they’re like, ‘Oh, yeah, it’s a huge issue. I can’t keep up with my infrastructure to support the needs of my analytics users.’ But they just kind of suffer silently. This is the way it is.

“They’re focused on the data layer, the database, and the data warehouse in the data lake layer, but they’re not focused on the underlying infrastructure,” said Doty.

Which is where NeuroBlade comes in as a data analytics infrastructure technology and product play.

In effect, any enterprise with data- and compute-intensive SQL queries can use NeuroBlade’s system to speed them up by up to 100x. Doty showed a slide with an analytics company quote: “I have 1,000 cores for analytics and another 1,000 for ETL and still can’t meet the needs of 200 users.”

Doty introduced one NeuroBlade customer, CMA, a healthcare Medicaid administrator that handles a billion claims a year. It is building its third-gen analytics service with NeuroBlade, to leapfrog the limitations of RDBMSes by using NVMe and software-defined storage to reduce IO bandwidth bottlenecks.

CMA said: “NeuroBlade is a game-changer: it allows CMA to run thousands of queries scanning hundreds of terabytes daily, delivering queries in less than 1 minute. Also allows CMA to eliminate commercial RDBMSes and 30 years of feature set ‘bloat’ to address the query IO bandwidth bottleneck, and there’s a significant price/performance advantage. NeuroBlade eliminates end-to-end the bottlenecks, accelerates processing, and reduces datacenter footprint.”

Summary

What CMA and other NeuroBlade customers have done is to go against the conventional thinking that software can drive commodity server, storage, and networking components fast enough, and to adopt a GPU-like approach with specific analytical processing hardware, designed with thousands of cores; a domain-specific processing approach. Store your hundreds of terabytes of analytics data in a NeuroBlade Xiphos box, send it SQL query requests, and get more rapid responses back.