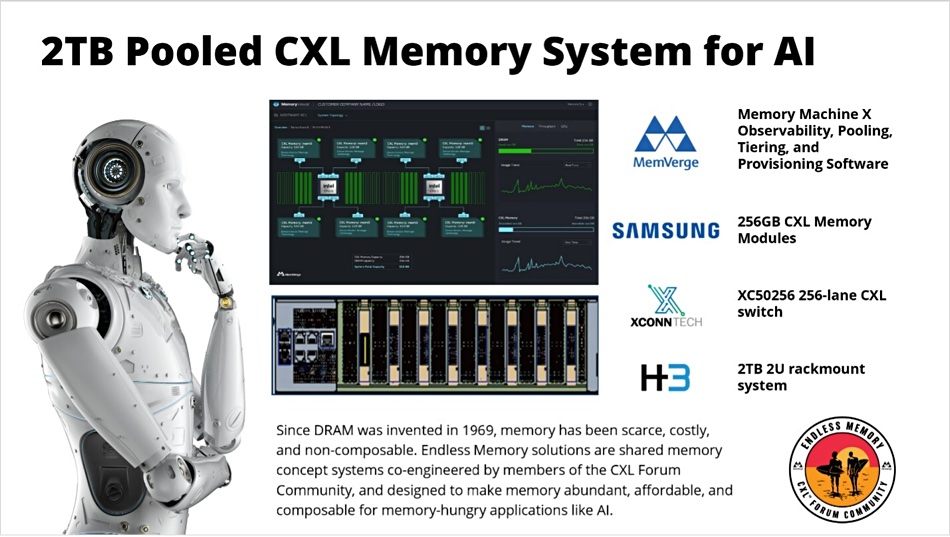

Suppliers are showcasing a 2TB pooled CXL memory concept system at the Flash Memory Summit 2023 in Santa Clara.

H3 Platform has developed a co-engineered 2RU chassis that houses 8 x Samsung CXL 256GB memory modules, 2TB in total. These are connected to an XConn XC50256 CXL switch and managed by MemVerge Memory Machine X software, which pools the CXL memory and provisions it to apps in attached servers. Compute Express Link (CXL) is a connectivity protocol that runs over the PCIe Gen 5 bus. Version 2.0 supports memory pooling and provides cache coherency between a CXL memory device and the systems accessing it, such as servers.

JS Choi, Samsung Electronics’ VP of its New Business Planning Team, said: “The concept system unveiled at Flash Memory Summit is an example of how we are aggressively expanding its usage in next-generation memory architectures.”

Unlike conventional DRAM DIMMs that are sold directly to server OEMs, CXL pooled memory requires CXL switches, enclosures, and management software. Industry leaders like Micron, SK hynix, and Samsung view CXL pooled memory as a method to diversify the datacenter memory market beyond the typical server chassis. Their goal is to foster a comprehensive ecosystem comprising CXL switches, chassis, and software suppliers to propel this emergent market.

XConn’s XC50256 is recognized as the first CXL 2.0 and PCIe Gen 5 switch ASIC. It has 256 lanes, which can be configured as up to 32 ports, a total switching capacity of 2,048GBps, and low port-to-port latency.
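The switch figures can be sanity-checked with some quick arithmetic. The sketch below is a rough estimate rather than a vendor calculation: it assumes the standard PCIe Gen 5 rate of 32 GT/s per lane with 128b/130b encoding, and it assumes the 2,048GBps figure counts traffic in both directions, neither of which is stated in the article.

```python
# Back-of-the-envelope check of the XC50256 figures quoted in the article.
# Assumptions (not from the article): PCIe Gen 5 runs at 32 GT/s per lane with
# 128b/130b encoding, and the 2,048GBps total counts both directions.

GT_PER_SEC_PER_LANE = 32          # PCIe Gen 5 raw transfer rate per lane
ENCODING_EFFICIENCY = 128 / 130   # 128b/130b line encoding overhead
LANES = 256                       # lane count quoted for the switch
MAX_PORTS = 32                    # maximum port count quoted for the switch


def lane_gbytes_per_sec(nominal: bool = False) -> float:
    """Per-lane bandwidth in one direction, in GB/s."""
    raw = GT_PER_SEC_PER_LANE / 8  # one bit per transfer, so divide by 8 for bytes
    return raw if nominal else raw * ENCODING_EFFICIENCY


def switch_gbytes_per_sec(nominal: bool = False) -> float:
    """Aggregate bandwidth across all lanes, counting both directions."""
    return LANES * lane_gbytes_per_sec(nominal) * 2


if __name__ == "__main__":
    print(f"lanes per port at {MAX_PORTS} ports: {LANES // MAX_PORTS}")
    print(f"nominal aggregate: {switch_gbytes_per_sec(nominal=True):.0f} GBps")
    print(f"after encoding overhead: {switch_gbytes_per_sec():.0f} GBps")
```

Under these assumptions the nominal total matches the quoted 2,048GBps, and dividing the lanes evenly across 32 ports gives x8 links.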

According to H3 Platform, its concept chassis provides connectivity to eight hosts, with the MemVerge Memory Machine X software dynamically allocating the pooled memory among them. An H3 Fabric Manager provides the management interface to the chassis.

This is the same MemVerge software used in May in Project Endless Memory, a collaboration with SK hynix built on its Niagara pooled CXL memory hardware. If an application needs more memory than its server host has available, it can obtain an additional allocation from the connected CXL pool through MemVerge’s software. A Memory Viewer service shows the physical topology and displays a heatmap of memory capacity and bandwidth usage by application.
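To make the pooling idea concrete, here is a minimal toy model of a shared pool that hosts draw from on demand. It is only an illustration: the `MemoryPool` class and its methods are hypothetical and are not MemVerge's API, and the sizing simply reuses the eight 256GB modules and eight hosts described for the concept chassis.

```python
from dataclasses import dataclass, field

MODULES = 8        # memory modules in the concept chassis
MODULE_GB = 256    # capacity of each module
MAX_HOSTS = 8      # hosts the chassis can connect to


@dataclass
class MemoryPool:
    """Toy model of a shared CXL memory pool (hypothetical, not MemVerge's API)."""

    capacity_gb: int = MODULES * MODULE_GB
    allocations: dict[str, int] = field(default_factory=dict)  # host -> GB granted

    @property
    def free_gb(self) -> int:
        return self.capacity_gb - sum(self.allocations.values())

    def allocate(self, host: str, size_gb: int) -> bool:
        """Grant size_gb to host if the pool can cover it; return success."""
        if size_gb <= 0:
            raise ValueError("size_gb must be positive")
        if host not in self.allocations and len(self.allocations) >= MAX_HOSTS:
            return False  # no free host slot
        if size_gb > self.free_gb:
            return False  # pool exhausted
        self.allocations[host] = self.allocations.get(host, 0) + size_gb
        return True

    def release(self, host: str, size_gb: int) -> None:
        """Return size_gb from host to the pool."""
        held = self.allocations.get(host, 0)
        if size_gb <= 0 or size_gb > held:
            raise ValueError("cannot release more than the host holds")
        if size_gb == held:
            del self.allocations[host]
        else:
            self.allocations[host] = held - size_gb

    def usage_by_host(self) -> dict[str, float]:
        """Fraction of pool capacity held by each host."""
        return {h: gb / self.capacity_gb for h, gb in self.allocations.items()}


if __name__ == "__main__":
    pool = MemoryPool()
    print(pool.allocate("host-a", 512))   # fits in the pool
    print(pool.allocate("host-b", 2000))  # exceeds what is left
    print(pool.free_gb, pool.usage_by_host())
```

The `usage_by_host` method is a crude stand-in for the kind of per-consumer capacity view a monitoring service might offer; real pooling software also has to deal with bandwidth, latency, and coherency, which this sketch ignores.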

The DRAM producers are all interested in CXL because it lets them sell DRAM products beyond the confines of the x86 CPU DIMM socket. Micron, for example, is sampling its CXL 2.0-based CZ120 memory expansion modules to customers and partners. They come in 128GB and 256GB capacities in the EDSFF E3.S 2T form factor with a PCIe Gen5 x8 interface, competing head-on with Samsung’s 256GB CXL Memory Modules.

Micron’s CZ120 can deliver up to 36GBps memory read/write bandwidth. Meanwhile, Samsung has introduced a 128GB CXL 2.0 DRAM module with a bandwidth of 35GBps. Although the bandwidth of Samsung’s 256GB module remains undisclosed, the company has previously mentioned a 512GB CXL DRAM module, suggesting a range of CXL Memory Module capacities in the pipeline.

B&F anticipates that leading server OEMs such as Dell, Lenovo, HPE, and Supermicro will inevitably roll out CXL pooled memory chassis products. These will likely be complemented by supervisory software that manages dynamic memory composability functions in tandem with their server products.