Quantum has written a unified, scale-out file and object storage software stack called Myriad, designing it for flash drives and using Kubernetes-orchestrated microservices to lower latency and increase parallelism.

The data protector and file and object workflow provider says Myriad is hardware-agnostic and built to handle trillions of files and objects as enterprises massively increase their unstructured data workloads over the coming decades. Quantum joins Pure Storage, StorONE and VAST Data in having developed flash-focused storage software with no legacy HDD-based underpinnings.

Brian “Beepy” Pawlowski, Quantum’s chief development officer, said: “To keep pace with data growth, the industry has ‘thrown hardware’ at the problem… We took a totally different approach with Myriad, and the result is the architecture I’ve wanted to build for 20 years. Myriad is incredibly simple, incredibly adaptable storage software for an unpredictable future.”

Myriad is suited for emerging workloads that require more performance and more scale than before, we’re told. A Quantum technical paper says that “for decades the bottleneck in every system design has been the HDD-based storage. Software didn’t have to be highly optimized, it just had to run faster than the [disk] storage. NVMe and RDMA changed that. To fully take advantage of NVMe flash requires software designed for parallelism and low latency end-to-end. Simply bolting some NVMe flash into an architecture designed for spinning disks is a waste of time.”

Software

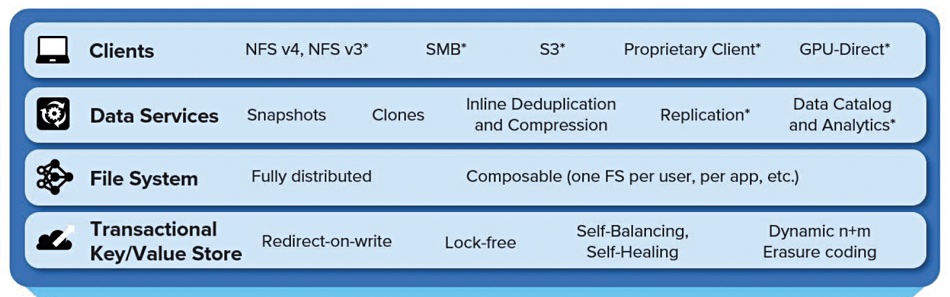

Myriad has four software stack layers:

Using NFS v4, incoming clients access a data services layer providing file and object access, snapshots, clones, always-on deduplication and compression and, in the future, replication, a data catalog and analytics. These services use a POSIX-compliant filesystem layer which is fully distributed and can be composed (instantiated) per user and per application.

Linux’s kernel VFS (Virtual File System) layer enables new filesystems to be “plugged in” to the operating system in a standard way and enables multiple filesystems to co-exist in a unified namespace. Applications talk to VFS, which issues open, read and write commands to the appropriate filesystem.

Underlying this is a key/value (KV) store that uses redirect-on-write technology rather than overwriting data in place, which enables the snapshot and clone features. The KV store is lock-free, which saves computational overhead and processing time for the clients accessing it, and it is also self-balancing and self-healing. Files are stored as whole objects or, if large, split into smaller separate objects. This KV store uses dynamic erasure coding (EC) to protect against drive failures.
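To show why redirect-on-write makes snapshots and clones cheap, here is a minimal Python sketch. It is not Quantum's implementation: the class `RedirectOnWriteStore` and its methods are hypothetical names invented for illustration, and it ignores erasure coding, lock-free concurrency and garbage collection entirely.

```python
class RedirectOnWriteStore:
    """Toy key/value store (hypothetical): new writes never overwrite old data."""

    def __init__(self):
        self._blocks = []      # append-only list of stored values
        self._live = {}        # key -> index into self._blocks
        self._snapshots = {}   # snapshot name -> frozen copy of the key map

    def put(self, key, value):
        # Redirect-on-write: place the value in a fresh slot and repoint the
        # key, leaving the old slot intact for any snapshot that refers to it.
        self._blocks.append(value)
        self._live[key] = len(self._blocks) - 1

    def get(self, key, snapshot=None):
        index_map = self._live if snapshot is None else self._snapshots[snapshot]
        return self._blocks[index_map[key]]

    def snapshot(self, name):
        # A snapshot copies only the pointers, not the data they point to.
        self._snapshots[name] = dict(self._live)

    def clone(self, snapshot):
        # A clone is a writable store that shares the existing blocks.
        other = RedirectOnWriteStore()
        other._blocks = self._blocks          # shared append-only block list
        other._live = dict(self._snapshots[snapshot])
        return other
```

In this sketch, writing a key, taking a snapshot and then writing the key again leaves both versions readable: the live map points at the new slot while the snapshot map still points at the old one. A clone starts from the snapshot's pointers and diverges only as it writes.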

Metadata is stored in the KV store as well. Generally metadata objects will not be deduplicated or compressed but users’ files will be reduced in size through dedupe and compression. The KV store provides transaction support needed for the POSIX-compliant filesystem.
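The general idea of inline dedupe plus compression can be sketched in a few lines of Python. Quantum has not described its chunking, hashing or compression methods here, so everything specific below is an assumption: fixed 4 KiB chunks, SHA-256 fingerprints, zlib compression, and the hypothetical class name `ReducingStore`.

```python
import hashlib
import zlib


class ReducingStore:
    """Toy content-addressed store (hypothetical) with dedupe and compression."""

    def __init__(self):
        self._chunks = {}   # SHA-256 digest -> compressed chunk bytes
        self._files = {}    # file name -> ordered list of chunk digests

    def write(self, name, data, chunk_size=4096):
        digests = []
        for offset in range(0, len(data), chunk_size):
            chunk = data[offset:offset + chunk_size]
            digest = hashlib.sha256(chunk).hexdigest()
            # Dedupe: a chunk already seen is referenced, not stored again.
            if digest not in self._chunks:
                self._chunks[digest] = zlib.compress(chunk)
            digests.append(digest)
        self._files[name] = digests

    def read(self, name):
        return b"".join(
            zlib.decompress(self._chunks[d]) for d in self._files[name]
        )

    def stored_bytes(self):
        # Physical bytes held after dedupe and compression.
        return sum(len(c) for c in self._chunks.values())
```

Writing the same content under two file names stores each unique chunk once, and `stored_bytes()` reports the reduced physical footprint while `read()` still returns the original bytes.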

The access protocols will be expanded to NFS v3, SMB, S3, GPU-Direct and a proprietary Quantum client in the future.

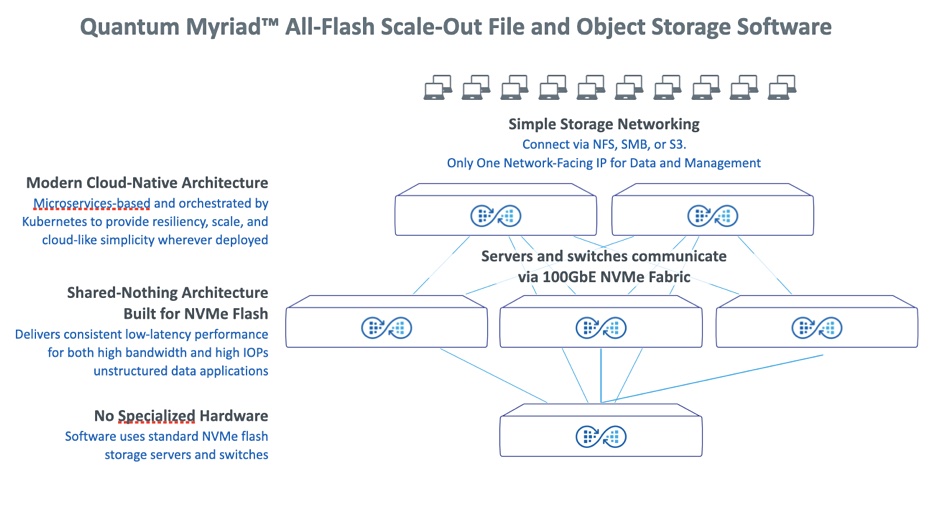

These four software layers run on the Myriad data store, which itself is built from three types of intelligent nodes interconnected by a fabric.

Hardware

The Myriad architecture has four components, as shown in the diagram above and starting from the top:

- Load Balancers to connect the Myriad data store to a customer’s network. These balance traffic inbound to the cluster, as well as traffic within the cluster.

- 100GbitE network fabric to interconnect the Myriad HW/SW component systems to each other and provide client access.

- NVMe storage nodes with a shared-nothing architecture, each containing processors and NVMe drives. These nodes run Myriad software and services. Every storage node has access to all NVMe drives across the system essentially as if they were local, thanks to RDMA. Incoming writes are load balanced across the storage nodes. Every node can write to all the NVMe drives, distributing EC chunks across the cluster for high resiliency.

- Deployment node which looks after cluster deployment and maintenance, including initial installation, capacity expansion, and software upgrades. Think of it as the admin box.

The servers and switches involved use COTS (Commercial Off The Shelf) hardware, x86-based in the case of the servers.

Drive and node failure

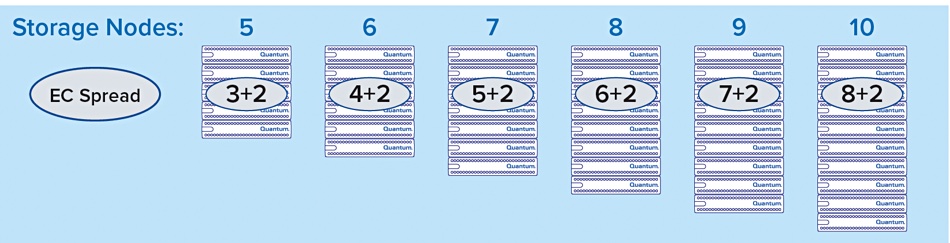

The dynamic erasure coding protects against two drive or node failures, a +2 safety level. The “EC spread” is written as the number of data chunks plus 2. Data is written into zones, which are parts of a flash drive, and zones across multiple drives and nodes are grouped into zone sets. In a five-node system there will be a 3+2 EC spread scheme while in, say, a nine-node system there will be a 7+2 EC spread.
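The two examples suggest the spread spans every node, with two chunks in each stripe reserved for protection. A small Python sketch of that arithmetic follows. The function names are hypothetical, and the rule that the spread always equals the node count is an inference from the five-node and nine-node examples, not something Quantum has stated.

```python
def ec_spread(node_count, parity=2):
    """Return (data_chunks, parity_chunks), assuming one chunk per node."""
    if node_count <= parity:
        raise ValueError("need more nodes than parity chunks")
    return node_count - parity, parity


def usable_fraction(node_count, parity=2):
    """Fraction of raw capacity left for data under the assumed spread."""
    data, parity = ec_spread(node_count, parity)
    return data / (data + parity)
```

Under these assumptions `ec_spread(5)` gives the 3+2 scheme and `ec_spread(9)` gives 7+2. The usable fraction rises from 3/5 of raw capacity at five nodes to 7/9 at nine, which is why a wider spread is attractive as a cluster grows.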

As the number of nodes increases, say, from 9 to 11, new zone sets are written using the new 9+2 scheme. All incoming data is stored in the new 9+2 zone sets. In the background, and as a low-priority task, all the existing 7+2 zone sets are converted to 9+2. The reverse happens if the node count decreases. If a drive or node fails then its data contents are rebuilt using the surviving zone sets.
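The expansion behavior can be modeled as simple bookkeeping: new zone sets take the current scheme immediately, while older ones are converted one at a time. The Python sketch below is only that bookkeeping. `ZoneSet`, `Cluster` and their methods are hypothetical names, the real restriping of data is not modeled, and the node-count-minus-two rule is inferred from the article's examples.

```python
from dataclasses import dataclass

PARITY = 2  # the +2 safety level described in the article


@dataclass
class ZoneSet:
    ident: int
    data: int
    parity: int


class Cluster:
    """Toy model (hypothetical) of zone-set schemes as a cluster is resized."""

    def __init__(self, node_count):
        self.node_count = node_count
        self.zone_sets = []

    def scheme(self):
        # Assumed rule: the spread covers every node, two chunks for parity.
        return self.node_count - PARITY, PARITY

    def write_zone_set(self):
        # Incoming data always lands in a zone set using the current scheme.
        data, parity = self.scheme()
        zone_set = ZoneSet(len(self.zone_sets), data, parity)
        self.zone_sets.append(zone_set)
        return zone_set

    def resize(self, node_count):
        self.node_count = node_count

    def convert_one(self):
        # One low-priority background step: convert a single stale zone set.
        target = self.scheme()
        for zone_set in self.zone_sets:
            if (zone_set.data, zone_set.parity) != target:
                zone_set.data, zone_set.parity = target
                return zone_set
        return None  # nothing left to convert
```

Calling `convert_one()` repeatedly between foreground writes drains the backlog of old-scheme zone sets, and it returns `None` once every zone set matches the current scheme. The same loop handles a shrinking cluster, since it only compares each zone set against whatever the current scheme is.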

In the future the EC safety level will be selectable.

Myriad management

Myriad is managed through a GUI and a cloud-delivered portal featuring AIOps capabilities. A Myriad cluster of any size can be accessed and managed via a single IP address.

The Myriad software has several management capabilities:

- Self-healing, self-balancing software for in-service upgrades that automatically rebuilds and repairs data in the background while rebalancing data as the storage cluster expands, shrinks and changes.

- Automated detection, deployment and configuration of storage nodes within a cluster so it can be scaled, modified or shrunk non-disruptively, without user intervention.

- Automated networking management of the internal RDMA fabric so managing a Myriad cluster requires no networking expertise.

- Inline data deduplication and compression to reduce the cost of flash storage and improve data efficiencies.

- Data security and ransomware recovery with built-in snapshots, clones, snapshot recovery tools and “rollback” capabilities.

- Inline metadata tagging to accelerate AI/ML data processing, provide real-time data analytics, enable creation of data lakes based on tags, and automate data pipelines and workflows.

- Real-time monitoring of system health, performance, capacity trending, and more from a secure online portal by connecting to Quantum cloud-based AI Operations software.

Traditional monitoring via SNMP is available, as well as sFlow. API support is coming and this will enable custom automation.

Myriad workload positioning

Quantum positions Myriad for use with workloads such as AI and machine learning, data lakes, VFX and animation, and other high-bandwidth and high-IOPS applications. These applications are driving growth in the market for scale-out file and object storage, which is expected to grow to be a $15.7 billion market by 2025, according to IDC.

We understand that, over time, existing Quantum services, such as StorNext, will be ported to run on Myriad.

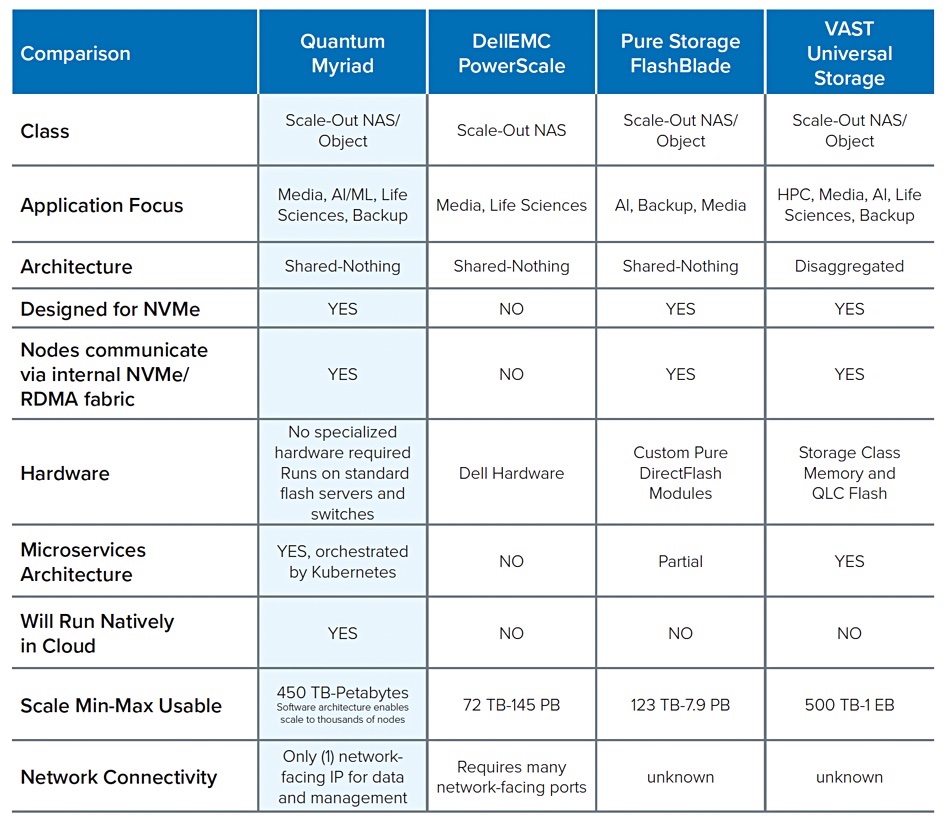

We have not seen performance numbers for Myriad or pricing. Our understanding is that Myriad will be generally competitive with all-flash systems from Dell (PowerStore), Hitachi Vantara, HPE, NetApp, Pure Storage, StorOne and VAST Data. A Quantum competitive positioning table confirms this general idea:

As the Myriad software runs on commodity hardware and is containerized, it can, in theory, run on public clouds and so give Quantum a hybrid on-prem/public cloud capability.

Myriad is available now for early access customers and is planned for general availability in the third quarter of this year. Quantum has published an architectural white paper, a competitive positioning paper, and a product datasheet.

Bootnote

Quantum appointed Brian “Beepy” Pawlowski as chief development officer in December 2020. He came from a near three-year stint as CTO at composable systems startup DriveScale, bought by Twitter. Before that he spent three years at Pure Storage, initially as a VP and chief architect and then as an advisor. Before that he ran the FlashRay all-flash array project at NetApp. FlashRay was canned in favor of the SolidFire acquisition. Now NetApp has pulled back from SolidFire and Beepy’s Myriad will compete with NetApp’s ONTAP all-flash arrays. What goes around comes around.

Beepy joined NetApp as employee number 18 in a CTO role and was at Sun before NetApp.