Storj says its network has grown by a third in four months and that the company is expanding into India. It adds that, under some conditions, its decentralized storage can be faster than content distribution networks (CDNs) and standard AWS for distributing generative AI model data.

The company provides cloud storage using spare capacity in datacenters around the world, with more than 24,000 points of presence in over 100 countries. Storj treats this spare capacity as a globally distributed store, sharding incoming files and objects, typically into 80 x 64MB fragments, and spreading them, plus error-correcting erasure codes, around the centers. It competes with Amazon, Azure, Google, Backblaze and other CSPs as a cloud storage provider, offering, it says, lower costs and higher-speed retrievals. A customer’s data is retrieved from the subset of datacenters nearest to the customer that hold the file fragments. These operate in parallel, and the rebuilt file is delivered to the customer.
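The shard-and-protect idea described above can be illustrated with a minimal Python sketch. It is not Storj’s actual scheme: it assumes a single XOR parity fragment, which is far simpler than the erasure codes a production network would use and can recover only one lost fragment. The function names `shard` and `rebuild` are hypothetical, chosen for illustration only.

```python
from __future__ import annotations

from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """XOR two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def shard(data: bytes, k: int) -> tuple[list[bytes], bytes]:
    """Split data into k equal-size fragments (zero-padded) plus one XOR parity fragment."""
    size = -(-len(data) // k)  # ceiling division
    padded = data.ljust(size * k, b"\0")
    fragments = [padded[i * size:(i + 1) * size] for i in range(k)]
    parity = reduce(xor_bytes, fragments)
    return fragments, parity


def rebuild(fragments: list[bytes | None], parity: bytes, length: int) -> bytes:
    """Reassemble the object, recovering at most one missing fragment from parity."""
    missing = [i for i, f in enumerate(fragments) if f is None]
    if len(missing) > 1:
        raise ValueError("single parity can recover only one lost fragment")
    if missing:
        survivors = [f for f in fragments if f is not None]
        fragments = list(fragments)
        # XOR of the parity with all surviving fragments yields the lost one.
        fragments[missing[0]] = reduce(xor_bytes, survivors, parity)
    return b"".join(fragments)[:length]


if __name__ == "__main__":
    payload = b"model weights " * 10
    pieces, parity = shard(payload, k=4)
    pieces[2] = None  # simulate one storage node being unavailable
    assert rebuild(pieces, parity, len(payload)) == payload
```

In a real decentralized store, the fragments would sit on different nodes and be fetched in parallel. The sketch keeps everything in memory to show only the split, protect, and rebuild steps.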

COO John Gleeson told us: “Storj’s network can be up to three times faster than a CDN for software distribution.”

He said the network is also being used to hold the data for generative AI models, open source ones in particular. Storj says this data is needed in 100GB to 10TB amounts for model creation and fine-tuning, and in 1MB or smaller amounts for model execution. Data sets and models are transferred between storage and training environments. Ultimately any output of AI model workloads is delivered over a private network or the public internet.

We understand Storj has won a 6PB deal against a major object storage player.

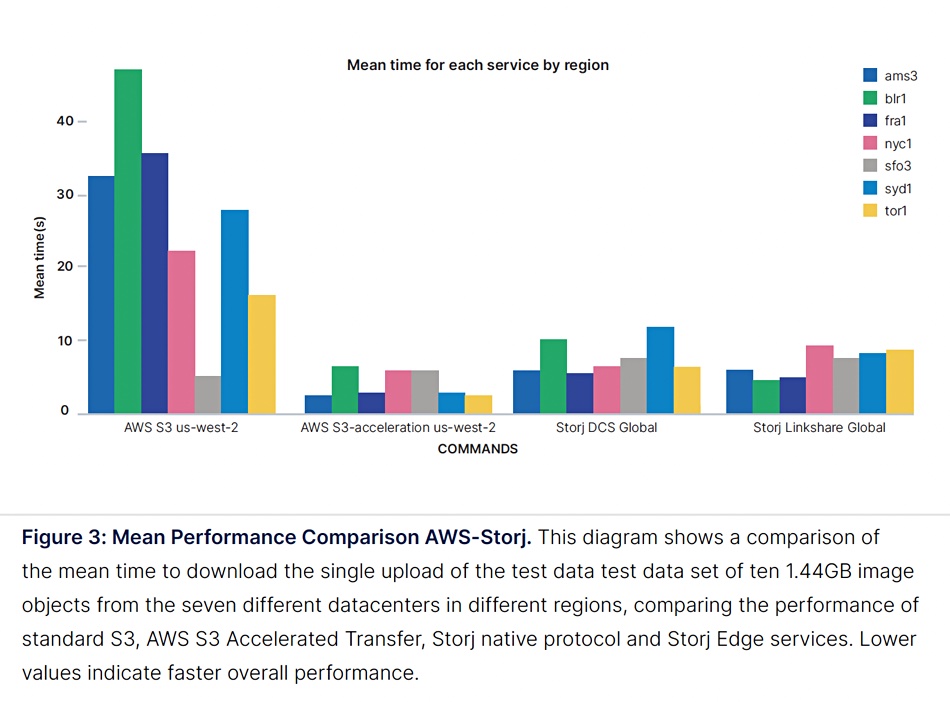

Storj says it is a viable alternative to the hyperscaler clouds for model and training data set storage and distribution. A test performed as part of Storj’s own white paper appeared to show that Storj performance is better than standard Amazon S3, although slower than S3 Accelerated Transfer:

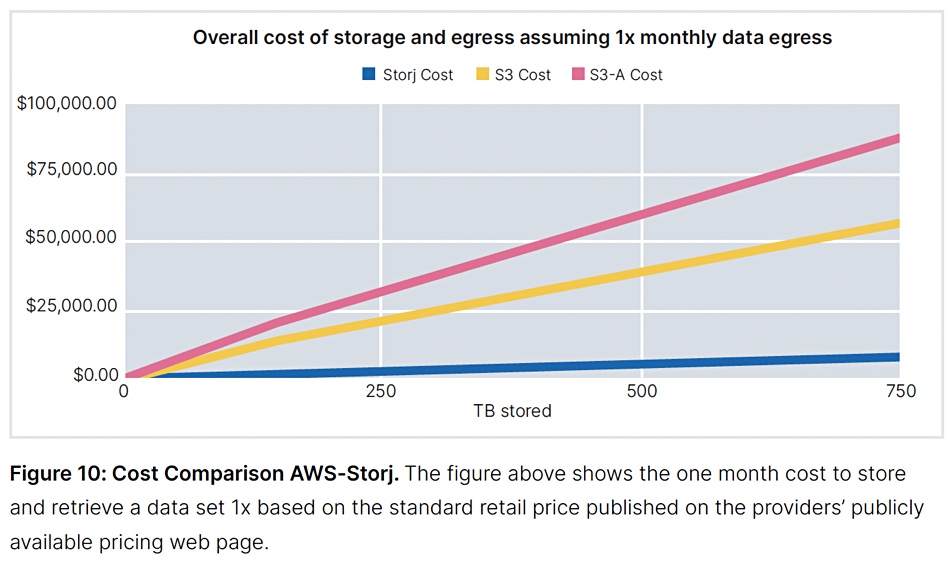

Storj storage costs less than Amazon S3, another of its own charts appears to indicate:

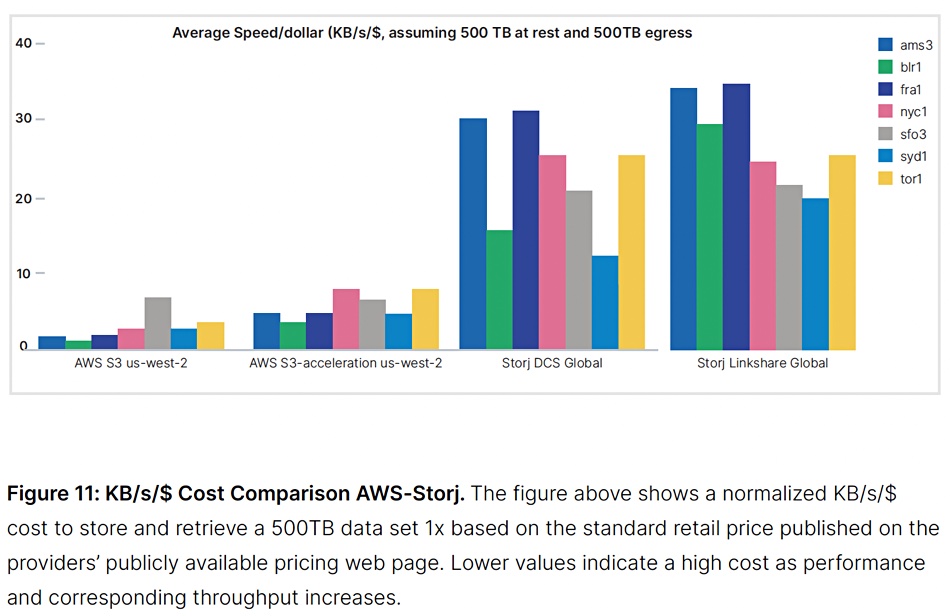

This means, it says, that when the mean download time is combined with cost to provide a download speed per dollar measure, Storj outperforms Amazon.
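The article does not give the white paper’s exact formula for this measure. One plausible reading, sketched below in Python, is throughput divided by the cost of the download. The function name `speed_per_dollar` and the figures in the usage example are hypothetical placeholders, not values from Storj’s or Amazon’s results.

```python
def speed_per_dollar(size_gb: float, mean_download_s: float, cost_usd: float) -> float:
    """Throughput in GB/s achieved per dollar spent on the download.

    Assumed definition: (size / mean download time) / cost.
    """
    if mean_download_s <= 0 or cost_usd <= 0:
        raise ValueError("download time and cost must be positive")
    return (size_gb / mean_download_s) / cost_usd


if __name__ == "__main__":
    # Hypothetical inputs for two unnamed providers, for illustration only.
    provider_a = speed_per_dollar(size_gb=10, mean_download_s=100, cost_usd=0.5)
    provider_b = speed_per_dollar(size_gb=10, mean_download_s=80, cost_usd=1.0)
    print(f"provider A: {provider_a:.3f} GB/s per dollar")
    print(f"provider B: {provider_b:.3f} GB/s per dollar")
```

Under this definition a slower but cheaper service can still score higher, which is why the measure depends on both the download time and the price.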

Storj’s channel is an important growth driver, with one new channel partner reporting a 500 percent revenue increase from one year to the next. The company also said it can add capacity to its network faster than a big CSP could buy, install and deploy the same amount of capacity.

A white paper describing Storj’s AI model training data storage capabilities is available here (registration required). The charts above were sourced from this paper.

Comment

The decentralized storage concept, shorn of its prior crypto-currency payment terms and fiat currency abolition ambitions, is becoming recognized as a realistic cloud storage technology. Suppliers like Storj, Cubbit, and Impossible Cloud are demonstrating that it is reliable, fast, and secure, and can be used without application software changes.

Their big advantage over the tier 1 and 2 CSPs is that they don’t have to build and operate datacenters. AWS, Azure, Google and others, who do bear that cost, could respond to this disruptive innovation by setting up their own decentralized storage tier using spare capacity in third-party datacenters. Indeed, they could use spare capacity in their existing customers’ datacenters, offering those customers lower-priced cloud storage in return instead of a cash payment.