IBM has added a 3500 model to its Spectrum Scale Elastic Storage System (ESS) portfolio, with a faster controller CPU and more throughput: up to 91GB/sec sequential read.

The ESS 3000 series chassis are 2U x 24-bay server storage boxes tailored for running Spectrum Scale, IBM’s scale-out parallel access file system. They use NVMe SSDs and have dual active-active controllers with either 100Gbit Ethernet or 200Gbit HDR InfiniBand ports. The ESS 3000 was introduced in October 2019, with the 3200 announced in April 2021. The 3500 has come along just over 12 months later.

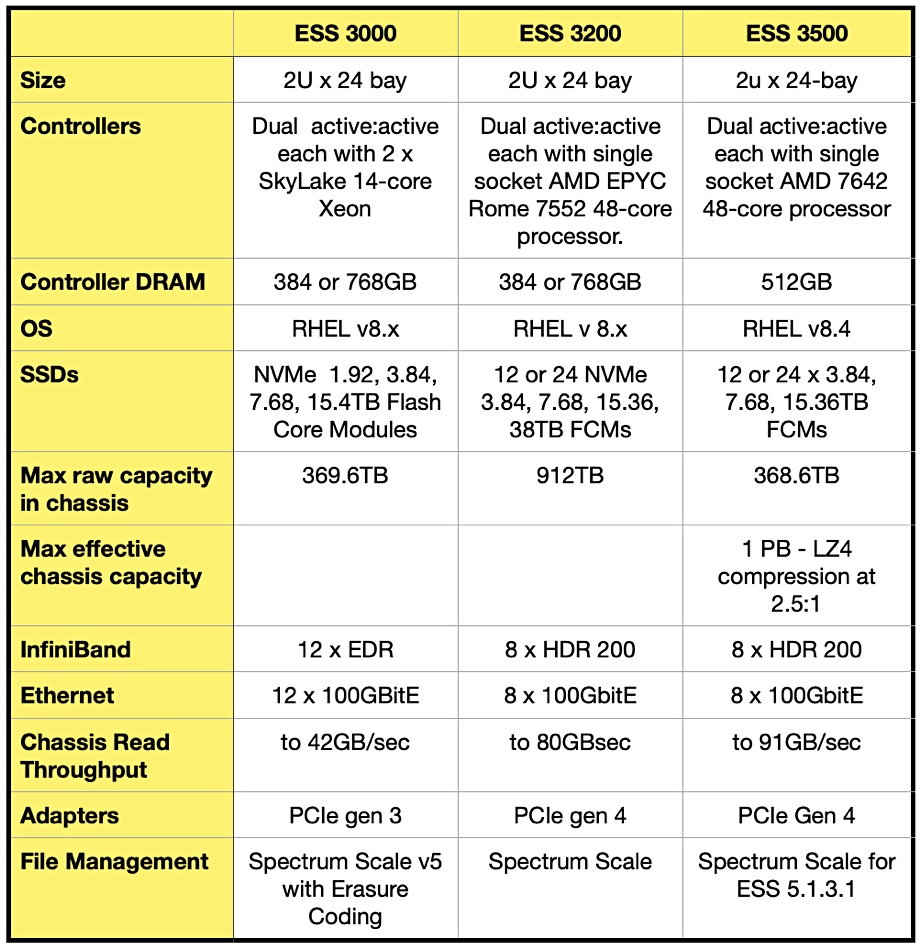

There is no formal IBM announcement, but a 3500 datasheet provides details which we’ve used to build a table comparing the 3000, 3200, and 3500 ESS boxes:

One obvious change is that the 3500 has less than half the raw capacity of the prior ESS 3200, because it only supports 3.84, 7.68, or 15.36TB drives, which are IBM’s proprietary Flash Core Modules (FCMs). The 3200 supports these capacities plus a larger 38TB FCM, taking its total chassis capacity to 912TB versus the 3500’s 368.6TB. IBM does say that, with LZ4 compression, the 3500’s effective capacity is 1PB.
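The capacity figures follow from the 24-bay chassis and the largest supported drive in each model. Here is a minimal Python sketch of that arithmetic. The function and variable names are ours, and the compression ratio is only what the 1PB figure implies if we assume decimal units (1PB = 1,000TB); IBM does not state a ratio in the material quoted here.

```python
BAYS = 24  # drive bays per 2U chassis, as described in the article


def raw_capacity_tb(largest_drive_tb: float, bays: int = BAYS) -> float:
    """Raw chassis capacity with every bay holding the largest supported drive."""
    return largest_drive_tb * bays


ess3500_tb = raw_capacity_tb(15.36)  # largest drive the 3500 supports
ess3200_tb = raw_capacity_tb(38.0)   # largest drive the 3200 supports

# Fraction of the 3200's raw capacity that the 3500 offers.
capacity_ratio = ess3500_tb / ess3200_tb

# Assumption: 1PB effective is taken as 1,000TB (decimal units).
implied_compression = 1000.0 / ess3500_tb

print(f"ESS 3500 raw capacity: {ess3500_tb:.1f}TB")
print(f"ESS 3200 raw capacity: {ess3200_tb:.1f}TB")
print(f"3500 as a share of 3200: {capacity_ratio:.0%}")
print(f"Implied compression ratio for 1PB effective: {implied_compression:.2f}:1")
```

On these numbers the 3500 holds roughly 40 per cent of the 3200's raw capacity, and the 1PB effective claim implies a data reduction ratio of about 2.7:1.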

The controller CPU and DRAM have also changed. The 3500 has less DRAM, 512GB, than the 3200’s 768GB maximum, but it has a faster CPU, an AMD EPYC 7642.

This 7642 processor has 48 cores and runs at 2.30GHz versus the EPYC 7552 used in the ESS 3200, with its 48 cores running at a slightly slower 2.20GHz. The 7642 also has an all-core turbo frequency of 2.80GHz versus the 7552’s 2.50GHz, and boasts a 256MB L3 cache, 64MB larger than the 7552’s 192MB.

Our thinking is that IBM is optimizing the 3500 for throughput, and its maximum 91GB/sec sequential read rating is 14 per cent faster than the 3200’s 80GB/sec rating. An IBM insider told us it basically has “more horsepower for GPU computing.”
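The 14 per cent figure is simply the relative increase between the two sequential read ratings. The short Python sketch below reproduces it and, as a rough illustration, estimates how long a full sequential pass over a dataset would take at each rate. The 100TB dataset size is a hypothetical value of ours, and the estimate assumes the peak rating is sustained throughout, which real workloads may not achieve.

```python
def percent_increase(new: float, old: float) -> float:
    """Relative increase of `new` over `old`, in per cent."""
    return (new - old) / old * 100.0


def seconds_to_read(dataset_tb: float, rate_gb_per_sec: float) -> float:
    """Time for one sequential pass, assuming the peak rate is sustained."""
    return dataset_tb * 1000.0 / rate_gb_per_sec  # 1TB = 1,000GB (decimal)


ESS3500_GBPS = 91.0  # maximum sequential read rating from the article
ESS3200_GBPS = 80.0

uplift = percent_increase(ESS3500_GBPS, ESS3200_GBPS)
print(f"Sequential read uplift: {uplift:.2f} per cent")

DATASET_TB = 100.0  # hypothetical dataset size, for illustration only
for name, rate in (("ESS 3200", ESS3200_GBPS), ("ESS 3500", ESS3500_GBPS)):
    minutes = seconds_to_read(DATASET_TB, rate) / 60.0
    print(f"{name}: {minutes:.1f} minutes per pass over {DATASET_TB:.0f}TB")
```

The uplift works out to 13.75 per cent, which rounds to the 14 per cent quoted.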

With the ESS 3200 being used in an Nvidia SuperPOD AI system configuration, we can see that a faster 3500 would improve the SuperPOD’s performance beyond that of a 3200 system, and get machine learning models trained faster.