Kioxia claims direct-attached performance from network-attached devices is no longer a thing of storage architects’ dreams.

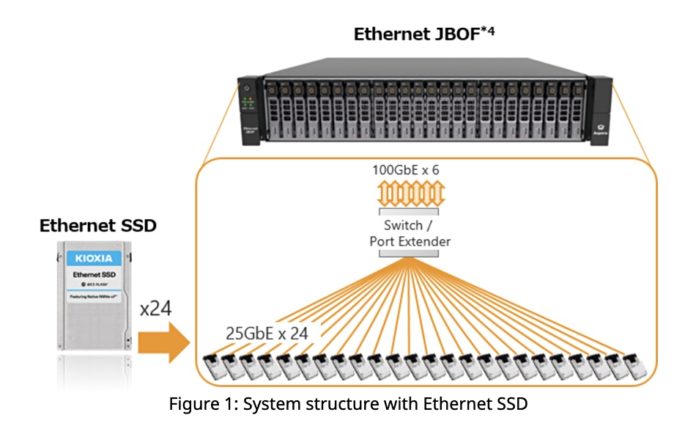

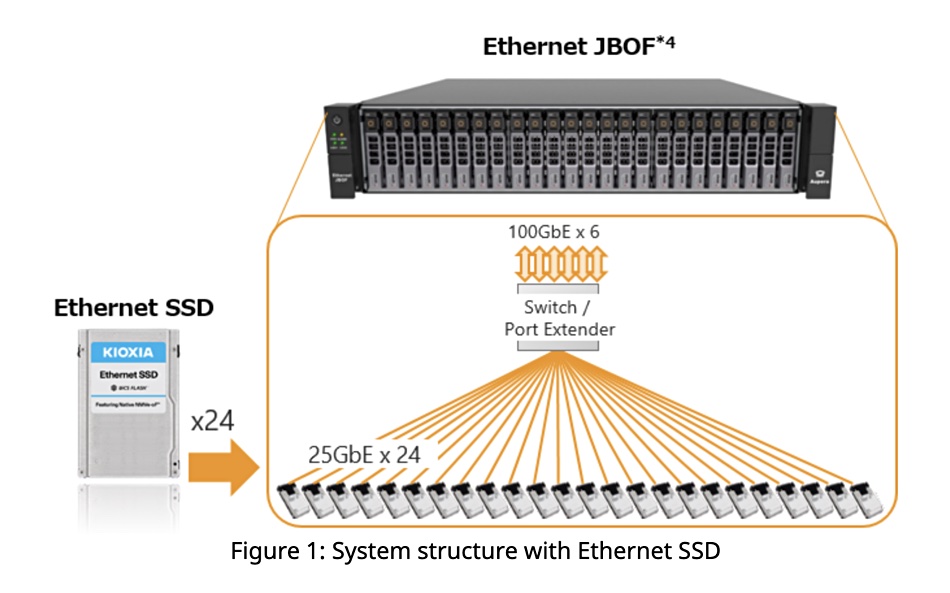

The company has planted a Marvell Ethernet controller directly onto an SSD and fitted 24 of these drives into an EBOF (Ethernet Bunch of Flash drives) chassis.

The drives are accessed as Ethernet devices, with an NVMe twist. An EBOF is simpler to deploy than a JBOF (just a bunch of flash) platform, Kioxia argues, because it needs an integrated Ethernet switch only. A JBOF, by contrast, requires a box-controlling CPU, DRAM and Fibre Channel host bus adapters.

The Ethernet SSD and EBOF systems are intended for applications and workloads that need disaggregated, low-latency, high-bandwidth and highly available storage. Kioxia is positioning the EBOF as an affordable, well-performing box, rather than a high-performance system, for edge computing, enterprise and cloud data centres.

Thad Omura, VP for marketing at Marvell’s Flash Business Unit, supplied a quote: “The native Ethernet SSD combined with our switches and controllers offer data centres an EBOF solution that lowers their total cost of ownership, increases performance and reduces power as compared to alternative JBOF solutions.”

Alvaro Toledo, VP of SSD marketing and product planning at KIOXIA America, said: “The Ethernet-attached storage ecosystem is an idea whose time has come. … We are enabling the true potential of NVMe over Fabrics. This opens up a new world of possibilities for cloud data centre operators, software-defined storage providers, and server and storage system OEMs.”

Kioxia needs the EBOF to help build demand for its Ethernet SSDs and is collaborating with Foxconn-Ingrasys and Accton to bring EBOF systems to market. We suspect NVMe over TCP/IP will be supported in the future, enabling the use of cheaper, ordinary, non-converged Ethernet.

Ethernet SSD

In the Kioxia setup, Ethernet SSDs are addressed as NVMe devices using RoCE (RDMA over Converged Ethernet, where RDMA stands for Remote Direct Memory Access). Any NVMe-oF-capable host server can use them.

Information about the Ethernet SSD is sketchy. The devices are supplied in 1.92TB, 3.84TB and 7.68TB capacities and deliver 670,000 random read IOPS each, equivalent to 16 million-plus IOPS per 24-drive chassis.
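The per-chassis figure is straightforward multiplication. A minimal sketch, assuming a fully populated 24-slot chassis and perfectly linear scaling across drives (an idealisation; the function and constant names are ours, not Kioxia's):

```python
DRIVES_PER_CHASSIS = 24     # drives in the EBOF chassis, per the article
IOPS_PER_DRIVE = 670_000    # quoted random read IOPS per Ethernet SSD


def chassis_iops(drives: int = DRIVES_PER_CHASSIS,
                 per_drive: int = IOPS_PER_DRIVE) -> int:
    """Aggregate random read IOPS, assuming ideal linear scaling."""
    return drives * per_drive


print(f"{chassis_iops():,} IOPS")  # 16,080,000 IOPS
```

Real arrays rarely scale perfectly, so this is an upper bound rather than a measured result.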

The SSD has single or dual 25GbitE links and supports RoCE v2 RDMA, NVMe-oF 1.1 and NVMe 1.4. The drive has a Marvell 88SN2400 controller and supports IPv4 and IPv6 plus the Redfish and NVMe-MI storage management specifications.
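As a rough sanity check, the quoted 670,000 IOPS can be set against a single 25GbitE link. The sketch below assumes 4KiB reads (Kioxia has not stated the block size for this drive) and ignores Ethernet, RoCE and NVMe-oF protocol overhead, so it counts payload only:

```python
IOPS_PER_DRIVE = 670_000   # quoted random read IOPS
BLOCK_BYTES = 4096         # ASSUMPTION: 4 KiB I/O size, not confirmed for this drive
LINK_GBITS = 25            # one 25GbitE link


def payload_gbits(iops: int, block_bytes: int) -> float:
    """Payload bandwidth in Gbit/s for a given IOPS rate and block size."""
    return iops * block_bytes * 8 / 1e9


demand = payload_gbits(IOPS_PER_DRIVE, BLOCK_BYTES)
print(f"payload: {demand:.2f} Gbit/s, "
      f"{demand / LINK_GBITS:.0%} of one link")  # payload: 21.95 Gbit/s, 88% of one link
```

Under these assumptions a single link is close to full at the rated IOPS, which may be one reason a dual-link option exists.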

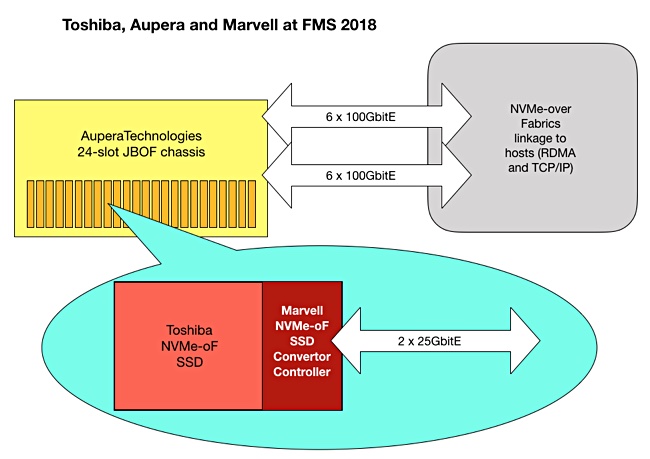

We suspect it has a 20µs latency. We do not know what type of NAND it uses, but suspect it is 64-layer 3D NAND in TLC (3 bits/cell) format, because this was the case in a demo at the FMS 2018 event by Toshiba, Kioxia’s precursor company.

The chassis is a 2U, 24-slot box supporting 2.5-inch form factor drives. Each chassis supports 2.4 Tbit/s of connectivity throughput, which can be split between network connectivity and daisy-chaining additional EBOFs.
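To put the 2.4 Tbit/s figure in context, the raw drive-side link capacity can be totalled for single-link and dual-link configurations. This is only an illustrative budget: it assumes every slot is populated with 25GbitE drives, and it does not describe how the integrated switch actually allocates its ports.

```python
DRIVES = 24             # slots in the chassis
LINK_GBITS = 25         # per-link speed of each Ethernet SSD
CHASSIS_GBITS = 2_400   # quoted 2.4 Tbit/s connectivity throughput


def drive_side_gbits(links_per_drive: int,
                     drives: int = DRIVES,
                     link_gbits: int = LINK_GBITS) -> int:
    """Total raw link capacity of all drives, in Gbit/s."""
    return drives * links_per_drive * link_gbits


for links in (1, 2):
    total = drive_side_gbits(links)
    print(f"{links} link(s) per drive: {total} Gbit/s "
          f"({total / CHASSIS_GBITS:.0%} of the quoted chassis figure)")
```

With dual links the drives account for 1.2 Tbit/s, half of the quoted figure.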

Kioxia, formerly called Toshiba Memory, promoted direct Ethernet-addressed drives and an Ethernet-accessed chassis in 2018. Each drive was rated at 666,666 IOPS and the chassis achieved 16 million 4K random read IOPS from its 24 drives – claimed at the time to be the fastest random read IOPS rate recorded by an all-flash array.

Two years later that performance level looks good-ish but not great, especially when compared to Kioxia’s own CD6 NVMe SSDs using its 96-layer 3D NAND in TLC format. These have the same capacities as well as a 960GB entry level and 15.3TB and 30.72TB upper levels. They operate at up to 1.4 million random read IOPS across their PCIe 4.0 interface, more than twice the Ethernet SSD’s speed.

We have seen the Ethernet-addressed storage drive concept before, notably in Seagate’s Kinetic disk drives, which implemented an object storage scheme using Gets and Puts to read and write data. This technology failed to take off, partly because the drives required host application software changes.

Kioxia is sampling the Ethernet SSD with customers. There is no word on general availability for the component or the EBOF.