Implementing Memcache with NAND or 3D XPoint produces near-DRAM cache performance at a much lower cost, IBM claims.

Memcache is an open source distributed memory caching system launched in 2003. Databases are much larger these days, and DRAM has remained expensive – indeed, prices rose 47 per cent from 2016 to 2017, according to IBM.

More than 700 applications use Memcache, and many public clouds offer a managed Memcache service. LinkedIn, Airbnb and Twitter, for instance, use Memcache to avoid hitting databases on storage, and so reduce query response times.

IBM drives memory to uDepot

IBM Zurich researchers have built Memcache implementations using NVMe flash and Optane (3D XPoint). They say these could provide close-to-DRAM performance for less money – and retain their contents across a power loss.
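Contents survive a power loss on flash or Optane because values are written to a persistent device rather than held only in DRAM. The sketch below is a generic illustration of that idea. It is not uDepot's actual design or on-device format. It assumes a simple append-only log file plus an in-memory index that is rebuilt by scanning the log on restart. The `LogKV` class is hypothetical.

```python
import os
import tempfile
from typing import Dict, Optional


class LogKV:
    """Hypothetical toy persistent key:value store (not uDepot's design).

    Values are appended to a log file; an in-memory dict maps each key to
    the byte offset of its latest record. Keys must not contain tabs or
    newlines, and values must not contain newlines.
    """

    def __init__(self, path: str) -> None:
        self.path = path
        self.index: Dict[str, int] = {}
        open(path, "ab").close()  # create the log file if it does not exist
        self._rebuild_index()

    def _rebuild_index(self) -> None:
        # Scan the log from the start; a later record for a key overwrites
        # the index entry of an earlier one, so the newest value wins.
        with open(self.path, "rb") as f:
            offset = 0
            for line in f:
                key = line.split(b"\t", 1)[0].decode()
                self.index[key] = offset
                offset += len(line)

    def put(self, key: str, value: str) -> None:
        with open(self.path, "ab") as f:
            offset = f.seek(0, os.SEEK_END)  # record starts at current end of log
            f.write(f"{key}\t{value}\n".encode())
            f.flush()
            os.fsync(f.fileno())  # force the record onto stable storage
        self.index[key] = offset

    def get(self, key: str) -> Optional[str]:
        offset = self.index.get(key)
        if offset is None:
            return None
        with open(self.path, "rb") as f:
            f.seek(offset)
            line = f.readline().rstrip(b"\n")
        return line.split(b"\t", 1)[1].decode()


path = os.path.join(tempfile.mkdtemp(), "kv.log")
store = LogKV(path)
store.put("session:1", "cart=3")
store.put("session:1", "cart=4")

# Simulate a restart: a fresh instance rebuilds its index from the log.
restarted = LogKV(path)
print(restarted.get("session:1"))  # cart=4
```

Only the index lives in DRAM; the data itself is read back from the log, which is why a fresh instance can recover the latest value after a restart.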

They have built a key:value store called uDepot, tailored for NVMe flash and also for Optane. Using flash ($0.4/GiB), users can expect 20x lower costs than DRAM (c$10/GiB); using 3D XPoint ($1.25/GiB), they can expect 4.5x lower hardware costs. According to the IBMers, this comes without sacrificing performance, and with greater scalability of cloud cache capacity.
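The quoted per-GiB prices can be turned into raw media costs with a little arithmetic. The sketch below uses only the prices in the article. The 1,024 GiB (1 TiB) cache size is an arbitrary example. It ignores servers, networking and everything else. On media price alone, flash works out 25x cheaper than DRAM and 3D XPoint 8x cheaper. Both figures are higher than IBM's headline 20x and 4.5x, which suggests (an assumption; the article does not say) that IBM's figures account for more than the media price.

```python
# Per-GiB media prices quoted in the article (US dollars).
PRICE_PER_GIB = {"DRAM": 10.0, "NVMe flash": 0.4, "3D XPoint": 1.25}


def media_cost(medium: str, capacity_gib: float) -> float:
    """Raw media cost of a cache of the given capacity (media only)."""
    return PRICE_PER_GIB[medium] * capacity_gib


def ratio_vs_dram(medium: str) -> float:
    """How many times cheaper than DRAM a medium is, per GiB."""
    return PRICE_PER_GIB["DRAM"] / PRICE_PER_GIB[medium]


for medium in PRICE_PER_GIB:
    print(f"{medium}: ${media_cost(medium, 1024):,.2f} per TiB, "
          f"{ratio_vs_dram(medium):.1f}x cheaper than DRAM per GiB")
```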

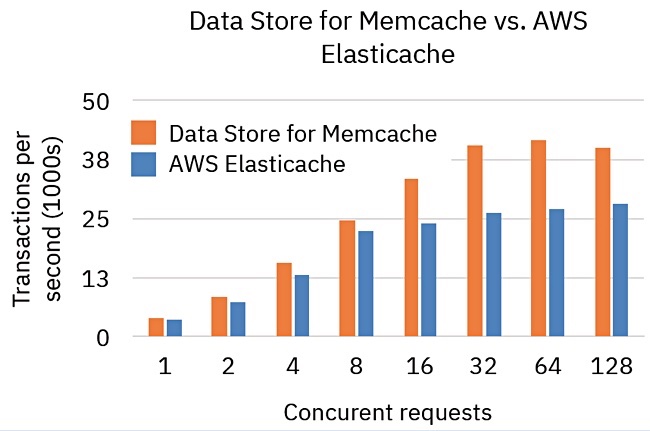

They implemented uDepot on NVMe flash SSDs as an IBM Cloud service, called Data Store for Memcache, and used the memaslap benchmark to compare it against the free tier of Amazon's AWS ElastiCache, which uses DRAM.

They found Data Store for Memcache delivered 33 per cent more throughput on average (across all concurrent-request data points), as this transactions-per-second chart shows:

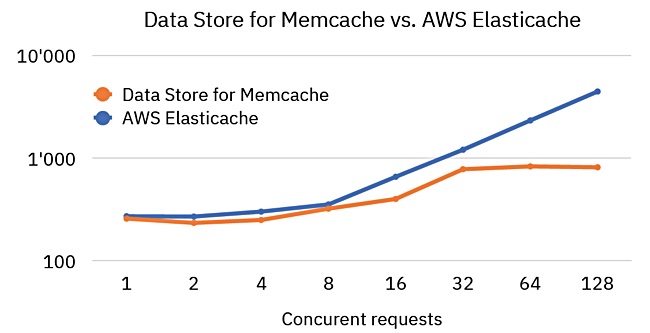

A latency comparison chart from IBM shows that Data Store for Memcache's latency is close to ElastiCache's.

Data Store for Memcache is available as a no-charge beta offering from the IBM Cloud.

Memcache for questions

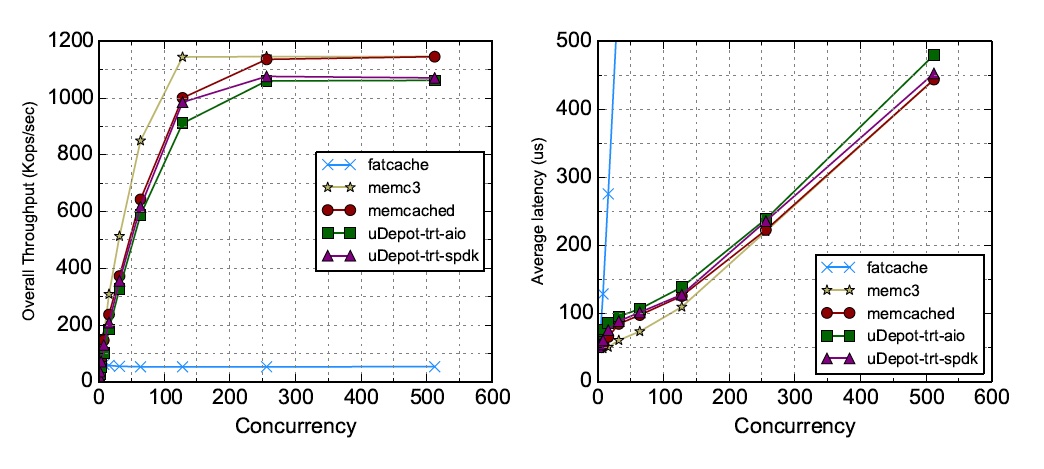

IBM has also implemented uDepot using two Intel Optane 3D XPoint drives – Intel P4800X 375GB – and, again using memaslap, compared it with DRAM and flash Memcache implementations. Five implementations were compared in total:

- uDepot Optane with trt-spdk backend

- uDepot Optane with trt-aio backend

- memcached with DRAM

- MemC3 – a newer Memcache implementation with DRAM

- Fatcache – a Memcache implementation designed for SSDs but run here on Optane media

In throughput terms (left-hand chart), uDepot comes close to memcached, while MemC3 outperforms memcached. Fatcache, with its SSD-oriented code, lags far behind.

Fatcache fares poorly on latency too (right-hand chart). It caches data in DRAM, which gives it low latency at low queue depths, but its latency climbs rapidly as the number of concurrent client requests rises.

The DRAM-based memcached and MemC3 and the Optane-based uDepot variants are closely matched on latency.

At 128 clients, the measured latency and throughput figures are:

- MemC3 – 110μs and 1,145kops/s

- memcached – 126μs and 1,001kops/s

- uDepot trt-spdk – 128μs and 985kops/s

- uDepot trt-aio – 139μs and 911kops/s

- Fatcache – 2,418μs and 53kops/s
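These figures can be sanity-checked with Little's law. Assume each of the 128 clients keeps exactly one request in flight at a time. This is a closed-loop assumption; the article does not describe memaslap's exact settings. Under it, throughput should be roughly the number of clients divided by the mean latency. A short check against the list above:

```python
# Latency (microseconds) and throughput (kops/s) at 128 clients, from the article.
RESULTS = {
    "MemC3": (110, 1145),
    "memcached": (126, 1001),
    "uDepot trt-spdk": (128, 985),
    "uDepot trt-aio": (139, 911),
    "Fatcache": (2418, 53),
}
CLIENTS = 128  # assumption: one outstanding request per client (closed loop)


def predicted_kops(latency_us: float, clients: int = CLIENTS) -> float:
    """Little's law: throughput = concurrency / latency, in kops/s."""
    ops_per_second = clients / (latency_us * 1e-6)
    return ops_per_second / 1000


for name, (latency_us, measured_kops) in RESULTS.items():
    predicted = predicted_kops(latency_us)
    print(f"{name}: predicted {predicted:,.0f} kops/s, "
          f"measured {measured_kops:,} kops/s")
```

Every prediction lands within about 2 per cent of the measured throughput, so the latency and throughput numbers are consistent with each other under this assumption.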

The IBM researchers conclude that memcached on DRAM can be replaced by uDepot on Optane with negligible impact on performance.
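One way to quantify "negligible" is to compute each uDepot backend's latency increase and throughput drop relative to memcached at 128 clients. The sketch below does this using only the figures listed above.

```python
# (latency in microseconds, throughput in kops/s) at 128 clients, from the article.
MEMCACHED = (126, 1001)
UDEPOT = {"trt-spdk": (128, 985), "trt-aio": (139, 911)}


def overhead_vs_memcached(latency_us: float, kops: float) -> tuple:
    """Return (latency increase %, throughput drop %) relative to memcached."""
    base_latency, base_kops = MEMCACHED
    latency_increase = (latency_us - base_latency) / base_latency * 100
    throughput_drop = (base_kops - kops) / base_kops * 100
    return latency_increase, throughput_drop


for backend, (latency_us, kops) in UDEPOT.items():
    lat_pct, thr_pct = overhead_vs_memcached(latency_us, kops)
    print(f"uDepot {backend}: +{lat_pct:.1f}% latency, -{thr_pct:.1f}% throughput")
```

By this measure the trt-spdk backend is within about 1.6 per cent of memcached on both metrics, while trt-aio trails by roughly 9 to 10 per cent.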

How does uDepot Optane throughput compare with uDepot flash throughput? Reading from the first chart, uDepot flash throughput at 128 clients is about 40,000; reading off the uDepot Optane chart above, it is around 140,000, or 3.5 times better.

These numbers suggest that NVMe Optane drives could be a worthwhile replacement for DRAM in Memcache applications.