A Databricks-sponsored MIT Tech Review report says businesses adopting generative AI will become 25 percent more efficient.

Databricks pushes the idea that AI processing needs access to a single, all-encompassing data repository: its lakehouse, which combines data warehouse and data lake attributes and supports the streaming data needed for real-time analytics. The MIT Technology Review Insights “Laying the foundation for data- and AI-led growth” report surveyed 600 CIOs, CTOs, CDOs and tech leaders at large public and private sector organizations.

It declares: “Enterprise adoption of AI is ready to shift into higher gear. The capabilities of generative AI have captured management attention across the organization, and technology executives are moving quickly to deploy or experiment with it.”

The report’s exec summary says organizations are sharply focused on retooling for a data and AI-driven future. Everything from data architecture to AI-enabled automation is on the table, as technology executives strive to find new efficiencies and new sources of growth. At the same time, the pressure to democratize the power of data and AI creates renewed urgency to bolster data governance and security.

It found that 88 percent of surveyed organizations are using generative AI, with 26 percent investing in and adopting it, and another 62 percent experimenting with it. The majority (58 percent) are taking a hybrid approach, using vendors’ large language models (LLMs) for some use cases and building their own models for others when IP ownership, privacy, security, and accuracy requirements are tighter.

Some 81 percent of survey respondents expect AI to boost efficiency in their industry by at least 25 percent in the next two years. A third say the gain will be double that. Every organisation surveyed will boost spending on modernising data infrastructure and adopting AI during the next year, and for nearly half (46 percent), the budget increase will exceed 25 percent.

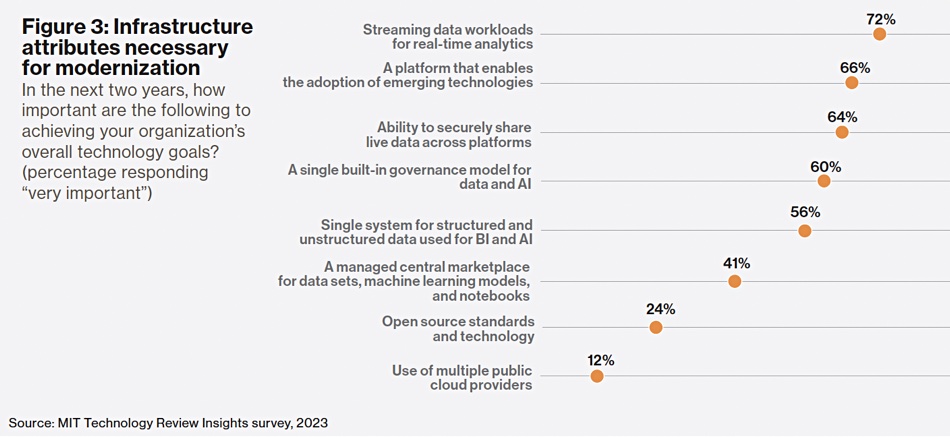

Reassuringly for Databricks, nearly three quarters of surveyed organizations have adopted a lakehouse architecture, and almost all of the rest expect to do so in the next three years. Survey respondents say they need their data architecture to support streaming data workloads for real-time analytics (a capability deemed “very important” by 72 percent), easy integration of emerging technologies (66 percent), and sharing of live data across platforms (64 percent). Ninety-nine percent of lakehouse adopters say the architecture is helping them achieve their data and AI goals, and 74 percent say the benefits are “significant.”

As for data governance, 60 percent of respondents say a single governance model for data and AI is “very important.”

Samuel Bonamigo, SVP and GM EMEA at Databricks, put out a statement: “These findings indicate that investments in generative AI are no longer optional for business success – and leaders across the globe have firmly taken notice.”

He said: “Particularly in EMEA, we are not only seeing increased lakehouse adoption across the board, but also a high degree of AI optimism from executives – for instance, 63 percent of EMEA CIOs were ‘very optimistic’ about the business value that AI could bring to their organisations within two years. 88 percent of organisations are already investing and adopting generative AI. It’s clear that this momentum is showing no signs of slowing.”

Comment

This Databricks MIT Tech Review report basically tells undecided businesses to get on board the gen AI train or be left behind by those already aboard. This is a good thing for Databricks, whose own momentum needs to continue; it’s hoping punters will get a Databricks lakehouse before they start using their data to train gen AI models.

It’s not alone in its lakehouse pitch. Dell’s multi-faceted gen AI activities include supplying a lakehouse as well, which strengthens the idea that lakehouse tech is the best way of storing, managing and arranging data access for AI model training.