Nvidia’s BlueField-3 DPU is being added to the network stack in Oracle’s public cloud – OCI (Oracle Cloud Infrastructure) – to improve its application performance.

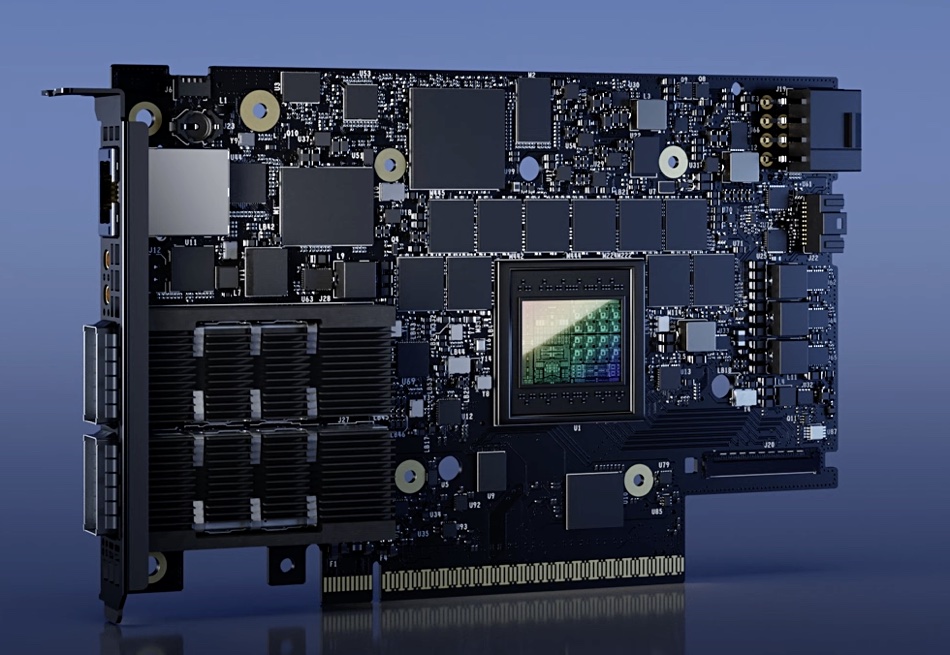

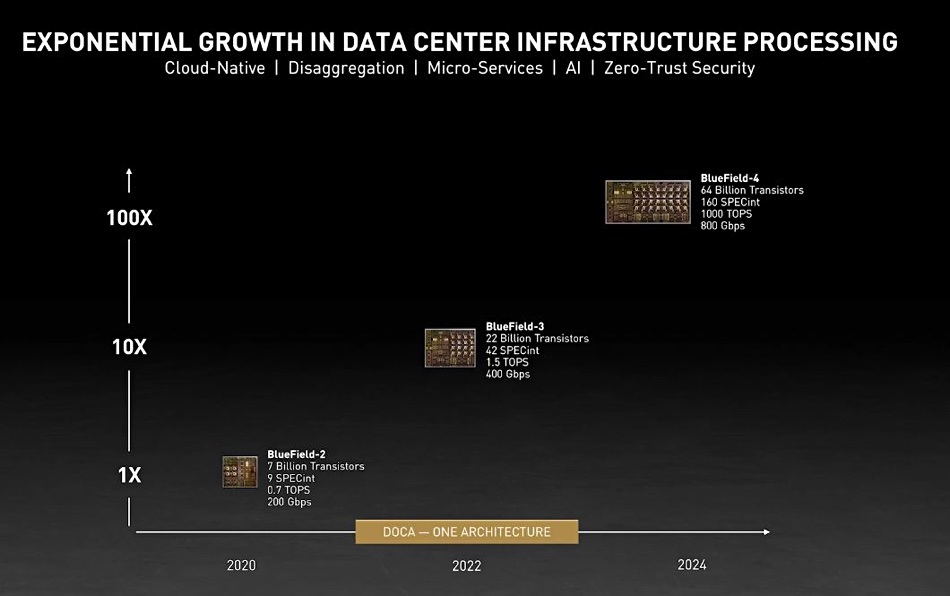

BlueField-3, with 22 billion transistors, is Nvidia’s third-generation SmartNIC, or processor-enhanced network interface card. Such SmartNICs, also called DPUs (Data Processing Units), have embedded computer systems that offload low-level network and other functions from a host CPU or GPU system, freeing the host to spend more of its cycles on core application or graphics processing work. It is a system-on-chip (SoC) device that supports 400Gbps bandwidth and PCIe gen 5, and has 16 Arm A78 cores, twice as many as the preceding BlueField-2 product, which has 7 billion transistors and 200Gbps bandwidth. BlueField-3 includes accelerators for software-defined storage, networking, security, streaming, and line-rate TLS/IPsec cryptography.

The news was revealed at Nvidia’s GPU Technology Conference in San Jose, CA, this week. Nvidia CEO and founder Jensen Huang put it in an AI context, saying: “The age of AI demands cloud data center infrastructures to support extraordinary computing requirements. Nvidia’s BlueField-3 DPU enables this advance, transforming traditional cloud computing environments into accelerated, energy-efficient and secure infrastructure to process the demanding workloads of generative AI.”

Oracle EVP Clay Magouyrk had his say too: “Nvidia BlueField-3 DPUs are a key component of our strategy to provide state-of-the-art, sustainable cloud infrastructure with extreme performance.”

The OCI RDMA Supercluster apparently scales up to 32,000 GPUs. Oracle has highlighted potential future scalability to hundreds of thousands of clustered GPUs, built from clusters of up to 512 Nvidia GPUs connected by a 200Gbps RDMA network, using RDMA over Converged Ethernet (RoCE) across Nvidia ConnectX RDMA NICs.

In his GTC presentation, Huang said: “In a modern software-defined datacenter, the operating system doing virtualization, network, storage, and security can consume nearly half of the datacenter’s CPU cores and associated power. Datacenters must accelerate every workload to reclaim power and free CPUs for revenue-generating workloads. Nvidia BlueField offloads and accelerates the datacenter operating system and infrastructure software.”
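
To put Huang's "nearly half" figure in concrete terms, here is a rough back-of-the-envelope sketch in Python. The function name, the core count, and the fractions are illustrative assumptions on our part, not Nvidia or Oracle figures, and real-world offload gains will depend on the workload.

```python
def cores_reclaimed(total_cores: int,
                    infra_fraction: float,
                    offload_fraction: float = 1.0) -> int:
    """Estimate host CPU cores freed when infrastructure work moves to a DPU.

    total_cores      -- CPU cores in the server (hypothetical input)
    infra_fraction   -- share of cores spent on virtualization, network,
                        storage and security ("nearly half" is just under 0.5)
    offload_fraction -- share of that work the DPU takes over
                        (an assumption; 1.0 means all of it)
    """
    for name, value in (("infra_fraction", infra_fraction),
                        ("offload_fraction", offload_fraction)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    # Round down: a partially freed core is not counted as reclaimed.
    return int(total_cores * infra_fraction * offload_fraction)


if __name__ == "__main__":
    # Hypothetical 64-core server with half its cores on infrastructure work.
    print(cores_reclaimed(64, 0.5))        # full offload
    print(cores_reclaimed(64, 0.5, 0.5))   # only half the work offloaded
```

On those assumed inputs, a 64-core server would get back 32 cores with a full offload and 16 if only half of the infrastructure work moved to the DPU.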

An Nvidia blog mentions “power reductions up to 24 percent on servers using Nvidia BlueField-2 DPUs. In one case, they delivered 54x the performance of CPUs.” Since BlueField-3 has twice the cores and twice the bandwidth of BlueField-2, we might expect larger savings with its use. Nvidia says BlueField-3 has 4x more compute power, up to 4x faster crypto acceleration, 2x faster storage processing and 4x more memory bandwidth than BlueField-2.

The third generation of Nvidia’s OVX computing system is based on its Omniverse Enterprise system for creating and operating metaverse applications such as digital twin simulations. Each OVX server combines BlueField-3 SoCs with four of Nvidia’s L40 GPUs, two ConnectX-7 SmartNICs and Nvidia’s Spectrum Ethernet offering. BlueField-3 is said to offload, accelerate and isolate CPU-intensive infrastructure tasks. Nvidia says that, for deploying Omniverse at datacenter scale, BlueField-3 provides a foundation for running the datacenter control plane, enabling higher performance, limitless scaling, zero-trust security and better economics.

While vendors such as Qumulo are busy singing the praises of COTS (commercial off-the-shelf) hardware, meaning multi-vendor supply of standardized components such as x86 CPUs, Ethernet NICs, Fibre Channel HBAs, disk and solid state drives, etc., vendors such as Nvidia are pushing their own proprietary components into this mix. We are seeing the COTS ideal being whittled away and hollowed out.

Nvidia has a BlueField-4 device on its roadmap, with 64 billion transistors and twice the bandwidth, at 800Gbps, of BlueField-3.