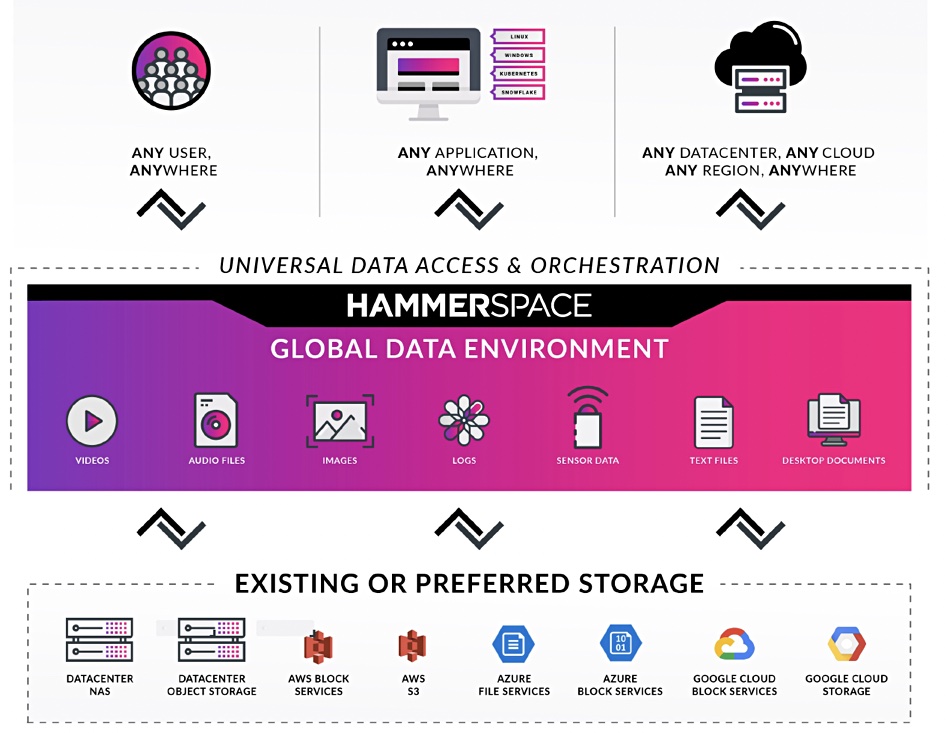

Take a deep breath. Hammerspace, the unstructured data silo-busting supplier, has raised its game to provide a Global Data Environment (GDE) across file, block and object storage on-premises and in public clouds with policy-driven automated data movement and services.

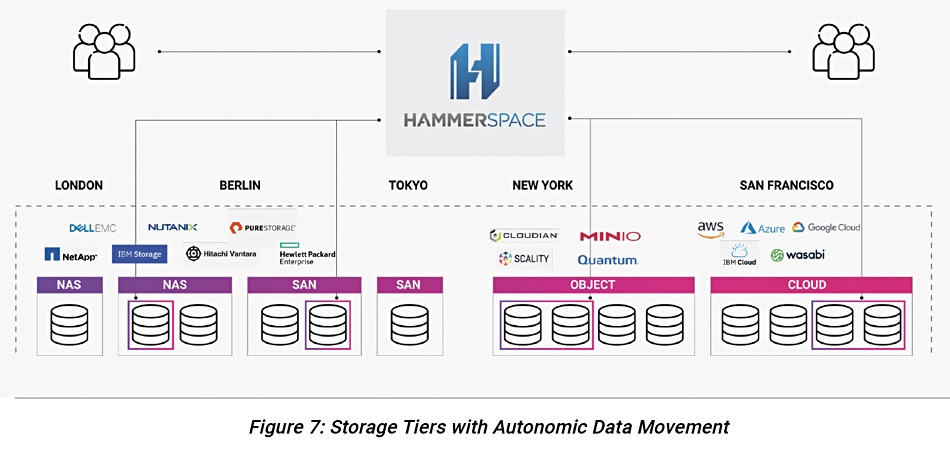

That’s quite a mouthful, and it means what it says. It builds on Hammerspace’s existing file and object metadata-based technology that unified distributed file and object silos into a single network-attached storage (NAS) resource. This can give applications on-demand access to unstructured data in on-premises private, hybrid or public clouds. The idea is to keep data access points updated with the GDE metadata so that all data is visible and can be accessed from anywhere.

CEO and founder David Flynn told Blocks and Files: “Data Gravity is central to the story. In effect, what we’re doing is elevating data to exist in an antigravity field able to access any infrastructure.”

Molly Presley, SVP marketing, said: “For years, I have spoken to customers in Media & Entertainment, Life Sciences, Research Computing and Enterprise IT that constantly struggled with sharing their file data with distributed users. They have tried to build their own solutions, they have tried to integrate multiple data movers, storage solutions, and metadata management solutions and never been satisfied with the results. Hammerspace took on this really tough innovation challenge and created an elegant, efficient, integrated solution.”

Global Data Environment

Hammerspace’s GDE can use existing storage resources, assimilating them, taking them over in effect.

An organisation’s stored data is then accessed, as it were, through a logical Hammerspace front end or gateway.

It’s this concept that lay behind its notion of storageless storage, espoused in December last year. Data, Flynn believes, should be free to move between block, file and object silos, wherever they are. We note that GDE is based on file metadata technology, and it will be interesting to find out how it interacts with block storage and volumes. For example, if files or objects are moved to block storage, do they become one volume or several? We can ask a similar question about block to file or object conversion.

Setting that aside, GDE supports persistent data in Kubernetes environments and treats clouds as regions, with cross-region access supported.

A layer of file-granular data services exists above the storage silo abstraction layer and includes snapshots, replication, file versioning, tiering with policy-driven data movement, deduplication, compression, encryption, WORM, delete and undelete. Policies can be created for any metadata attribute including entity type, name, creation and modification date/time, and owner. Snapshots can be recovered at file level and also at the fileshare level.
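To make the idea of metadata-driven policies concrete, here is a minimal Python sketch of how a placement decision could be derived from file attributes such as name, owner and modification time. It is an illustration only, not Hammerspace's policy language or API: the `FileMeta` and `Policy` types, the `place` function, the tier names and the first-match-wins rule are all assumptions made for this example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Callable, List


@dataclass
class FileMeta:
    """Hypothetical metadata record; fields mirror attributes named in the article."""
    name: str
    owner: str
    entity_type: str
    modified: datetime


@dataclass
class Policy:
    """Hypothetical policy: a predicate over metadata plus a target tier."""
    description: str
    matches: Callable[[FileMeta], bool]
    target_tier: str


def place(meta: FileMeta, policies: List[Policy],
          default_tier: str = "on-prem-nas") -> str:
    """Return the tier of the first matching policy (first match wins)."""
    for policy in policies:
        if policy.matches(meta):
            return policy.target_tier
    return default_tier


# Fixed reference time so the example is deterministic.
NOW = datetime(2021, 6, 1)

# Invented example policies and tier names.
POLICIES = [
    Policy("files untouched for 90 days move to cloud object storage",
           lambda m: NOW - m.modified > timedelta(days=90),
           "cloud-object"),
    Policy("render frames stay on fast local storage",
           lambda m: m.name.endswith(".exr"),
           "on-prem-flash"),
]
```

Calling `place(FileMeta("shot01.exr", "vfx", "file", datetime(2021, 5, 20)), POLICIES)` would select the hypothetical `on-prem-flash` tier, while the same file last modified a year earlier would match the age rule first and go to `cloud-object`. The point is only that the decision is taken from metadata, without reading the file's contents.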

Hammerspace will dedupe and compress when replicating or moving data over the WAN when data is stored on object storage. The Hammerspace software can run on bare metal servers, in virtual machines (ESX, Hyper-V, KVM and Nutanix AHV) and in the three main public clouds.

Access a Hammerspace GDE white paper here.

Universal and fast data access

Gradually, more and more single store-type environments are emerging. Ceph covers block, file and object. Unified block and filesystems get object support through S3. Suppliers, led by pioneering NetApp, are erecting data fabric structures covering the on-premises and public cloud environments. Public cloud suppliers are establishing on-premises beachheads, such as Amazon’s Outposts and Azure Stack.

Komprise and others are building multi-vendor, multi-site file lifecycle management systems. And now Hammerspace emerges with its comprehensive offering. As Flynn says: “We are talking about universal access to the data.”

He asks: “How can you have [data] local to this user, local to this application, local to this datacentre? To each and every one of them simultaneously, without ever copying the data, without it being a copy of the data? It’s a different instance of the same piece of data. And that’s in essence what we are doing by empowering metadata to be the manager of the data.”

Flynn aims to change the relationship between data and infrastructure: “Changing the relationship is done through intent-based orchestration, through granular orchestration, through live data orchestration. And nobody else does that, where you can have statements of objective, and have the system pre-position the data where you’re going to need it.”

Access has to be fast. “Your system has to be able to serve data, once it is positioned onto a specific locale. It has to be able to serve it in parallel, in the highest performance fashion.”

Flynn does not like the notion of an actual central data store. “If you look at other attempts to address globalising data and its access, invariably, all of them use the notion of a central silo, a big central object store. Then you stretch and put file access to where you use a central file or you stretch and you put caches in front of it. It almost makes [things] worse now, because you’re not only dependent on a centralised [store] but now each of your decentralised points become critical points of failure as well.”

Spinning up Hammerspace GDE

Flynn told us: “Hammerspace can be spun up at the click of a mouse through APIs. As a matter of fact, we have one of the major cloud vendors; actually, they did the work to automate the spin-up of Hammerspace. And have been positioning Hammerspace as the way to do multi-region, even within their cloud.” He wouldn’t say which public cloud this was.

“This, the ability to simply turn on Hammerspace, and have it stretch your data from on-prem into the cloud, or from one region to the cloud to another, through an API and in a matter of mere minutes, is extremely powerful.

“So this ends up being super simple to spin up through … scripting, a presence of your data, and you don’t even have to wait for the data to get there. Because the system replicates metadata, and the data comes granularly. And based on policy, so you’re not waiting for whole datasets to come.”
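Flynn's description of metadata arriving first, with data following granularly, can be sketched in a few lines of Python. This is a toy model under stated assumptions, not Hammerspace's implementation: the `Site` class, its methods and the in-memory dictionaries are invented for illustration, and a real system would also have to handle consistency, policy-driven pre-positioning and failures.

```python
from typing import Dict, List


class Site:
    """Hypothetical site (a datacentre or cloud region) in a toy global namespace."""

    def __init__(self, name: str) -> None:
        self.name = name
        # Metadata catalog: path -> site that currently holds the bytes.
        self.catalog: Dict[str, "Site"] = {}
        # Payloads that are physically present at this site.
        self.data: Dict[str, bytes] = {}
        # Number of payloads pulled from other sites so far.
        self.fetches = 0

    def write(self, path: str, payload: bytes) -> None:
        """Store a file locally and record it in the catalog."""
        self.data[path] = payload
        self.catalog[path] = self

    def replicate_metadata_to(self, other: "Site") -> None:
        """Copy only the catalog entries; no file contents are transferred."""
        other.catalog.update(self.catalog)

    def listdir(self) -> List[str]:
        """List every file visible at this site, whether or not its bytes are local."""
        return sorted(self.catalog)

    def read(self, path: str) -> bytes:
        """Return file contents, fetching that single file on first access."""
        if path not in self.data:
            origin = self.catalog[path]
            self.data[path] = origin.data[path]
            self.fetches += 1
        return self.data[path]
```

In this sketch, after `on_prem.replicate_metadata_to(cloud)` the cloud site can list every file immediately, but only the files that are actually read are copied across, one at a time. That is the sense in which a new site does not have to wait for a whole dataset to arrive.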

Customers

We suggested Hammerspace was asking a lot of its customers to trust Hammerspace with their data crown jewels.

Flynn agreed. “Yes, we are asking our clients to trust us to be their data layer. So in the end, they are subjugating all of the storage infrastructure to Hammerspace. And using that to automate, arguably, one of the most manual processes in the IT world: the placement and movement of data across different storage infrastructure. It’s kind of a sin. There’s nothing more digital than data. And yet, something in the most desperate need of digital automation is the, how we manage the mapping of data to the infrastructure.”

Customers have responded. “This is something where the company has made major strides in the past year [with] some very large organisations that have gone into production. And even in the seven figure deals, million dollar-plus range. And even at that, it’s still just a fraction of how far they want to grow. So we have three of the world’s largest telcos, some of the largest online game manufacturers, media and entertainment companies.”

How did Flynn know this was the right time to make a big push into the market?

“When we had one of these telcos I was talking about in production for nine months, continuous operation, zero downtime, running mission-critical applications, 24/7, 365 days a year, over 3,000 metadata ops per second. So you look [at that] as a startup guy, you’re looking for signs, and how do you know when you’re ready? It’s hard, right? Because if you go too soon, you can stub your toe. If you go too late you miss opportunity.

“As soon as I had not just that, but other proof points similar to it, we were there. … The first thing that we decided that we want to go after [is] the enterprise NAS world, and the need to move enterprise NAS workloads to the cloud.”

Partners and execs

So the software is ready, customers are interested and the time is ripe for launch. What next? Hammerspace is ramping up its go-to-market operation, having recently hired:

- Jim Choumas as VP channel sales;

- Chris Bowen as SVP global sales;

- Molly Presley as SVP marketing.

There’s a mass of related activity. Sales teams have been hired for the southern California media and entertainment market, high tech companies in northern California, and life sciences in the Boston area.

It’s set up a new partner program, Partnerspace, which integrates with the channel for 100 per cent of its customer engagements.

DataCore sells Hammerspace software as its vFilo product. We expect many more channel partners will be recruited.