NVIDIA, Dell and VMware have started a Project Monterey Early Access Program (EAP) so customers can explore whether servers that offload infrastructure tasks to NVIDIA’s BlueField-2 SmartNIC can run applications faster. If this goes well, other hypervisor and server suppliers will look to start Monterey-me-too programs.

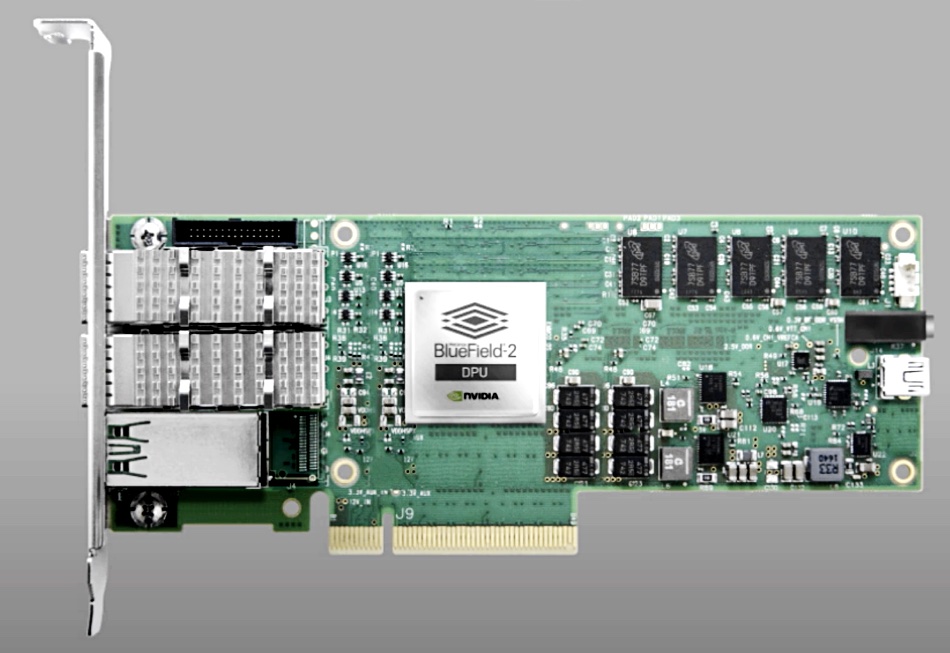

The EAP is based on Dell PowerEdge R750 servers fitted with BlueField-2 SmartNICs, which run VMware’s ESXi hypervisor on their own Arm CPU cores. The idea is that low-level data-centric tasks to do with hypervisors, networking, security and storage are executed on the BlueField-2 card rather than on the host server’s CPUs.

A blog by Motti Beck, Senior Director of Enterprise Market Development at NVIDIA Networking Mellanox, announced the EAP: “AI and other compute-intensive workloads require real-time data streaming analysis, which, along with growing security threats, puts a heavy load on server CPUs. The increased load significantly increases the percentage of processing power required to run tasks that aren’t an integral part of application workloads. This reduces data center efficiency and can prevent IT from meeting its service-level agreements.”

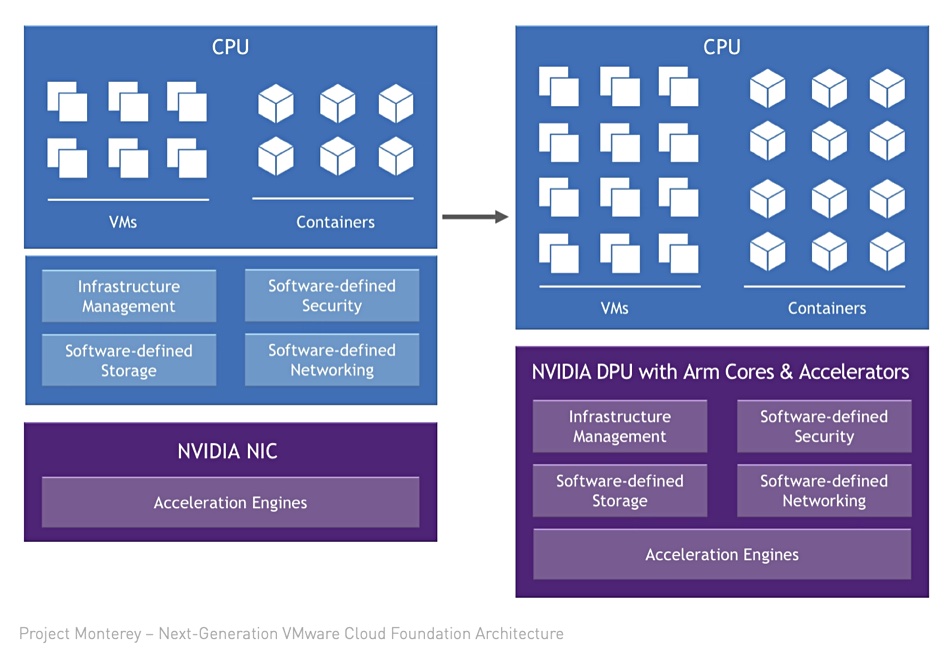

He provided a diagram showing a straightforward transfer of infrastructure management and software-defined security, storage and networking to BlueField-2, which NVIDIA calls a DPU (Data Processing Unit):

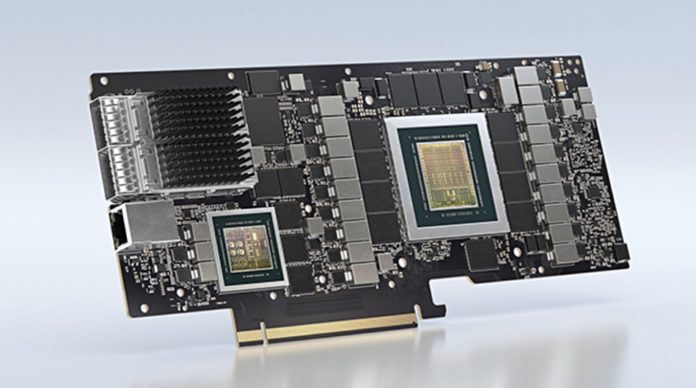

BlueField-2 is a Mellanox system-on-chip (SoC) card featuring an array of eight 64-bit Arm cores, a ConnectX-6 Dx ASIC network adapter, a PCIe Gen 4 x16 switch, and either two 25/50/100GbitE ports or one 200GbitE port. Its hardware acceleration engines provide IPsec and TLS cryptography, integrated RDMA and NVMe-oF acceleration, and data deduplication and compression.

EAP customers are being invited to reinvent a software-defined data center architecture based around BlueField-2 and VMware.

When Project Monterey was announced in September last year, four application areas were mentioned:

- Virtualizing disaggregated remote storage and presenting it as local composable storage pools;

- Provisioning bare metal servers for cloud service providers;

- End-point application isolation using micro-segmentation;

- Multiple application-specific firewalls for enhanced security.

Beck’s blog cites four general and somewhat vague benefits:

- Improved performance for application and infrastructure services;

- Enhanced visibility, application security and observability;

- Offloaded firewall capabilities;

- Improved data center efficiency and cost for enterprise, edge and cloud, with reduced deployment downtime.

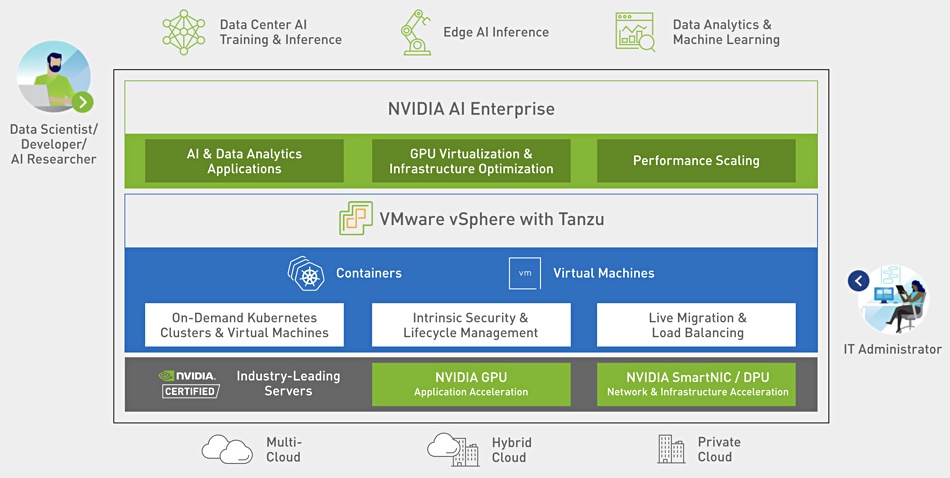

VMware’s role in this is partly to be a data and function on-ramp for NVIDIA’s GPUs and BlueField-2, as an NVIDIA graphic shows:

Interested parties can apply to join this EAP technical preview program on the NVIDIA Project Monterey website.

Comment

It will be interesting to see if VMware starts working with Fungible’s DPUs in a similar way. It will also be interesting to see whether other hypervisor suppliers check out what BlueField could do for them, the obvious examples being Nutanix with AHV, KVM and Red Hat Virtualization. If offloading server-based infrastructure management, networking, security and storage tasks to a SmartNIC-cum-DPU works for VMware, Dell and NVIDIA, then it should work equally well for other hypervisors and other server suppliers, not to mention other SmartNIC/DPU suppliers such as Fungible and Pensando.

A problem area is getting software loaded onto the SmartNIC and having the SmartNIC interoperate with host servers. An API library is probably being developed by NVIDIA and VMware to accomplish this.