Newly emerged Stellus Technologies has developed a high-performance data storage and processing system built from arrays of compute-in-storage SSDs. It provides real-time access, using key:value over an NVMe fabric, to hundreds of terabytes of unstructured data.

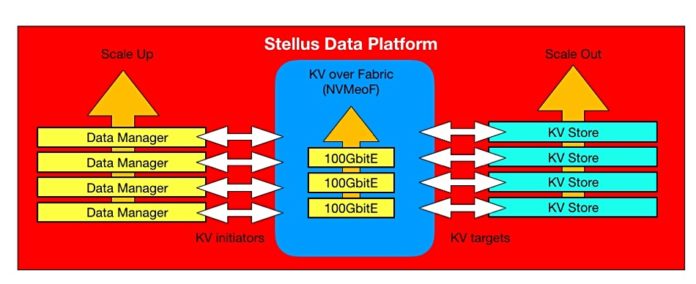

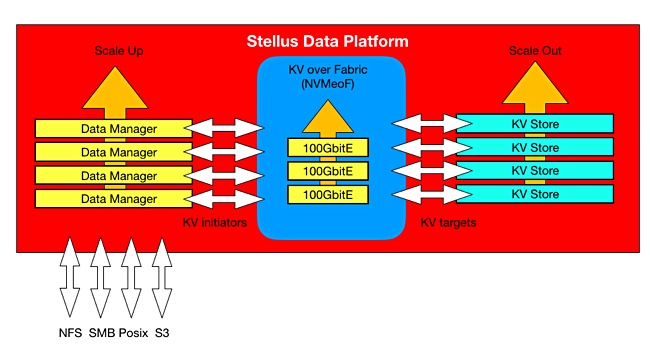

The firm said its Data Platform is a scale-up and scale-out system. Scaling up increases capacity, while scaling out adds storage server devices, meaning more throughput. Stellus calls scaling both at the same time scaling through.

A Stellus system, a software-defined, commodity off-the-shelf hardware storage system, can reach a guaranteed 80GB/sec on both reads and writes with near parity of read and write performance, and a latency not exceeding 500μs.

The company’s plan is to address those parts of the market that need such a high-performance file-based storage and processing system, because there is a data-generating explosion happening and legacy file systems can’t keep up. They were developed when files were stored on disk, not flash, and file populations were much smaller.

Stellus technology framework

Stellus said its memory-native file system uses SSDs to hold key:value stores. Its technology includes:

- M.2 SSDs,

- Memory-Native File System built on many key:value stores,

- Global namespace,

- NVMe-over-Fabrics, used to provide key:value over fabrics (KVoF) access,

- Algorithmic data locality with Stellus claiming its memory-native architecture removes performance limitations of global maps at scale,

- Stellus File system runs on-premises or in the cloud with no reliance on custom hardware and kernels,

- Multi-tenancy,

- Cloud connectivity,

- Security features,

- Erasure coding.

KV stores

A key is a number or string. In the Stellus product it is converted to a hash value which maps to a storage address where the value is stored.

The value can be a string or a structured object: a list, a JSON object, an XML description, or something else, even keys to other values.

Key-value stores use get, put, and delete IO operations, which are object storage operations. Stellus SVP and chief revenue officer Ken Grohe writes that a KV store is a database containing KV pairs. The key identifies the value and the value is stored as a Binary Large Object (BLOB).
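To make the get, put and delete model concrete, here is a minimal Python sketch of a key:value store that hashes each key to a slot standing in for a storage address. It is an illustration only, not Stellus code: the class name `ToyKVStore`, the slot count and the choice of SHA-256 are all assumptions.

```python
import hashlib


class ToyKVStore:
    """Toy key:value store (hypothetical): hash the key to a slot, store a blob there."""

    def __init__(self, num_slots=1024):
        self.num_slots = num_slots
        # Each slot stands in for a storage address; colliding keys share a slot.
        self.slots = [{} for _ in range(num_slots)]

    def _address(self, key):
        # Keys may be numbers or strings; repr() keeps 1 and "1" distinct.
        digest = hashlib.sha256(repr(key).encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big") % self.num_slots

    def put(self, key, value: bytes) -> None:
        self.slots[self._address(key)][repr(key)] = value

    def get(self, key):
        # Returns the stored blob, or None if the key is absent.
        return self.slots[self._address(key)].get(repr(key))

    def delete(self, key) -> bool:
        # Returns True if a value was removed, False if the key was absent.
        return self.slots[self._address(key)].pop(repr(key), None) is not None


store = ToyKVStore()
store.put("frame-0001", b"raw pixel data")
store.put(42, b"value stored under a numeric key")
```

The value is handled as an opaque byte string throughout, which mirrors the BLOB description above; a real store would add persistence, sizing and concurrency control.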

Any existing filer basically takes in files, breaks them up into chunks (if they’re big), and stores the chunks across a range of blocks in SSDs or disks. The fast ones all use flash for fast access now. The filer controller keeps a map that indexes logical file data to physical SSD block data.

Stellus inserts a KV store layer between the logical file data and the SSD blocks. It is betting its scale-out farm that this results in faster access to hundreds of terabytes of file data than the traditional filer controller-drive shelf method. ESG testing and pre-release customer evaluations have shown that it is faster than legacy filer systems.
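One way to picture a KV layer sitting between logical file data and storage is to key each file chunk by file identifier and chunk index. The Python sketch below does that with a plain dictionary. The key format, the tiny chunk size and the function names are assumptions made for illustration; Stellus has not published its on-disk layout.

```python
CHUNK_SIZE = 4  # bytes; deliberately tiny so the example is easy to follow (assumption)


def file_to_kv_pairs(file_id, data, chunk_size=CHUNK_SIZE):
    """Split file bytes into chunks and key each chunk as '<file_id>:<index>'."""
    pairs = {}
    for index, offset in enumerate(range(0, len(data), chunk_size)):
        pairs[f"{file_id}:{index}"] = data[offset:offset + chunk_size]
    return pairs


def kv_pairs_to_file(file_id, pairs):
    """Reassemble a file by reading chunk keys in index order until one is missing."""
    chunks = []
    index = 0
    while f"{file_id}:{index}" in pairs:
        chunks.append(pairs[f"{file_id}:{index}"])
        index += 1
    return b"".join(chunks)


pairs = file_to_kv_pairs("clip.mov", b"abcdefghij")
restored = kv_pairs_to_file("clip.mov", pairs)
```

Because every chunk has its own key, chunks of one file can be fetched independently, which is what lets such a layer spread reads across many stores.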

Data locality is worked out using algorithms rather than having a global map across the cluster. This removes the need to keep the clustered nodes consistently synchronised with such a map, saving CPU and DRAM resources. Such synchronisation becomes more and more difficult as the number of nodes increases.
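Stellus has not said which algorithm it uses. As a generic illustration of computing a location instead of looking it up in a shared map, the Python sketch below uses rendezvous (highest-random-weight) hashing, a well-known technique we have swapped in for the purpose. The node names and function names are hypothetical.

```python
import hashlib


def _score(key, node):
    # Hash the (node, key) pair; any node can compute this with no shared state.
    digest = hashlib.sha256(f"{node}|{key}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big")


def locate(key, nodes):
    """Return the node with the highest hash score for this key."""
    return max(nodes, key=lambda node: _score(key, node))


nodes = ["kv-1", "kv-2", "kv-3"]  # hypothetical KV store node names
keys = [f"file-{i}" for i in range(200)]
before = {k: locate(k, nodes) for k in keys}
after = {k: locate(k, nodes + ["kv-4"]) for k in keys}
# Keys whose placement changed when kv-4 joined the cluster.
moved = [k for k in keys if before[k] != after[k]]
```

Every client that knows the node list arrives at the same answer independently, so there is no map to synchronise. With this technique, adding a node only moves the keys that now score highest on the new node.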

The Stellus software runs in user space and needs no Linux kernel hacking/customisation or customised hardware.

Accelerated performance

The firm’s proposition is that key-value stores replace traditional block storage for unstructured data. They remove the need for complex global maps and caching and deliver consistently fast performance.

A Stellus spokesperson said: “We can provide really great performance with variable size objects with no need for things like multi-level caches.” The company talks of an up to 800 per cent performance boost compared to existing systems, which we understand to mean Isilon systems using capacity nodes.

Hardware

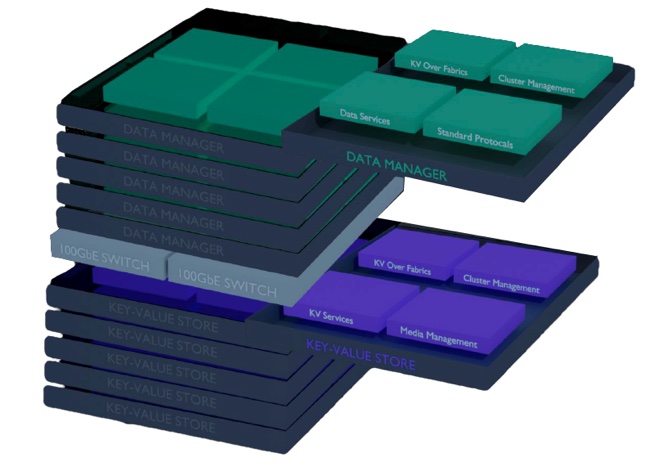

The first Stellus Data Platform systems, the 220, 420, 440, and 880, are distributed, share-everything clusters, with two types of node: Data Managers and KV Stores, plus 100Gbit/s Ethernet switching interconnecting them.

Data Manager nodes are stateless 1U rack enclosures that form a protocol layer. Each has four components:

- Data Services,

- Standard Protocols,

- KV over Fabrics (KVoF),

- Cluster Manager.

Key Value Store (KV Store) nodes, also 1U rack enclosures with M.2 format SSDs inside, again have four functional components:

- KV services,

- Media Management,

- Cluster Management,

- KVoF.

Each Data Manager can see each KV store. There can be up to 4 KV stores per SSD and up to 32 x M.2 8TB hot-swap SSDs in a node, which provides, Stellus says, massive parallelism, taking advantage of NVMe queues.

The base 220 system is 5U in size with 2 x Data Managers and 2 x KV Stores (24 x 8TB M.2 drives) plus Ethernet switch. It has 184TB of raw capacity. The 420 has 4 x Data Managers and offers 184TB raw. A 440 has 4 x Data Managers and 4 x KV Stores (369TB). An 880 has eight of each node type and 1.475PB of raw capacity in its 17U of rack space.

The largest system, the 880, is 17U in size with 8 x Data Manager nodes and 8 x KV Store nodes plus a 100GbitE 1U switching shelf. It has 1.475PB of raw capacity.
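The quoted raw capacities are not exact multiples of 8TB. As a rough sanity check, the Python sketch below assumes each nominal 8TB drive contributes 7.68TB, a common SSD capacity point. That figure is our assumption, not one Stellus has stated, and the drive counts it yields are inferred, not confirmed.

```python
DRIVE_TB = 7.68  # assumption: raw capacity of a nominal "8TB" SSD; not stated by Stellus

# Raw capacities as quoted above, in TB.
stated_raw_tb = {"220": 184, "420": 184, "440": 369, "880": 1475}


def implied_drive_count(raw_tb, drive_tb=DRIVE_TB):
    """Number of drives implied by a quoted raw capacity, to the nearest whole drive."""
    return round(raw_tb / drive_tb)


implied = {model: implied_drive_count(tb) for model, tb in stated_raw_tb.items()}

# Quoted maximums per KV Store node: up to 4 KV stores per SSD, up to 32 SSDs.
MAX_KV_STORES_PER_NODE = 4 * 32
```

Under that assumption the figures come out at 24 drives for the 220 and 420, 48 for the 440 and 192 for the 880, and a fully populated node could host up to 128 KV stores.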

Stellus provides a cloud-based management facility and its SW can run in the cloud, providing a hybrid on-premises-public cloud workspace.

This is a software-defined system and it is not tied to any branded hardware instantiation. Over time Stellus will provide a hardware compatibility list (HCL). It also supports U.2 format drives.

We can relate this set-up to a standard dual-controller array by likening the Stellus Data Platform to a multi-controller (scale-up Data Managers) array with scale-out capacity shelves (nodes – KV stores) accessed across an internal NVMe fabric.

The data services include data placement, protection (snapshotting), erasure coding and the initiator side of the KVoF. The standard external access protocols are POSIX, NFS 3 and 4, SMB, S3 and streaming protocols.

This standard protocol support should make it straightforward for Stellus kit to fit into customers’ existing workflows.

Drive development

Stellus said the data path uses standard hot-swap M.2 SSDs today, and is ready for new drive types, meaning key:value SSDs and then Ethernet-connected KV-SSDs.

A Grohe blog states: “Samsung … has developed an open standard prototype key-value SSD and is working to help productize it. A key-value SSD implements an object-like storage scheme on the drive instead of reading and writing data blocks as requested by a host server or storage array controller. In effect, the drive has an Object Translation Layer, which converts between object key-value pairs and the native blocks of the SSD.”

What this means is the KV Stores will be able to move intelligence down to the component drives. It is our understanding that Samsung, and possibly other SSD suppliers, are sampling or about to sample KV SSDs.
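The translation-layer idea can be sketched as a toy drive that accepts key:value pairs and maps each one onto fixed-size native blocks internally. This is a conceptual Python model only. The class `ToyKVDrive`, the 4,096-byte block size and the allocation scheme are assumptions, and it does not represent Samsung's design or any real drive interface.

```python
BLOCK_SIZE = 4096  # bytes per native block (assumption)


class ToyKVDrive:
    """Hypothetical drive model: key:value pairs outside, fixed-size blocks inside."""

    def __init__(self, num_blocks=256):
        self.blocks = [b""] * num_blocks      # native fixed-size blocks
        self.free = list(range(num_blocks))   # unallocated block numbers
        self.table = {}                       # key -> (block numbers, value length)

    def put(self, key, value: bytes) -> None:
        needed = max(1, -(-len(value) // BLOCK_SIZE))  # ceiling division
        reusable = len(self.table[key][0]) if key in self.table else 0
        if needed > len(self.free) + reusable:
            raise OSError("drive full")
        self.delete(key)  # release any blocks held by an older value
        used = [self.free.pop() for _ in range(needed)]
        for i, block_no in enumerate(used):
            self.blocks[block_no] = value[i * BLOCK_SIZE:(i + 1) * BLOCK_SIZE]
        self.table[key] = (used, len(value))

    def get(self, key):
        entry = self.table.get(key)
        if entry is None:
            return None
        used, length = entry
        return b"".join(self.blocks[n] for n in used)[:length]

    def delete(self, key) -> bool:
        entry = self.table.pop(key, None)
        if entry is None:
            return False
        self.free.extend(entry[0])  # return the blocks to the free list
        return True


drive = ToyKVDrive(num_blocks=4)
drive.put(b"clip", b"x" * 5000)  # 5,000 bytes spans two 4,096-byte blocks
```

The host never sees block numbers here: it only issues put, get and delete, and the key-to-block table lives inside the drive model.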

We could envisage a future iteration of the Stellus product with tiers of solid state drives. For example, storage-class memory, fast SSD, medium speed SSD, capacity SSD. Particular virtual KV stores could be optimised for, and placed on, specific types of drive to use them optimally.

ESG performance validation

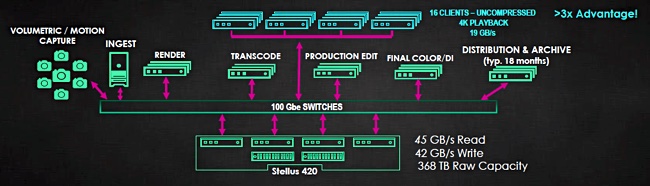

ESG has validated the Stellus system’s performance, with a report stating: “We started up 32 simultaneous uncompressed 4K video streams. … the video was playing smoothly on all clients without stutter, jitter, or dropped frames.” The playback rate was 34.6GB/sec from a 420 system comprising 4 x Data Managers and 2 x KV Stores plus Ethernet switch.

A 420 system supported 45GB/sec reads to a single client with multiple threads running (FIO benchmark). A 440 system (4 x Data Managers + 4 x KV Stores) supported 41.3GB/sec writes to the same client with a 100 per cent write workload. ESG said: “Both the read and write performance in this test exceeded Stellus’ guidance for performance of the SDP-420 and 440 which is 40GB/sec.”

ESG said: “Response time across all tests never exceeded 500μs,” and: “Delivering 41.3GB/sec write and 45.1GB/sec read throughput [is] the fastest we have ever seen for a file storage system.”
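For a sense of scale, the short Python calculation below works from the ESG figures quoted above: the average rate per video stream, and how far the measured read and write throughput sits above the 40GB/sec guidance. The variable names are ours, and the per-stream value is a simple average, not a number ESG reported.

```python
# Figures as quoted from the ESG report.
playback_gb_per_sec = 34.6
streams = 32
guidance_gb_per_sec = 40.0
read_gb_per_sec = 45.1
write_gb_per_sec = 41.3

# Average throughput per 4K stream (simple division; our derivation).
per_stream_gb_per_sec = playback_gb_per_sec / streams

# Percentage by which measured throughput exceeds Stellus' guidance.
read_margin_pct = (read_gb_per_sec / guidance_gb_per_sec - 1) * 100
write_margin_pct = (write_gb_per_sec / guidance_gb_per_sec - 1) * 100

print(f"{per_stream_gb_per_sec:.2f} GB/sec per stream")
print(f"read +{read_margin_pct:.2f}%, write +{write_margin_pct:.2f}% over guidance")
```

That works out to a little over 1GB/sec per stream, with reads roughly 13 per cent and writes roughly 3 per cent above guidance.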

Application examples

A Stellus diagram shows a 420 system supporting an entertainment and media environment, covering all stages from initial motion-capture and camera ingest through to distribution and archive:

A customer could be working on multiple projects at once, with the Stellus system receiving IO requests from each stage in the workflow across the projects at the same time.

A Stellus spokesperson said Isilon can typically support 4 x concurrent 4K streams. Grohe said: “We aim to do to Isilon what Isilon did to NetApp.”

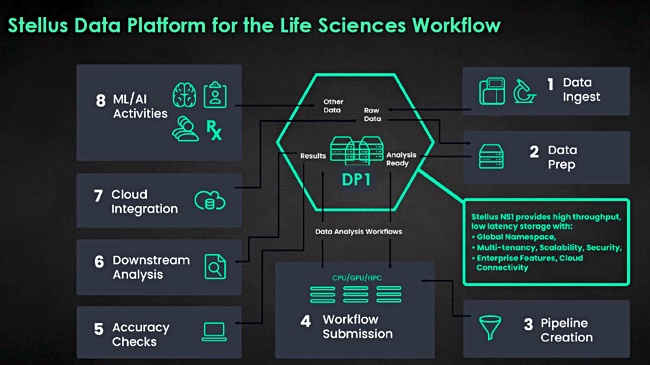

A life sciences environment and workflow uses a Stellus system covering workflow stages from data ingest, data preparation, pipeline creation, workflow submission, accuracy checks, downstream analysis, cloud integration and AI/ML activities.

Stellus said its system can sequence 150 to 500 genomes/day. It said Isilon-based systems can sequence up to 150 genomes a day, giving it a claimed better than 3x advantage over the Dell EMC system.

Stellus factfile

Stellus is a Samsung arm’s-length subsidiary based in San Jose. It was initiated in 2015 with Samsung viewing it as an internal startup, and providing funding; we don’t know how much. Its CEO is Jeff Treuhaft, ex-Fusion-io where he was an EVP for products. The thinking was that Samsung couldn’t develop such a HW/SW flash array system internally, as it is a fabrication business focused on manufacturing millions of smallish devices/month, meaning DRAM and NAND chips and SSDs.

So it funded Stellus Technologies as a separate company with its own board. There are some 90 employees, mostly in California, and it has been careful to stay in stealth mode since 2015. Some 17 patent applications have been made. Technology eco-system partners include Nvidia (GPUs) and Arista (network switches). Several very large companies have been looking at and evaluating the product: Apple, Disney, DreamWorks, the Federal Government, Optum, and Technicolor.

Ex-Sun boss Scott McNealy is on the board as is ex-NetApp CTO Jay Kidd.

Blocks & Files view

As one NAND chip and SSD manufacturer, Western Digital, exits the data storage array business having failed to achieve a successful outcome, Samsung, via Stellus, is trying to enter it. And instead of building a general-purpose fast-action filer, it’s building one specifically for use in the complex unstructured data workflows that are found in entertainment and media, life sciences and the like.

It is not looking at Big Data analytics nor at general high-performance computing (HPC).

Stellus is taking on the usual fast filer suspects – Isilon, NetApp, Quantum (StorNext), Qumulo and WekaIO, and also Excelero plus IBM GPFS systems, and ones using Intel DAOS to the extent that they are used in the target markets. It is clearly going to need a channel of vertical market-aware partners and all of the potential partners will already be selling the competing suppliers’ kit. Channel recruitment will be an important activity.

To make progress, the Stellus hot box product has to be significantly faster and more cost-efficient than the competition, which all use flash too, and as reliable. The performance and efficiency of the KV stores will be … key.

Chief Revenue Officer Ken Grohe and his team have a real job of work to do now, in establishing a new product, new technology and new vendor in a hotly-contested market. Turning Stellus into a sales star is a big ask.