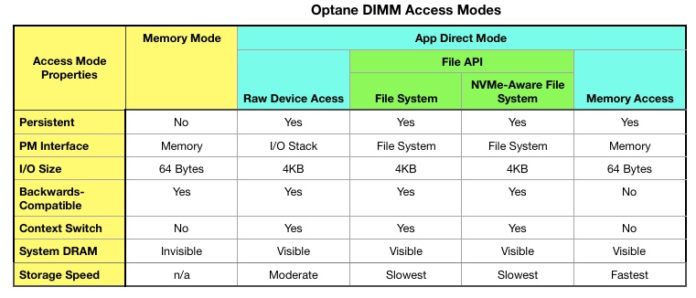

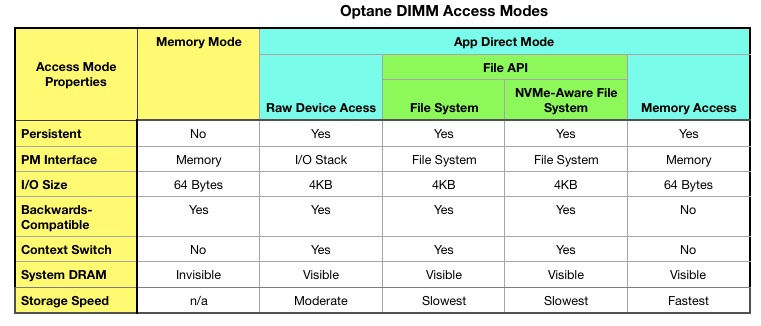

Intel’s Optane DIMMs can be accessed in five different ways, each of which has its advantages and disadvantages. We paint a broad-brush picture of the five here to show what’s involved and how the modes differ.

The five modes make the decision of whether or not to use Optane DIMMs in a server far more complex than a binary yes or no choice.

Optane can also be implemented as a faster-than-NAND SSD, which gives six Optane access methods in total. This article is largely based on four blog posts by Jim Handy of Objective Analysis, which start with an overview. For more detailed information, study the four Handy posts.

But first, an Optane recap.

Optane

Optane is Intel’s brand name for devices built using 3D XPoint media. This is an implementation of Phase-Change Memory in which an electrical current is used to change the state of a Chalcogenide glass material from crystalline to amorphous and back again. The two states have different resistance levels and these are used to signal binary ones and zeroes. Each state is persistent or non-volatile.

This is why Optane is called persistent or storage-class memory (SCM).

The XPoint media is fabricated in cells which are laid out in a 2-layer crosspoint array. Access time is faster than NAND flash but slower than DRAM, with writes taking about three times longer than reads.

Optane can be implemented as a memory bus-connected DIMM or as an NVMe-connected SSD.

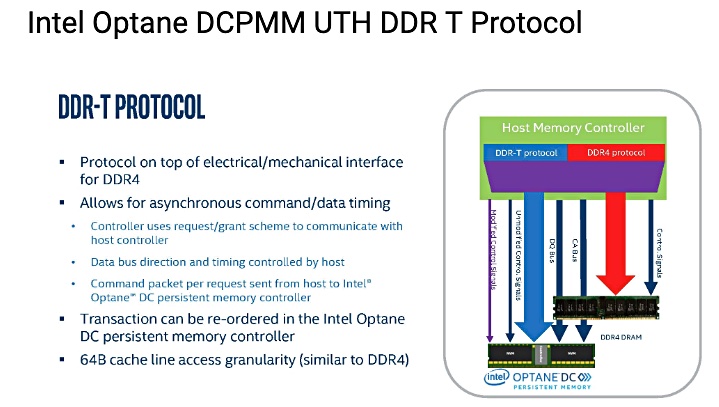

When connected as a DIMM, Optane is supported only by certain 2nd-Generation Intel Xeon Scalable processors: the ones using Intel’s proprietary DDR-T protocol. This complies with standard DDR4 but adds Optane DIMM management commands.

Speed limits

An Optane DIMM read can take 350 nanoseconds on average with the write latency averaging 1,050ns. For comparison and using generic example numbers, we present this list:

- DDR4 memory accesses – 14ns

- Optane DIMM – 350ns

- NVMe Optane SSD access – 10,000ns (10μs)

- NVMe NAND SSD write – 30,000ns (30μs)

- NVMe NAND SSD read – 120,000ns (120μs)

- SATA NAND SSD read – 500,000ns (500μs or 0.5ms)

- SATA NAND SSD write – 3,000,000ns (3,000μs or 3ms)

- Disk drive seek – 100,000,000ns (100,000μs or 100ms)
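To make the gaps between these tiers easier to see, the short sketch below expresses each figure as a multiple of the DDR4 number. The values are copied from the list above and are generic examples, not measurements; the dictionary labels and the `slowdown_vs_dram` function name are ours, and we label the Optane DIMM entry as a read because 350ns is the average read latency quoted earlier.

```python
# Latency figures in nanoseconds, copied from the list above (generic examples).
LATENCY_NS = {
    "DDR4 memory access": 14,
    "Optane DIMM read": 350,
    "NVMe Optane SSD access": 10_000,
    "NVMe NAND SSD write": 30_000,
    "NVMe NAND SSD read": 120_000,
    "SATA NAND SSD read": 500_000,
    "SATA NAND SSD write": 3_000_000,
    "Disk drive seek": 100_000_000,
}


def slowdown_vs_dram(latencies):
    """Return each latency as a multiple of the DDR4 figure."""
    base = latencies["DDR4 memory access"]
    return {name: ns / base for name, ns in latencies.items()}


if __name__ == "__main__":
    for name, factor in slowdown_vs_dram(LATENCY_NS).items():
        print(f"{name:<24} {factor:>12,.0f}x")
```

On these numbers an Optane DIMM read is 25 times slower than DRAM, while an NVMe Optane SSD access is roughly 29 times slower again than the DIMM.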

The access times for DIMMs can involve operating system (OS) IO software stacks, while those for SSDs will have added latency from the interconnect type and device controller.

There are different ways to access an Optane DIMM and each mode has its own speed. Intel has not published the numbers but the relative speeds can be inferred from the access mode characteristics.

We’ll look at the different access modes and try to position them against one another.

A complicating factor is that different parts of an Optane DIMM address space can be set apart and accessed in different modes. We’ll save that discussion for another article.

Optane SSD Access

The Optane SSD is accessed in the same way as any other NVMe SSD but returns read data or accepts write data faster than NVMe-connected NAND SSDs. There’s no need to say anything else and we can move straight on to the DIMMs.

Optane DIMM Access

An Optane DIMM can be accessed in five different ways, starting with either Memory Mode or App Direct Mode, also known as DAX for Direct Access Mode. Memory Mode is block-addressable while DAX is byte-addressable.

DAX has three options: Raw Device Access, access via a File API, or Memory Access. The File API method has two sub-options: via a traditional File System or via a Non-volatile Memory-aware (NVM-aware) File System.

Memory Mode

In Memory Mode the Optane DIMM is paired with a DRAM cache and the host system gets to indirectly use the Optane DIMM’s capacity as memory, with the front-end cache providing DRAM speed for both reads and writes. Since Optane writes are 3x slower than Optane reads, this is important in keeping system speed high.
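As a rough illustration of why the cache matters, the sketch below computes an average read latency as a weighted mix of DRAM hits and Optane misses. It is a back-of-envelope model under our own assumptions, not Intel’s: the hit rates are hypothetical, a miss is charged only the 350ns Optane read figure quoted earlier, and cache lookup and fill overheads are ignored. The function name `average_read_latency_ns` is ours.

```python
DRAM_NS = 14          # DDR4 example figure from the latency list
OPTANE_READ_NS = 350  # average Optane DIMM read latency quoted earlier


def average_read_latency_ns(hit_rate, hit_ns=DRAM_NS, miss_ns=OPTANE_READ_NS):
    """Weighted average of cache-hit and cache-miss read latency.

    Simplifying assumption: a miss costs exactly one Optane read and a
    hit costs exactly one DRAM access.
    """
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * hit_ns + (1.0 - hit_rate) * miss_ns


if __name__ == "__main__":
    # Hypothetical hit rates, chosen only to show the trend.
    for rate in (0.50, 0.90, 0.99):
        print(f"hit rate {rate:.0%}: {average_read_latency_ns(rate):.1f} ns")
```

Under this simple model the average stays close to DRAM speed only when the large majority of accesses hit the cache.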

Optane DIMM capacity is cheaper than DRAM DIMM capacity, so this is an effective way to increase the memory capacity of a server.

However, DRAM cache contents are lost if system power is lost. The host OS does not know which cache contents were written to the Optane DIMM from the DRAM cache, and so the entirety of the Optane DIMM’s data contents are viewed as unreliable.

So, although Optane is persistent memory, Memory Mode writes are not persistent, while all the DAX modes are persistent.

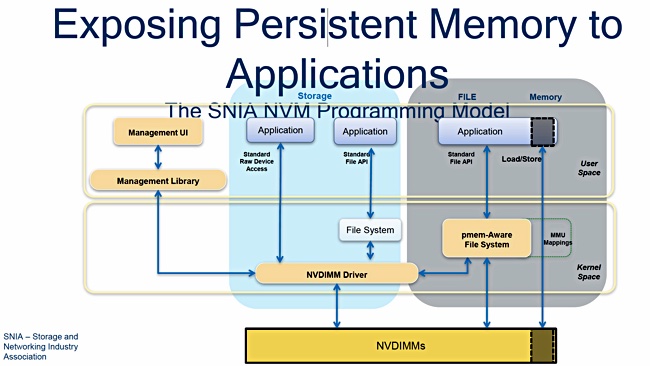

The DIMM application access modes, apart from Intel’s Memory Mode, are positioned in an SNIA Persistent Memory programming model diagram.

The other, application direct, modes involve application code being re-written to use Optane DIMMs.

App Direct Mode – Raw Device Access

In this mode the application program reads and writes directly to the Optane DIMM driver in the host OS, which, in turn, accesses the Optane DIMM. This is faster than going through a file system interface. It is not as direct, or therefore as fast, as Memory Access in which application reads and writes go straight to the DIMM without any interruption.

As we understand it, in Raw Device Mode the Optane DIMM address space is arranged into blocks of 512 bytes or 4KB in size. Reads and writes are at the block level and pass through an NVDIMM driver. This mode works with current file systems.
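One consequence of block-level access is that changing a few bytes means reading, modifying and rewriting whole blocks. The sketch below illustrates that read-modify-write pattern. It is only an illustration under our own assumptions: an ordinary temporary file stands in for the device, the helper name `write_bytes_blockwise` is ours, and nothing here talks to a real NVDIMM driver. It uses `os.pread` and `os.pwrite`, which are available on Unix-like systems.

```python
import os
import tempfile

BLOCK_SIZE = 4096  # one of the two block sizes mentioned above


def write_bytes_blockwise(fd, offset, data, block_size=BLOCK_SIZE):
    """Update `data` at `offset` using only whole-block reads and writes.

    Returns the number of blocks that had to be read and rewritten.
    """
    if not data:
        return 0
    first = offset // block_size
    last = (offset + len(data) - 1) // block_size
    start = first * block_size
    length = (last - first + 1) * block_size
    # Read the covering blocks, padding with zeros past end of file.
    buf = bytearray(os.pread(fd, length, start).ljust(length, b"\0"))
    rel = offset - start
    buf[rel:rel + len(data)] = data
    os.pwrite(fd, bytes(buf), start)
    return last - first + 1


if __name__ == "__main__":
    # A temporary file stands in for the block device in this sketch.
    fd, path = tempfile.mkstemp()
    try:
        os.pwrite(fd, bytes(2 * BLOCK_SIZE), 0)
        touched = write_bytes_blockwise(fd, BLOCK_SIZE - 2, b"abcd")
        print(f"4-byte update touched {touched} blocks")
    finally:
        os.close(fd)
        os.unlink(path)
```

In the demo a four-byte update that straddles a block boundary forces two full 4KB blocks to be rewritten, which is the kind of overhead byte-addressable access avoids.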

App Direct Mode – File System

In this mode the Optane DIMM address space is accessed by an application issuing file IO calls using a filesystem API. These are dealt with by the filesystem code which then talks to the NVDIMM driver and, via that, to the Optane DIMM. It takes time for the file system to do its work and thus this kind of access is slower than both raw device access and memory access.
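From the application’s side this mode looks like ordinary file IO, which is why existing code can use it. The minimal sketch below shows the pattern: write through the file system API, then ask the OS to flush the data to the underlying device. The function names are ours, and the demo writes to a temporary directory; on a real system the path would point at a file system built on the Optane DIMM, which we do not show.

```python
import os
import tempfile


def save_record(path, payload):
    """Write payload through the file system API and flush it to the device."""
    with open(path, "wb") as f:
        f.write(payload)
        f.flush()              # push Python's buffer to the OS
        os.fsync(f.fileno())   # ask the OS to push it to the device


def load_record(path):
    """Read the whole file back through the file system API."""
    with open(path, "rb") as f:
        return f.read()


if __name__ == "__main__":
    # A temporary directory stands in for a mount point in this sketch.
    with tempfile.TemporaryDirectory() as directory:
        target = os.path.join(directory, "record.bin")
        save_record(target, b"persistent payload")
        print(load_record(target))
```

Every call here passes through the file system code before reaching the driver, which is the extra work the article describes.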

App Direct Mode – NVM-aware File System

This modifies the file system access to involve an NVM-aware file system; pmem-aware in the SNIA diagram above. According to Handy, such NVM-aware file systems are designed to run faster than a traditional file system.

There is no benchmark data available to demonstrate this.

App Direct Mode – Memory Access

With this mode an application uses memory semantics, meaning load and store instructions, to directly access the Optane DIMM’s address space with no intervening entities getting in the way. This is the fastest possible way an application can use Optane.
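The nearest everyday analogue to this style of access is a memory-mapped file: once the mapping exists, the application reads and writes bytes in its own address space instead of issuing read and write calls. The sketch below shows the shape of that using Python’s standard `mmap` module. It is an approximation under our own assumptions: the demo maps an ordinary temporary file, the helper name `open_mapping` is ours, and Python slice assignment is a memory copy rather than a single store instruction. On a real system the file would live on a DAX-capable file system on the Optane DIMM, which is not shown here.

```python
import mmap
import os
import tempfile


def open_mapping(path, size):
    """Create (or open) a file of `size` bytes and map it into memory."""
    fd = os.open(path, os.O_RDWR | os.O_CREAT, 0o600)
    try:
        os.ftruncate(fd, size)
        # mmap keeps its own duplicate of the descriptor, so fd can be closed.
        return mmap.mmap(fd, size)
    finally:
        os.close(fd)


if __name__ == "__main__":
    # A temporary directory stands in for a persistent-memory mount point.
    with tempfile.TemporaryDirectory() as directory:
        path = os.path.join(directory, "example.bin")

        mapping = open_mapping(path, 4096)
        mapping[128:133] = b"hello"   # "store": write bytes at an address
        mapping.flush()               # ask the OS to write the mapping back
        mapping.close()

        mapping = open_mapping(path, 4096)
        print(bytes(mapping[128:133]))  # "load": read the same bytes back
        mapping.close()
```

Note that the update is addressed at byte granularity, with no block boundaries and no file IO call per access.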

A Handy table

Handy sums up the access methods in a table, which we have reformatted slightly:

Concerning the “Backward compatibility” row – Memory Mode operates with all legacy software, as do all of the App Direct Mode access types except direct memory access.

Developers can access an Intel Persistent Memory Developers’ Kit to dig deeper into Optane DIMM access.

My head hurts

Does Optane DIMM use have to be so complicated? The short answer is yes, because storage can be accessed at block level and at file level. As Optane combines both memory and storage attributes it offers both memory-level and storage-level accesses.

Other forms of storage-class memory will, we understand, offer the same broad group of access modes, unless their supplier artificially limits them in some way.

Hopefully, any supplier offering SCM apart from Intel does so using open interfaces or, at least, provides interfaces for both AMD and ARM. Micron, for example, could enable QuantX 3D XPoint DIMMs to be usable on both AMD and Intel x86 processors as well as ARM CPUs.

That should prompt an immediate Intel Optane DIMM price cut, and also widen the SCM developer ecosystem and make more SCM-capable software available.