SoftIron has announced three Ceph-based storage systems – an upgraded performance storage node, an enhanced management system and a front-end access or storage router box.

Ceph is open source storage software that supports block, file and object access, and SoftIron builds scale-out HyperDrive (HD) storage nodes for Ceph. These are 1U enclosures, with an Arm64 CPU controlling a set of disk drives or SSDs, and SoftIron claims they can outperform dual-Xeon commodity hardware (see below). There are Value, Density and Performance versions.

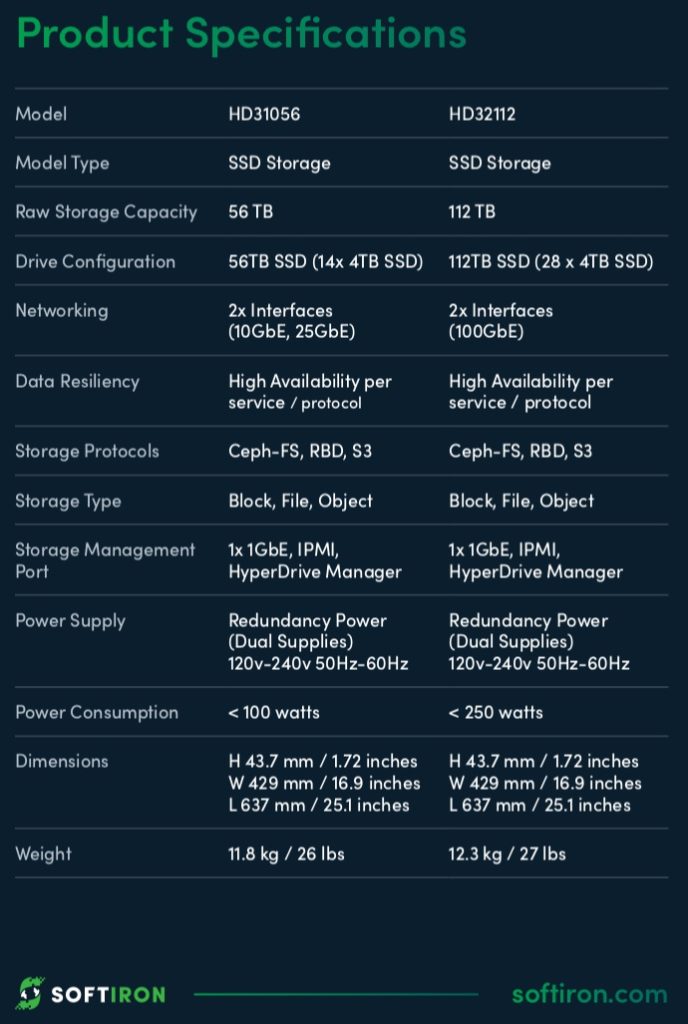

The Value system (HD11048) has 48TB of disk-based storage using 8 drives. The Density system (HD11120) has 120TB of disk capacity while the Performance variant has 56TB of SSD capacity (HD31056), using 14 x 4TB SSDs.

SoftIron’s existing Performance node is a single processor system. The new HD32112 Performance Multi-Processor node has two CPUs and 28 x 4TB SSDs, totalling 112TB. This is twice the capacity of the first Performance system.
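The capacity figures above can be checked with a few lines of arithmetic. This is a minimal sketch using only the drive counts and sizes quoted in the text; the `raw_capacity_tb` helper is a hypothetical name introduced for illustration, and the 6TB-per-drive figure for the Value node is an assumption derived from 48TB across 8 drives.

```python
def raw_capacity_tb(drive_count: int, drive_tb: int) -> int:
    """Raw (pre-replication) capacity in TB for a node with identical drives."""
    return drive_count * drive_tb

# Drive counts and sizes as quoted in the article.
# The Value node's 6TB drive size is inferred from 48TB / 8 drives (assumption).
nodes = {
    "HD11048 (Value)": (8, 6),
    "HD31056 (Performance)": (14, 4),
    "HD32112 (Performance Multi-Processor)": (28, 4),
}

capacities = {name: raw_capacity_tb(count, size) for name, (count, size) in nodes.items()}

for name, tb in capacities.items():
    print(f"{name}: {tb}TB")

# Ratio of the new Performance node to the existing one.
ratio = capacities["HD32112 (Performance Multi-Processor)"] / capacities["HD31056 (Performance)"]
print(f"New vs existing Performance node: {ratio:.0f}x")
```

These are raw figures; usable capacity in a Ceph cluster depends on the replication or erasure-coding scheme chosen, which the announcement does not specify.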

The new HD Storage Manager appliance simplifies the complex Ceph environment for sysadmins. The GUI enables:

- Centralised management of HyperDrive and Ceph

- Update, monitor, maintain and upgrade HyperDrive nodes

- Manage file shares (SMB/CIFS and NFS), object stores (S3 and Swift), and block devices (iSCSI and RBD – RADOS block devices)

- Single HD product management facility

- Insight into Ceph storage health, utilisation and predicted storage growth

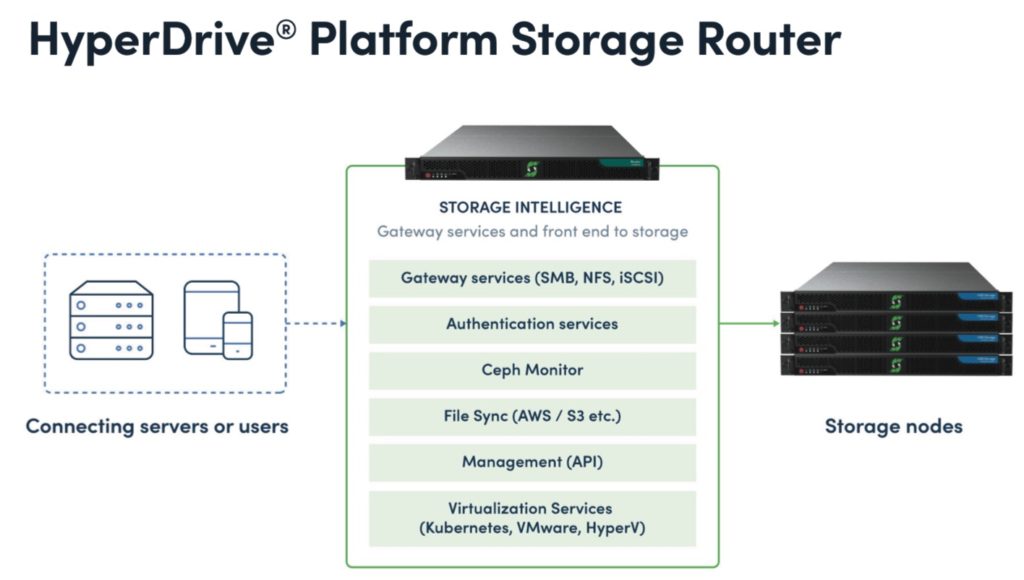

The HD Storage Router centralises front-end client access protocols to the storage nodes. It is a 1U system which provides Ceph’s native block (RBD), file (CephFS) and object (S3) access, and adds iSCSI block access plus NFS and SMB/CIFS file access. The iSCSI block and file protocols can operate simultaneously.

The NFS facility enables support of Linux file shares and virtual machine storage. SMB and CIFS enable support of Windows file shares.

Performance

SoftIron’s products use skinny ARM processors, whereas commodity storage boxes have beefier Xeon CPUs. However, SoftIron claims its integrated hardware and software systems outperform Ceph running on commodity dual-Xeon hardware.

With sequential reads and writes, a SoftIron entry-level system (8-core ARM CPU, 10 x 6TB 7,200rpm disk drives, 256GB SSD for write journalling) achieved 817MB/sec peak bandwidth.

A comparison cluster of three dual-Xeon systems achieved 650MB/sec, making SoftIron’s HyperDrive 26 per cent faster. Each Xeon node used 2 x 8-core Xeon E5-2630 v3 processors, 12 x 1.8TB SAS disk drives and 4 x 372GB SSDs.

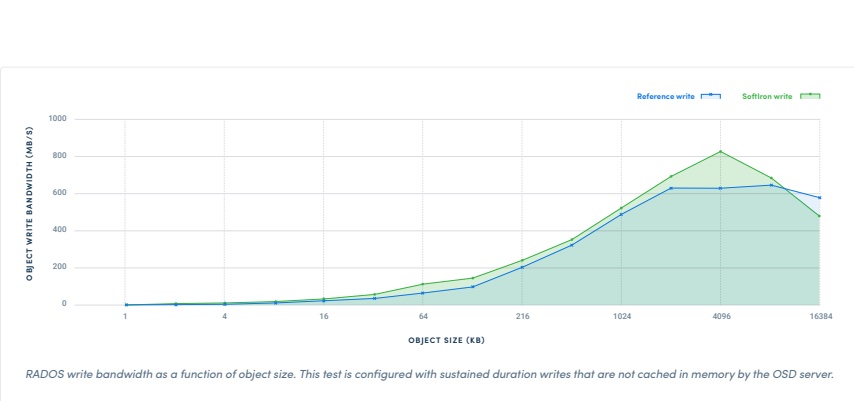

On cached random object writes, the SoftIron system peaked at 606MB/sec, with the dual-Xeon box going faster at 740MB/sec.

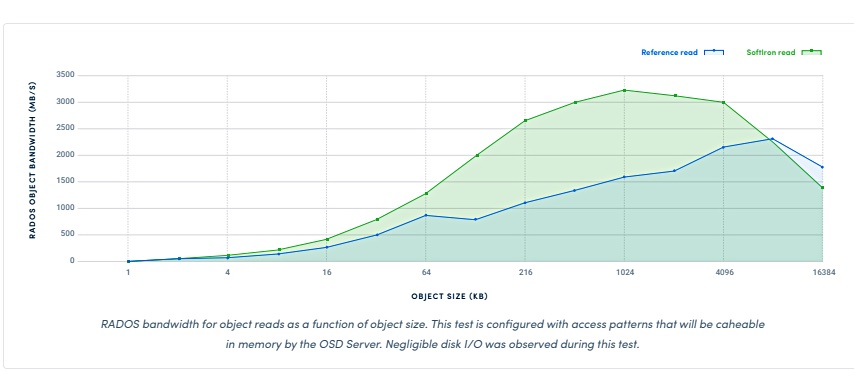

But SoftIron regained supremacy on cached random object reads. The Xeon system reached 2,294MB/sec while SoftIron’s box went 44 per cent faster at 3,300MB/sec.

The term ‘OSD’ on the charts stands for Object Storage Device, a storage node in Ceph terms.
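The percentage gaps between the two systems follow directly from the quoted bandwidth figures. The sketch below recomputes them; the `percent_faster` helper and the dictionary layout are hypothetical names introduced for illustration, and the only inputs are the MB/sec numbers given in this article.

```python
def percent_faster(fast_mb_s: float, slow_mb_s: float) -> float:
    """How much faster the first figure is than the second, as a percentage of the second."""
    return (fast_mb_s / slow_mb_s - 1.0) * 100.0

# Peak bandwidth in MB/sec, as quoted in the article.
results = {
    "sequential read/write": {"SoftIron": 817, "dual-Xeon": 650},
    "cached random object write": {"SoftIron": 606, "dual-Xeon": 740},
    "cached random object read": {"SoftIron": 3300, "dual-Xeon": 2294},
}

summary = {}
for test, figures in results.items():
    # Pick the faster and slower system for this test.
    winner = max(figures, key=figures.get)
    loser = min(figures, key=figures.get)
    margin = percent_faster(figures[winner], figures[loser])
    summary[test] = (winner, round(margin))
    print(f"{test}: {winner} is {margin:.1f} per cent faster than {loser}")
```

Rounded to whole numbers, this reproduces the 26 per cent and 44 per cent SoftIron leads quoted above, and puts the dual-Xeon lead on cached random object writes at about 22 per cent.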

Summary

The three new SoftIron products bring flash capacity almost up to disk-based capacity (112TB NAND vs 120TB disk), add improved and simplified management and extend Ceph usage by adding iSCSI and NFS gateway functionality. This means that an enterprise lacking Ceph-skilled admin staff can envisage using it for the first time with a variety of potential storage use cases.

However, the SoftIron system trails a dual-Xeon CPU system on cached random object writes (606MB/sec vs 740MB/sec). On cached random object reads and on sequential reads and writes it’s faster.

We have no information about the performance of SoftIron Ceph systems on block or file access.