DPU startup Fungible has rowed back from its data center composability ambitions, cancelling products to concentrate on its storage cluster technology using all-flash arrays controlled by its DPUs with no x86 involvement.

Blocks & Files was briefed on Fungible's new strategy by chief scientist Jai Menon and chief revenue officer Brian McCloskey. The pivot was needed for the company to grow, because the composability market is developing slowly and its lack of standards limits its expansion.

The scale-out Fungible storage cluster product is, we are told, significantly faster, more power-efficient and more space-efficient than competing SANs at delivering storage capacity to applications.

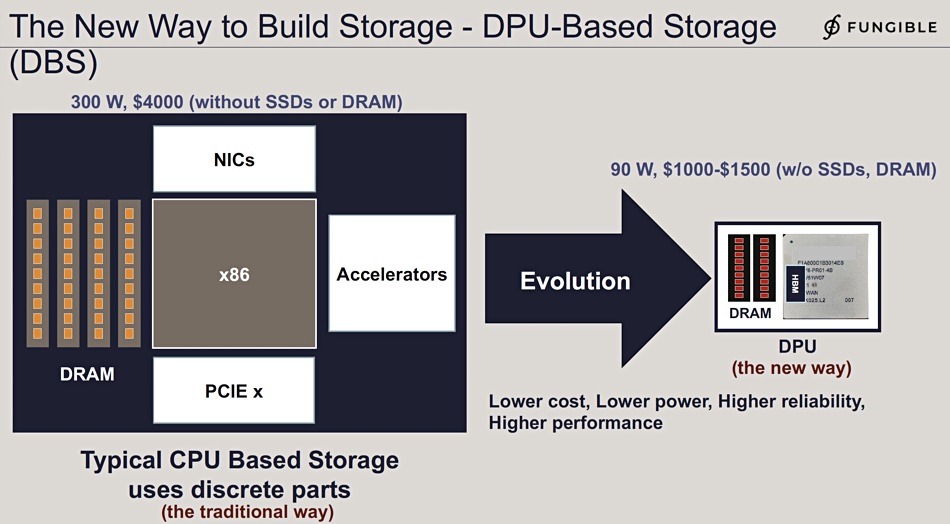

This is because its controller, based on Fungible's F1 DPU chip, is domain-specific rather than a general-purpose CPU such as an Intel or AMD x86 processor or an Arm CPU. It integrates the functionality of the many discrete components in an x86-based storage controller into a single chip.

Fungible’s FS1600 NVMe array storage node product does not append a SmartNIC to a dual-x86 controller array; it replaces the x86 controllers with its own DPU chips. It is, Menon said, DPU-based storage (DBS) versus traditional CPU-based storage (CBS).

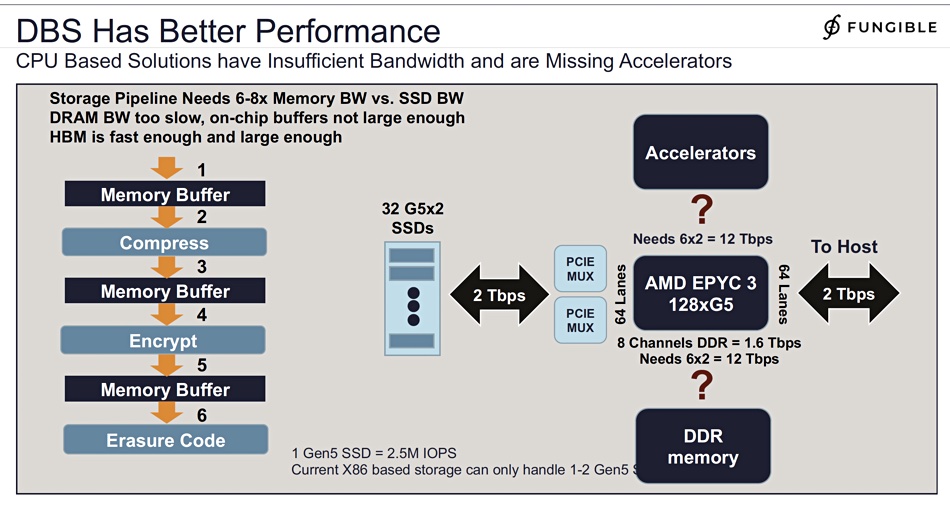

Menon showed a company slide that he said demonstrates the Fungible storage node's superiority over competing, legacy CBS systems. It claims a 10x speed advantage over existing SAN storage, and a 20x advantage on the TPC-H 6 benchmark.

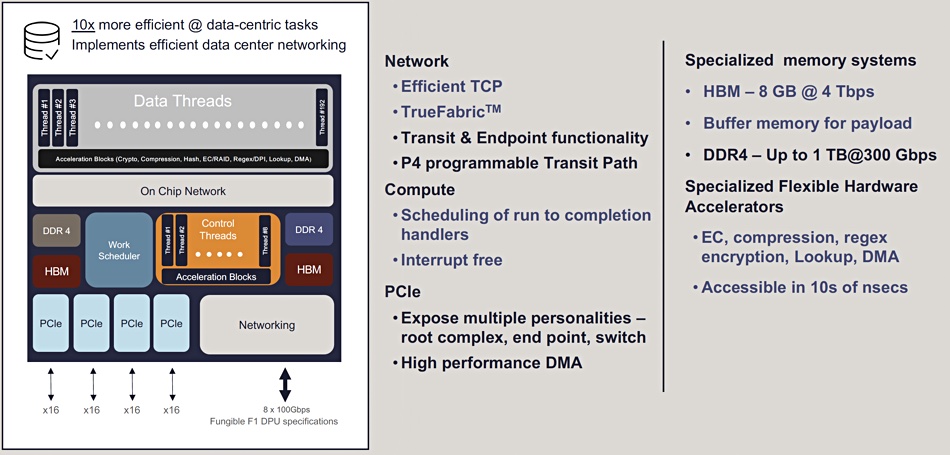

A prime reason for the performance boost over x86-based controllers, Fungible says, is that its F1 chip, with its on-package High Bandwidth Memory (HBM), does not need to repeatedly transfer data between hardware devices and DRAM.

Menon told us: “Storage stresses the memory systems of storage controllers, stored systems … as the data comes in, what you have to do is you have to move it into memory, then you have to take it out, you have to compress it, move it back into memory, you have to encrypt it, you have to erasure code it, you might have to add CRC on it, all of this puts a lot of stress on the bandwidth of the memory system.”

Traditional storage controllers’ “speed to memory is of the order of half a terabit per second. And, you know, the best I’ve seen is maybe one and a half terabits per second. But in our case, we have this HBM (High Bandwidth Memory) on the package on the DPU. That gives me four terabits per second.”
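Menon's argument can be made concrete with back-of-envelope arithmetic. The minimal sketch below assumes, purely for illustration, that every ingested byte crosses the memory bus a fixed number of times: one write and one read for each of the steps he lists (landing the data, compression, encryption, erasure coding and CRC). That crossing count is a hypothetical parameter, not a Fungible figure, and real controllers may offload or fuse some of these steps. Dividing the quoted memory bandwidth by the crossing count gives a rough ceiling on ingest rate.

```python
# Rough ceiling on storage ingest throughput when every byte must cross the
# controller's memory bus several times. The bandwidth figures are the ones
# Menon quoted; MEMORY_CROSSINGS is a hypothetical illustration only.

MEMORY_BANDWIDTH_TBPS = {
    "typical x86 controller": 0.5,
    "best x86 controller seen": 1.5,
    "Fungible F1 with on-package HBM": 4.0,
}

# Hypothetical: one write into memory plus one read back out for each of
# five steps (land the data, compress, encrypt, erasure code, add CRC).
MEMORY_CROSSINGS = 2 * 5


def max_ingest_tbps(memory_bandwidth_tbps: float, crossings: int) -> float:
    """Upper bound on ingest rate if each byte uses the bus `crossings` times."""
    if crossings <= 0:
        raise ValueError("crossings must be positive")
    return memory_bandwidth_tbps / crossings


if __name__ == "__main__":
    for name, bw in MEMORY_BANDWIDTH_TBPS.items():
        ceiling = max_ingest_tbps(bw, MEMORY_CROSSINGS)
        # Convert terabits/s to gigabytes/s: 1 Tb/s = 1000 Gb/s = 125 GB/s.
        print(f"{name}: {bw} Tb/s memory -> ~{ceiling * 125:.2f} GB/s ingest ceiling")
```

Whatever crossing count is assumed, the ratio between the ceilings equals the ratio of memory bandwidths (8x against a 0.5Tb/s controller, about 2.7x against a 1.5Tb/s one), so the parameter changes the absolute numbers but not the comparison.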

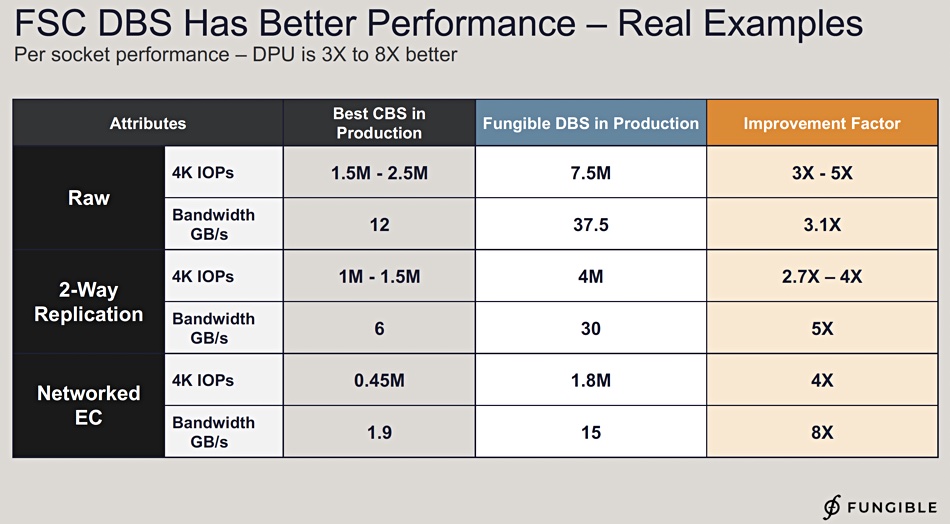

He provided a company slide showing the overall performance advantage of Fungible's DBS storage cluster node versus traditional x86 controller SAN storage on a per-CPU-socket basis.

It indicates 3 to 5x improvements in raw IOPS, a 3.1x improvement in raw bandwidth, and faster replication and networked error control, in both IOPS and bandwidth terms.

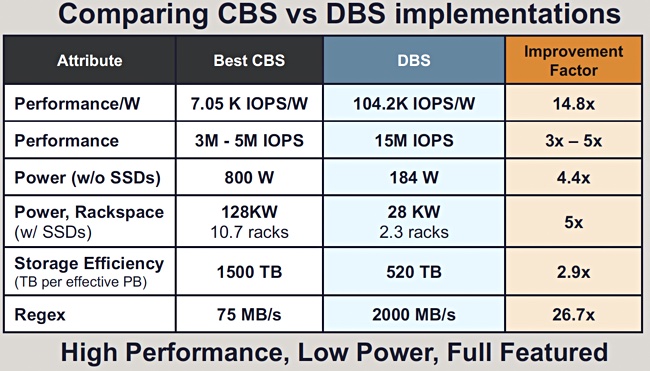

He then showed a chart comparing CBS and Fungible's DBS storage at the level of complete, implemented systems, with power efficiency taking a lead role.

Menon claimed that compared to x86-based storage controllers, “this is our new way; very integrated, lower cost, better performance. I’ve tried to summarize everything on one table … look at performance, and you do 3 to 5x, you can look at performance per watt and it gets even better. You can look at power and rackspace, I can give you 5x there, you will look at storage efficiency, you’re gonna look at regular expression (Regex) performance.”

This company slide also focuses on performance per watt, where Fungible claims a 14.8x advantage, as well as on its IOPS advantage. Menon said datacenter racks have a 12kW power limit. A CBS system needing 10.7 racks (128kW) could be replaced by a Fungible system needing just 2.3 racks and 28kW of electrical power, he told us.
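Menon's rack figures follow from dividing each system's power draw by the 12kW per-rack limit. The minimal sketch below assumes power is the only constraint on rack count (floor space, cooling and cabling are ignored) and reproduces the 10.7 and 2.3 rack figures, along with the whole-rack counts they round up to.

```python
import math

RACK_POWER_LIMIT_KW = 12.0  # per-rack power limit Menon cited


def racks_needed(total_power_kw: float, rack_limit_kw: float = RACK_POWER_LIMIT_KW) -> float:
    """Fractional number of racks needed when power, not space, is the constraint."""
    if rack_limit_kw <= 0:
        raise ValueError("rack_limit_kw must be positive")
    return total_power_kw / rack_limit_kw


if __name__ == "__main__":
    for label, power_kw in (("CBS system", 128.0), ("Fungible system", 28.0)):
        racks = racks_needed(power_kw)
        # A partial rack still occupies a whole rack on the datacenter floor.
        print(f"{label}: {power_kw:.0f} kW -> {racks:.1f} racks "
              f"({math.ceil(racks)} whole racks)")
    print(f"power ratio: {128.0 / 28.0:.1f}x")
```

The power ratio works out to roughly 4.6x, which is the scale of saving behind Menon's "5x" remark on power and rackspace.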

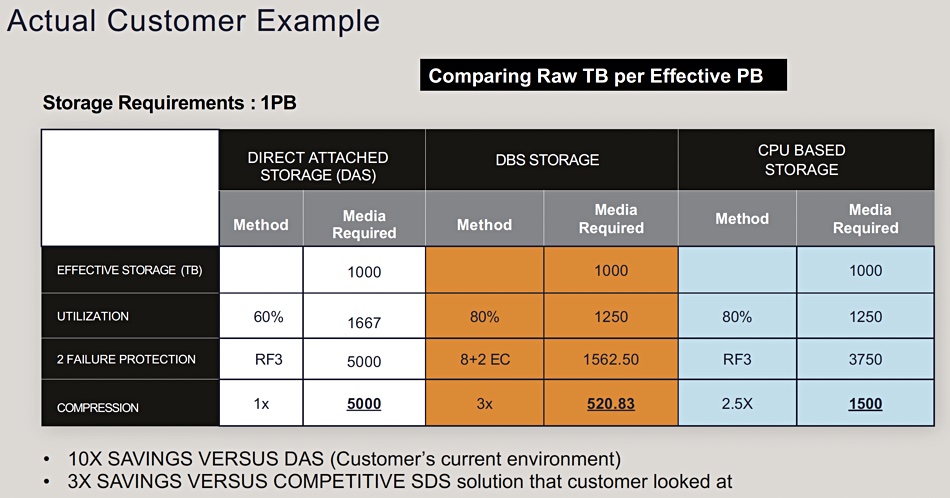

These Fungible benchmarks may interest people deploying large numbers of storage nodes in petabyte-scale SAN clusters. The cost-efficiency numbers in a further chart Menon showed should also attract interest.

This chart compares Fungible's DBS with direct-attached storage (DAS) and external x86 SAN storage against a 1PB effective capacity requirement. Fungible claims that because its system has better utilization, lower protection overhead, error correction coding and better compression, it needs less raw capacity (520.8TB) to meet the 1PB requirement than either DAS (5PB) or CBS (1.5PB).
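The raw-capacity figures imply very different ratios of effective to raw capacity. The sketch below, assuming decimal units (1PB = 1,000TB), derives those ratios from Fungible's stated numbers and adds a generic helper, `raw_needed_tb`, showing how utilization, data reduction and protection overhead combine. The helper's name and the example parameter values are hypothetical illustrations, not Fungible's actual model or settings.

```python
EFFECTIVE_REQUIREMENT_TB = 1000.0  # 1PB, assuming decimal units

# Raw capacity Fungible says each approach needs to deliver 1PB effective.
RAW_TB = {"Fungible DBS": 520.8, "x86 SAN (CBS)": 1500.0, "DAS": 5000.0}


def raw_needed_tb(effective_tb: float, utilization: float,
                  data_reduction: float, protection_overhead: float) -> float:
    """Raw capacity needed to deliver `effective_tb` of usable capacity.

    utilization: fraction of raw capacity that can actually be filled, in (0, 1]
    data_reduction: compression ratio, e.g. 2.0 for 2:1
    protection_overhead: raw bytes stored per data byte, e.g. 1.25 for
        8+2 erasure coding or 3.0 for triple replication
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    if data_reduction <= 0 or protection_overhead < 1:
        raise ValueError("invalid data_reduction or protection_overhead")
    return effective_tb * protection_overhead / (utilization * data_reduction)


if __name__ == "__main__":
    # Ratios implied by the numbers on Fungible's chart.
    for name, raw in RAW_TB.items():
        print(f"{name}: {EFFECTIVE_REQUIREMENT_TB / raw:.2f} TB effective per raw TB")
    # Hypothetical parameters, for illustration only.
    print(f"example: {raw_needed_tb(1000.0, 0.8, 2.0, 1.25):.1f} TB raw")
```

An effective-to-raw ratio above 1, as Fungible claims for DBS, is only possible when data reduction more than offsets protection overhead and unused capacity.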

Menon said: “This is an industry that’s somewhat conservative. And we’re going in and saying, look, there’s a new way to do this, there’s a new way to build storage. The message is resonating, we are getting large customers, we’re focusing on a certain kind of customer.”

“Our customers, especially our largest customers, are buying it for other reasons … It’s the one fifth of rackspace needed. It’s the enormous millions of dollars that they are saving per year. So we have other strengths that customers are seeing beyond the performance part.”

Talking about competition from the likes of NetApp, Pure, Pavilion, and others, Menon said: “Once some of these big customers come out, and we actually make some announcements, then that will be so powerful as to really, really change the tide, and help us fight off the big guys.”

McCloskey said: "There's some customer validation, external stuff that we're working on, that we hope to be able to share in the coming months that I think will blow your socks off when you see it."

Fungible sells through a direct sales channel and has no known partnerships at present. Blocks & Files thinks it could partner with Weka, as that company uses NVMe SSDs with its scale-out, parallel Matrix filesystem.

Thanks to its hardware design and software, Fungible has a very fast 2×24-slot all-flash SAN node. It also looks to have a story to tell on power and space efficiency, and should appeal on these three counts to customers looking for multi-petabyte SAN deployments with NVMe SSDs.

PCIe gen 4 SSDs will stress storage controllers more than gen 3 drives, and gen 5 SSDs will up the stress further. If Fungible is correct in its analysis of x86 controller memory limitations, the tide of SSD development is flowing its way.
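A back-of-envelope sketch shows why each PCIe generation raises the pressure. It assumes x4 NVMe SSDs and counts only link bandwidth after 128b/130b line encoding, ignoring protocol overhead and the fact that real drives rarely saturate their links; the 24-drive count is a hypothetical example of one fully populated 24-slot bank. On those assumptions, a bank of gen 4 or gen 5 drives could approach or exceed the 0.5 to 1.5Tb/s memory bandwidth Menon attributed to x86 controllers, before any byte is moved through memory more than once.

```python
# PCIe transfer rates in GT/s per lane; gens 3 to 5 all use 128b/130b encoding,
# and each transfer carries one bit per lane.
PCIE_GT_PER_S = {3: 8.0, 4: 16.0, 5: 32.0}
ENCODING_EFFICIENCY = 128 / 130


def drive_link_gbps(gen: int, lanes: int = 4) -> float:
    """Usable link bandwidth of one drive in Gb/s (encoding overhead only)."""
    return PCIE_GT_PER_S[gen] * ENCODING_EFFICIENCY * lanes


def bank_tbps(gen: int, drives: int, lanes: int = 4) -> float:
    """Aggregate drive link bandwidth of `drives` SSDs in Tb/s."""
    return drive_link_gbps(gen, lanes) * drives / 1000


if __name__ == "__main__":
    drives = 24  # hypothetical: one fully populated 24-slot bank
    for gen in sorted(PCIE_GT_PER_S):
        print(f"PCIe gen {gen}: {drives} x4 drives -> "
              f"{bank_tbps(gen, drives):.2f} Tb/s of drive bandwidth")
```

Each generation doubles the per-lane rate, so the same slot count delivers twice the data a controller's memory system must absorb.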