A study of Kubernetes storage supplier performance shows that cloud-native environments expose how efficient each supplier's storage code is, and that this efficiency has a knock-on effect on performance.

In a recently updated review, Jakub Pavlík of Volterra determined that Portworx and MayaData’s OpenEBS Mayastor performed best in delivering block storage IO to containers.

It is not immediately obvious why the efficiency of different Kubernetes storage suppliers should differ. A cloud-native server has a hypervisor/operating system core running containers. Containers should be efficient users of hardware resources. There is little or no OS duplication, unlike a virtual server, which has a hypervisor running guest virtual machines, each with its own operating system as well as application code.

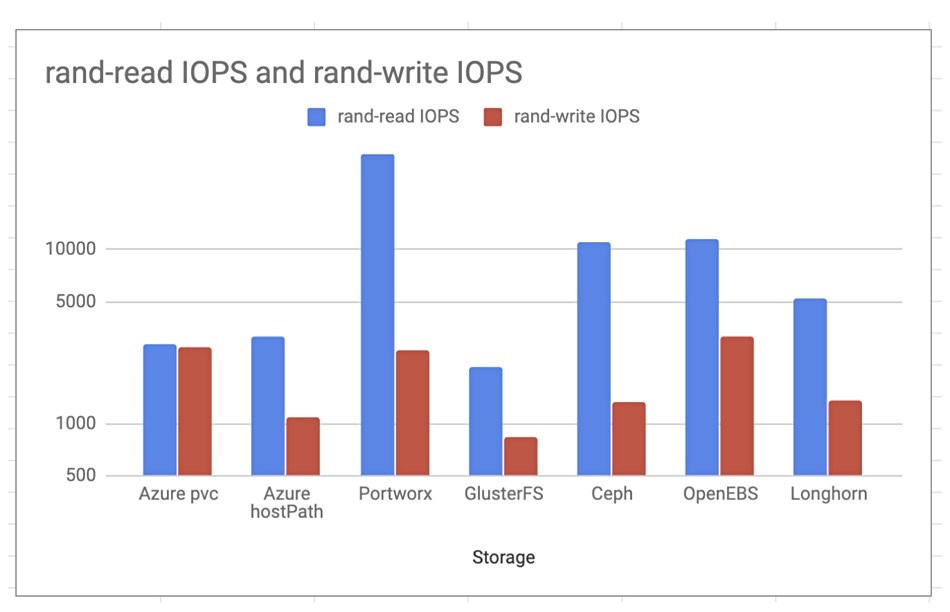

Pavlík’s evaluation of block storage for containers also included Ceph Octopus, Rancher Labs Longhorn, GlusterFS and Azure PVC. Ceph was the third fastest at container storage on the Azure Kubernetes Service (AKS).

Portworx was ahead of every other supplier with random reads.

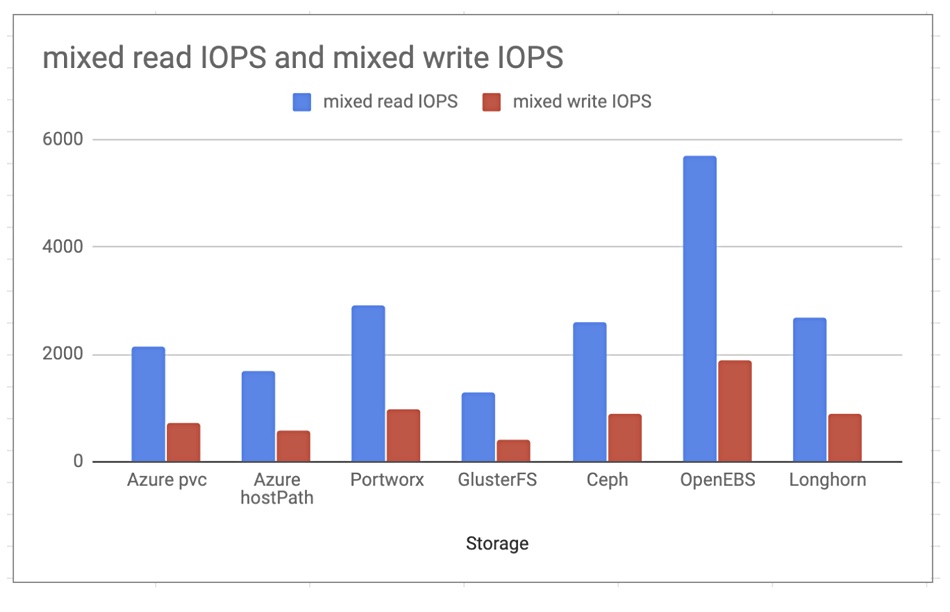

Mayastor was ahead with mixed reads and writes.

Mayastor benchmarks

MayaData’s Mayastor is based on OpenEBS, an open source CNCF project that the company created. OpenEBS is a foundational storage layer that enables Mayastor and others to abstract storage in the same way that Kubernetes abstracts compute.

The company earlier this month published some storage performance benchmarks of OpenEBS Mayastor working in tandem with NVMe and Intel Optane SSDs.

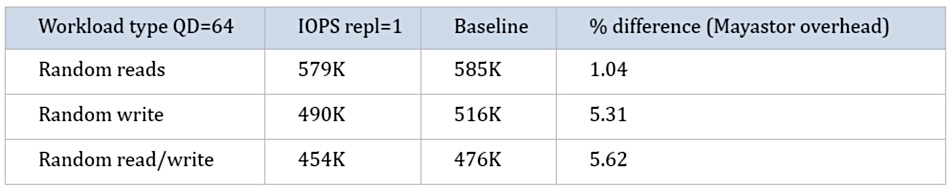

MayaData established baseline Optane performance using fio, the Flexible I/O tester available on GitHub. An Optane SSD with an NVMe interface delivered 585K, 516K and 476K IOPS for random reads, random writes and 50/50 mixed reads/writes, respectively.
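To make the three baseline workloads concrete, here is a minimal Python sketch that builds fio command lines for random read, random write and a 50/50 random mix. The fio options are standard ones, but the device path, block size, queue depth and runtime are assumptions chosen for illustration; the article does not state the settings MayaData used. The helper `fio_command` is hypothetical and only assembles arguments; it does not run anything.

```python
from typing import Dict, List

# Map each workload to fio's --rw mode. The 50/50 mix uses randrw with
# --rwmixread=50, meaning half of the I/Os are reads.
WORKLOADS: Dict[str, List[str]] = {
    "random_read": ["--rw=randread"],
    "random_write": ["--rw=randwrite"],
    "mixed_50_50": ["--rw=randrw", "--rwmixread=50"],
}


def fio_command(
    workload: str,
    device: str,
    block_size: str = "4k",  # assumed; not given in the article
    iodepth: int = 32,       # assumed; not given in the article
    runtime_s: int = 60,     # assumed; not given in the article
) -> List[str]:
    """Build a fio argv list for one workload. Nothing is executed here."""
    return [
        "fio",
        f"--name={workload}",
        f"--filename={device}",
        "--ioengine=libaio",   # Linux asynchronous I/O engine
        "--direct=1",          # bypass the page cache
        f"--bs={block_size}",
        f"--iodepth={iodepth}",
        f"--runtime={runtime_s}",
        "--time_based",
        "--output-format=json",
        *WORKLOADS[workload],
    ]


if __name__ == "__main__":
    # "/dev/nvme0n1" is a placeholder device path. Write workloads against
    # a raw device destroy its contents, so use a scratch drive only.
    for name in WORKLOADS:
        print(" ".join(fio_command(name, "/dev/nvme0n1")))
```

Running the same three jobs first against the local drive and then against the volume presented to a container gives directly comparable IOPS figures.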

MayaData then had OpenEBS Mayastor provide storage to containers by reading from and writing to the Optane drive, using NVMe-oF as the data transport across a network. It measured the delivered IOPS and found little difference from the baseline (1 to 5.6 per cent).
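As a rough illustration of what a 1 to 5.6 per cent difference means in absolute terms, the sketch below applies both ends of that range to the three baseline figures. It assumes the difference is a reduction relative to the baseline, and it applies the full range to every workload because the article does not say which percentage belongs to which test. The resulting numbers are therefore bounds implied by the published figures, not measured results.

```python
# Baseline IOPS reported for the local Optane SSD.
BASELINE_IOPS = {
    "random read": 585_000,
    "random write": 516_000,
    "50/50 mixed": 476_000,
}

# Reported range of the difference, assumed here to be a loss versus baseline.
MIN_OVERHEAD_PCT = 1.0
MAX_OVERHEAD_PCT = 5.6


def overhead_pct(baseline: float, delivered: float) -> float:
    """Percentage of baseline IOPS lost on the way to the container."""
    return (baseline - delivered) / baseline * 100.0


def delivered_at(baseline: float, pct: float) -> float:
    """IOPS remaining after a given percentage overhead."""
    return baseline * (1.0 - pct / 100.0)


if __name__ == "__main__":
    for name, base in BASELINE_IOPS.items():
        low = delivered_at(base, MAX_OVERHEAD_PCT)
        high = delivered_at(base, MIN_OVERHEAD_PCT)
        print(f"{name}: between {low:,.0f} and {high:,.0f} IOPS delivered")
```

Given a real pair of measurements, `overhead_pct(baseline, delivered)` returns the percentage figure directly.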