Storj briefed B&F on its decentralized storage offering, revealing why Web3 storage decentralizers use cryptocurrency and blockchain, and how Storj makes decentralized storage fast.

Decentralized storage (dStorage) takes the virtual equivalent of an object storage system and stores data fragments on globally distributed nodes owned by independent operators who are paid using cryptocurrency, with storage I/O transactions recorded using blockchain. You realise how odd this is when you notice that the nodes in an on-premises object storage system don’t use blockchain to record their transactions and don’t require any cryptocurrency payments. But it’s Web3, so, cool?

Storj offers decentralized cloud object storage (DCS) software, previously known as Tardigrade, which uses MinIO and is S3-compatible. John Gleeson is Storj’s COO and he answered the cryptocurrency query: “It is all about cross-border payments. The cryptocurrency allows us to pay what is currently about 9,000 operators, operating 16,000 storage nodes in 100 different countries in a way that I can’t think of any other way that we would do that. Really, the cryptocurrency component isn’t innovation.”

If Storj paid its storage node providers – its operators – in fiat currency then it would have to manage foreign exchange rates and transactions. Using cryptocurrency lets Storj avoid foreign exchange complications – the operator gets paid within the cryptocurrency regime and each one deals with conversion to their local real money.

Blockchain

The use of blockchain is inherent in cryptocurrencies but is also generally used in dStorage to confirm and verify that data I/O has taken place and that storage capacity is present and available in the dStorage network.

A blockchain is a decentralized ledger of transactions across a peer-to-peer network and is only needed because the peers in the network cannot natively be trusted to be present and performing as they should. In an on-premises multi-node storage system the nodes can be physically seen and the system controllers know of their presence and activity. Indeed, if a node goes down, the system can detect this, via a missing heartbeat signal, say, and fail over to a working node.

That is not the case in a general peer-to-peer network, where there are no controllers. A node in Guatemala can go down with no other peer system realizing it. Blockchain technology is used to verify that operating nodes are active and performing correctly. For example, in the Filecoin storage network, blockchain verification is based on operators providing proof of replication and proof of space-time (PoSt).

Storj only uses blockchain for its internal cryptocurrency transactions, as Gleeson said: “We use blockchain only for the payment. Our token is an ERC-20-compatible token built on the Ethereum blockchain. And that is the extent of the blockchain technology in our product.”

Technology versus philosophy

Several dStorage providers believe that the world wide web has become too centralized in the hands of massive corporations, such as Amazon, Google and Microsoft. They believe in the wisdom of crowds, the computation-supported ability of providers and users to interact with and operate an internet that is not dominated by large corporations. For them blockchain is the technology – the golden doorway – opening the internet to freedom, with self-policed users and operators working within a cryptocurrency environment safeguarded by the technological magic that is blockchain.

But businesses want to store data – safely and cost-effectively. They have no philosophical desire to overturn Amazon because Amazon’s existence is somehow just wrong. Indeed, most would like to be Amazon. For them the storage of data has to be fast – I/O performance matters, and dStorage is generally only fast enough for storing archival data. It’s slow, in other words.

For example, in the Filecoin network, storing a 1MiB (about 1.05MB) file can take five to ten minutes from the start (deal acceptance) to the end of the upload process (deal registration on the blockchain, aka appearance on-chain).

Storj characteristics

Gleeson said Storj is different and does not use blockchain for storage I/O transactions – the deals in which operators make storage capacity available to clients, accept and store and then retrieve the clients’ data.

He said: “Blockchain requires high computation, it tends to have very high latency. And if you have a storage business and you have an hour and a half to save a file and retrieve a file synchronously, that’s not a thing that that many people can use well. But if you’ve got sub-second latency, and you’ve got high throughput, then you have a product that addresses a broad range of use cases,” and not just archiving.

Storj asked itself, Gleeson said: “Could you actually use some of the primitives of decentralized systems but without a distributed ledger, without a true blockchain, without a high energy consuming proof of work component? Just tap into what is ultimately a system of thousands and millions of hard drives all around the planet that are 10 or 20 percent full, and aggregate that under-utilized capacity in a way that you can take advantage of some of the benefits of proven distributed systems like Gluster, and Ceph?”

You would need to create an incentivized system of participation with a zero trust architecture and layers of strong encryption, and have easy-to-use but powerful access management capabilities, to deliver such a cloud storage product.

And that is what Gleeson says Storj did. “You just take a fundamentally different approach, which is the Airbnb approach. Right? You can try and build hotels or you can aggregate the excess capacity of people’s rooms all around the world.”

He said: “We’re delivering an enterprise grade service. We’re the only decentralized project offering an SLA: 11 nines of durability, 99.97 percent availability.”
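To put the availability figure in context, here is a rough Python calculation of how much downtime 99.97 percent availability permits. The 30-day month and the function name are our own assumptions for illustration; Storj's SLA may define its measurement window differently.

```python
def allowed_downtime_minutes(availability_percent: float, days: float = 30.0) -> float:
    """Minutes of downtime permitted over `days` at the given availability."""
    unavailable_fraction = 1.0 - availability_percent / 100.0
    return unavailable_fraction * days * 24 * 60


# Assumption: a 30-day month and a 365-day year as the measurement windows.
monthly = allowed_downtime_minutes(99.97)
yearly = allowed_downtime_minutes(99.97, days=365)

print(f"{monthly:.1f} minutes per month, {yearly / 60:.1f} hours per year")
```

On those assumptions the figure works out to roughly 13 minutes a month.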

Cost

The storage cost is $0.004/GB/month and bandwidth (egress) costs $0.007/GB. Multi-region capability is included at no charge. This is cheaper than AWS, by far, and also Wasabi and Backblaze.
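As a rough illustration of what those list prices mean for a bill, the sketch below uses the two rates quoted above. The workload (10TB stored, 2TB downloaded in a month) is hypothetical, and we assume decimal units (1TB = 1,000GB) and no other charges.

```python
STORAGE_USD_PER_GB_MONTH = 0.004  # rate quoted in the article
EGRESS_USD_PER_GB = 0.007         # rate quoted in the article


def monthly_cost_usd(stored_gb: float, egress_gb: float) -> float:
    """Storage plus egress charge for one month, in US dollars."""
    return stored_gb * STORAGE_USD_PER_GB_MONTH + egress_gb * EGRESS_USD_PER_GB


# Hypothetical workload: 10,000 GB stored and 2,000 GB downloaded in the month.
cost = monthly_cost_usd(stored_gb=10_000, egress_gb=2_000)
print(f"${cost:.2f} per month")
```

That is $40 for storage plus $14 for egress, or $54 for the month.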

Gleeson said: “Because we’re not building buildings and stuffing them full of servers and hard drives, we’re able to really capitalize on the unit economics here, and offer a product that is 1/5 to 1/40th of the price of Amazon depending on your use case.”

Storj is “tapping into any datacenter, any computer anywhere that has excess hard drive capacity. And when you can share that with the network, we’re able to aggregate all of that capacity as one logical object store and present that to applications to store data. It allows individuals to monetize their unused capacity. … And it gives us the edge in terms of security, pricing and performance.”

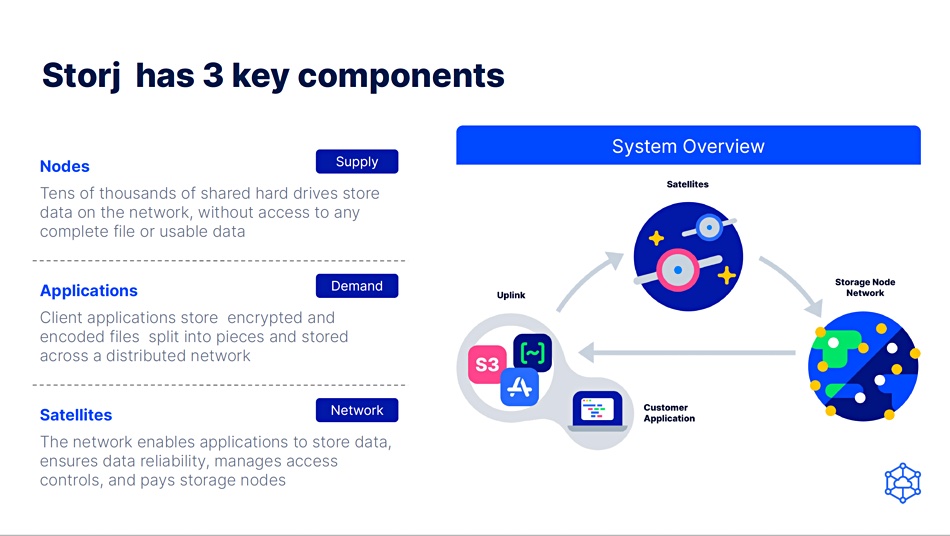

Customer applications talk via an uplink to so-called satellite nodes which, in turn, link to the storage capacity-providing nodes. Storage node operators are paid for storage and egress. We could think of a node as a virtual storage drive and a satellite as the rough equivalent of a filer (metadata) controller, which knows which drives (nodes) store which data shards.

Incoming data files or objects are split into >80 sections (shards), encrypted, erasure-coded and spread across a subset of the available nodes. Any 29 shards can be used to reconstruct lost data.
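The trade-off in a "any 29 shards will do" scheme can be sketched with a little arithmetic. In the Python below, the shard count of exactly 80 is our assumption (the text only says more than 80), and the loss calculation assumes every node fails independently with the same probability and ignores any repair process, so it is an illustration rather than a model of Storj's real durability.

```python
from math import comb

N_SHARDS = 80  # assumption: the article only says "more than 80"
K_NEEDED = 29  # from the article: any 29 shards can rebuild the data


def expansion_factor(n: int, k: int) -> float:
    """Raw capacity consumed per unit of user data in a k-of-n scheme."""
    return n / k


def loss_probability(n: int, k: int, p_node_lost: float) -> float:
    """Chance that fewer than k of n shards survive.

    Assumes independent, identical node failures and no repair.
    """
    p_alive = 1.0 - p_node_lost
    return sum(comb(n, i) * p_alive**i * p_node_lost ** (n - i) for i in range(k))


print(f"expansion factor: {expansion_factor(N_SHARDS, K_NEEDED):.2f}x")
print(f"shards that can be lost: {N_SHARDS - K_NEEDED}")
# Hypothetical 10 percent chance of losing any single node.
print(f"loss probability: {loss_probability(N_SHARDS, K_NEEDED, 0.10):.3e}")
```

With these numbers the scheme stores about 2.76 bytes for every byte of user data and tolerates the loss of 51 shards, which is why spreading shards over many independent nodes can yield high durability without full replicas.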

Performance and test

Storj offers performance better than S3 for many workloads, with latency measured in milliseconds, and says it is content delivery network class. It writes data from clients and returns data to them (read I/O) using parallel transfers to and from the shard-storing nodes. Storj claims a typical laptop can achieve 1Gbit/sec transfer speed with a supporting internet connection, while more powerful servers can exceed 5Gbit/sec for both downloads (reads) and uploads (writes). Extremely powerful servers on strong networks can transfer large datasets at more than 10Gbit/sec.
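One way parallel reads can help, given that any sufficient subset of shards can rebuild the data, is to request more shards than needed and keep whichever arrive first, so slow nodes do not hold up the read. The Python sketch below simulates that idea with fake nodes. It is our own illustration, not Storj's uplink code; `fetch_first_k`, `make_fake_node` and the delays are hypothetical.

```python
import time
from concurrent.futures import ThreadPoolExecutor, as_completed


def fetch_first_k(fetchers, k):
    """Start every fetch in parallel and return the first k successful results."""
    results = []
    pool = ThreadPoolExecutor(max_workers=len(fetchers))
    try:
        futures = [pool.submit(fetch) for fetch in fetchers]
        for future in as_completed(futures):
            try:
                results.append(future.result())
            except Exception:
                continue  # a failed node is simply skipped
            if len(results) == k:
                return results
    finally:
        pool.shutdown(wait=False)  # do not block on the slow stragglers
    raise RuntimeError(f"only {len(results)} of the required {k} shards arrived")


def make_fake_node(shard_id, delay_s):
    """Hypothetical storage node: returns its shard id after a fixed delay."""
    def fetch():
        time.sleep(delay_s)
        return shard_id
    return fetch


# Five simulated nodes with different response times; only three shards are needed.
delays = [0.05, 0.01, 0.20, 0.02, 0.30]
fetchers = [make_fake_node(i, d) for i, d in enumerate(delays)]
fastest = fetch_first_k(fetchers, k=3)
print(sorted(fastest))
```

The read completes as soon as the third-fastest node responds, rather than waiting for the slowest one.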

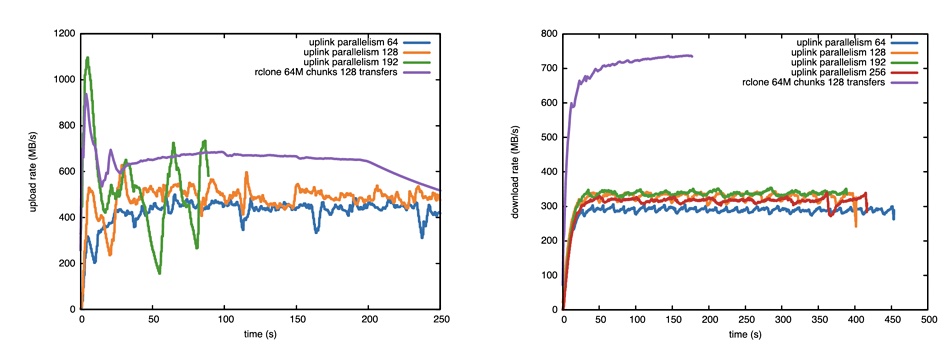

A University of Edinburgh academic, Professor Antonin Portelli, has written a paper about Storj performance when storing HPC data. A simulated 128GB HPC file filled with random data was uploaded to Storj’s decentralized cloud storage (DCS) from the DiRAC Tursa supercomputer at the University of Edinburgh. The server used had a dual-socket AMD EPYC 7H12 processor with 128 cores and 1TB of DRAM.

The files were then downloaded from a number of supercomputing centers in the USA and the download speed measured. All the upload and download sites had multi GB/sec access to the internet. Here are the results:

Upload rates were in the 400 to 600MB/sec range with download rates in the 280 to 320MB/sec area. Splitting the files manually achieved a 700MB/sec download rate (purple line) and >600MB/sec upload rate. Portelli comments: “It is safe to state that these rates are overall quite impressive, especially considering that thanks to the decentralized nature of the network, there is no need for researching an optimal network path between the source and destination.”
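For a sense of scale, the quoted rates translate into transfer times for a 128GB file as follows. This is simple arithmetic on the figures above, assuming decimal units (1GB = 1,000MB) and a sustained rate for the whole transfer; the labels are ours.

```python
def transfer_seconds(size_gb: float, rate_mb_per_s: float) -> float:
    """Time to move size_gb at a sustained rate, assuming 1 GB = 1,000 MB."""
    return size_gb * 1000 / rate_mb_per_s


FILE_SIZE_GB = 128  # file size used in the test described above

# Rates in MB/sec taken from the reported ranges.
rates = [
    ("upload, low end", 400),
    ("upload, high end", 600),
    ("download, low end", 280),
    ("download, high end", 320),
    ("download, manually split", 700),
]

for label, rate in rates:
    seconds = transfer_seconds(FILE_SIZE_GB, rate)
    print(f"{label}: {seconds:.0f} s ({seconds / 60:.1f} min)")
```

At 400MB/sec the file uploads in 320 seconds, a little over five minutes, and at 320MB/sec it downloads in 400 seconds.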

This is a level of performance greater than Filecoin or other blockchain-centric dStorage networks can achieve.

Storj says its performance is fast enough for it to be used for video storage and streaming, cloud-native applications, software and large file distribution, and as a backup target. Gleeson said performance should improve further in 2023 due to better geographic placement and seeking algorithms, and Reed-Solomon (erasure coding) adjustments.