CXL memory pooling could drastically increase the amount of memory available to in-memory applications such as the Redis database. But what does Redis itself think?

Memory pooling with CXL uses the Compute Express Link protocol, based on the PCIe 5 bus, to give servers access to larger pools of memory than they would have with local, socket-attached DRAM alone.

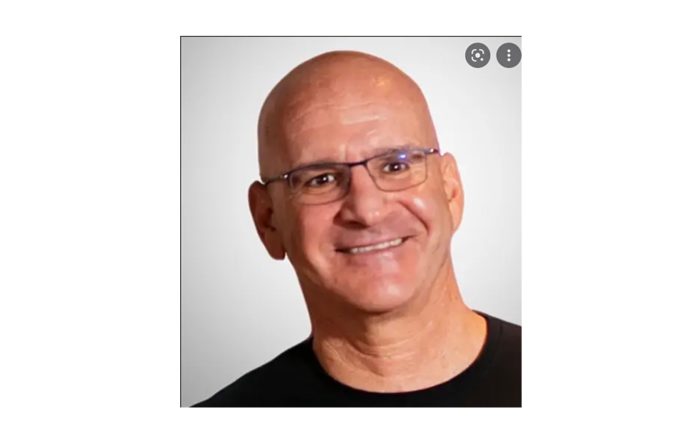

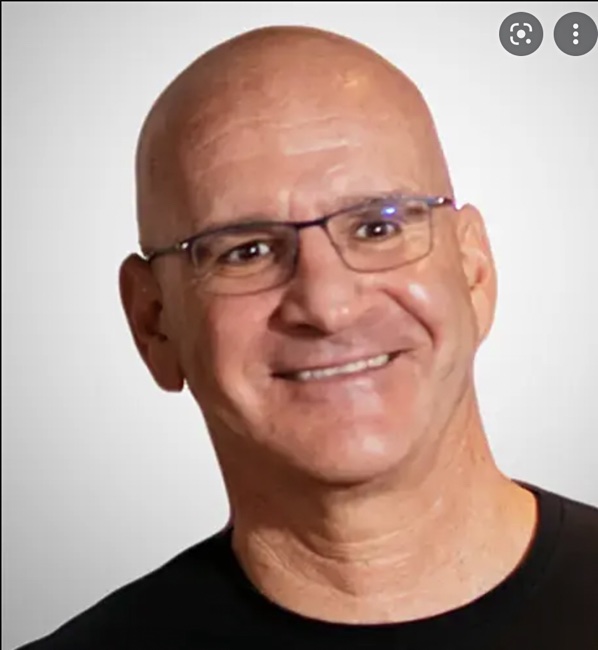

We spoke to Redis CTO and co-founder Yiftach Shoolman, who thinks CXL memory pooling is a positive development.

Blocks and Files: How do you see CXL 2.0 memory pooling affecting Redis?

Yiftach Shoolman: Redis is an in-memory real-time datastore (cache, database, and everything in between); as such, CXL enables Redis to support larger datasets at a lower price per GB as it extends the capacity of each node in the Redis cluster with more cost-effective DRAM.

Furthermore, CXL enables the deployment of more memory per core (or virtual cores) in each node in the Redis cluster. This is great for users who use Redis Enterprise (our commercial software and cloud service) as they can now deploy more databases or caches on the same infrastructure and reduce their deployment costs using the built-in multi-tenant capability of Redis Enterprise.

Blocks and Files: How could Redis manage the access speed discrepancy between a server’s directly connected DRAM and the CXL memory pool?

Yiftach Shoolman: Redis Enterprise uses its Redis on Flash technology to enable memory tiering capabilities, in which hot objects are stored in DRAM, and warm objects are stored in SSD. CXL provides another (middle) tier that can potentially extend this hierarchy, i.e. hot objects will be stored in DRAM, the warm objects in CXL (cheap memory or Persistent Memory), and cold objects in SSD.
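The three-tier hierarchy Shoolman describes can be illustrated with a small toy model. This is only a sketch under stated assumptions, not how Redis Enterprise or Redis on Flash is implemented: the `TieredStore` class, the tier names, and the read-count thresholds are all hypothetical, and new keys start in the cold tier purely to keep the example short.

```python
from dataclasses import dataclass, field

# Hypothetical tier names, ordered fastest to slowest; not Redis identifiers.
TIERS = ("dram", "cxl", "ssd")


@dataclass
class TieredStore:
    """Toy three-tier store that places keys by how often they are read."""
    hot_threshold: int = 10   # assumed: reads needed to count as hot
    warm_threshold: int = 3   # assumed: reads needed to count as warm
    tiers: dict = field(default_factory=lambda: {t: {} for t in TIERS})
    reads: dict = field(default_factory=dict)

    def _target_tier(self, key):
        # Map a key's read count to hot (DRAM), warm (CXL), or cold (SSD).
        count = self.reads.get(key, 0)
        if count >= self.hot_threshold:
            return "dram"
        if count >= self.warm_threshold:
            return "cxl"
        return "ssd"

    def tier_of(self, key):
        # Return the tier currently holding the key, or None if absent.
        for name in TIERS:
            if key in self.tiers[name]:
                return name
        return None

    def put(self, key, value):
        # Remove any old copy, then place the key by its current read count.
        current = self.tier_of(key)
        if current is not None:
            del self.tiers[current][key]
        self.tiers[self._target_tier(key)][key] = value

    def get(self, key):
        # Count the read, then promote the key if it crossed a threshold.
        current = self.tier_of(key)
        if current is None:
            raise KeyError(key)
        value = self.tiers[current].pop(key)
        self.reads[key] = self.reads.get(key, 0) + 1
        self.tiers[self._target_tier(key)][key] = value
        return value
```

In this model a key that is read often migrates from the SSD tier to the CXL tier and then to DRAM. A production system would also need demotion, capacity limits per tier, and eviction, none of which are modelled here.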

Blocks and Files: Is there a role for storage class memory in a CXL memory pool?

Yiftach Shoolman: Yes, the CXL standard supports DRAM memory and NAND flash memory like Intel’s Persistent Memory. And it’s up to the infrastructure providers to decide the ratio between the different memory technologies that are (or will be) available for the users.

Blocks and Files: Will CXL memory pooling be more relevant to customers of hyperscale cloud providers or enterprises?

Yiftach Shoolman: I believe we are still in the early stages of adoption. That said, and theoretically speaking, enterprises have more flexibility to decide about the type of hardware they will use in their infrastructure. But, on the other hand, if the hyperscale cloud providers find CXL attractive, the adoption of this technology for mainstream use cases will be much faster.

Blocks and Files: How might such Redis customers differ in their use of CXL memory pooling? Will it just or mostly be the sheer size of the memory pool?

Yiftach Shoolman: Sizing is just one parameter. The others are the type of memory they would like to use with CXL. Is it regular DRAM, or is it slower but cheaper DRAM or Persistent Memory?

Blocks and Files: How could the data in Redis in a CXL memory pool be persisted and then protected?

Yiftach Shoolman: From the Redis perspective, CXL is just another pool of memory. Therefore each one of the Redis persistence mechanisms will continue to operate as if CXL were regular DDR memory.

Blocks and Files: Are there other points relevant to Redis and CXL memory pooling?

Yiftach Shoolman: I believe the success of CXL memory pooling is mainly dependent on the adoption of the hyperscale cloud providers. Without it, CXL might be just a niche solution for specific use cases.

Comment

In Shoolman's view, hyperscaler adoption could be the tipping point for CXL memory pooling. Such large-scale adoption would encourage CXL hardware development and also make it easier for enterprises to burst CXL memory-pooled applications to the cloud, giving them access to potentially much larger memory pools than they could afford themselves.

A note about CXL and storage-class memory: Simon Thompson of Lenovo has written: “We eagerly await the arrival of Compute Link Express (CXL) storage class systems!”