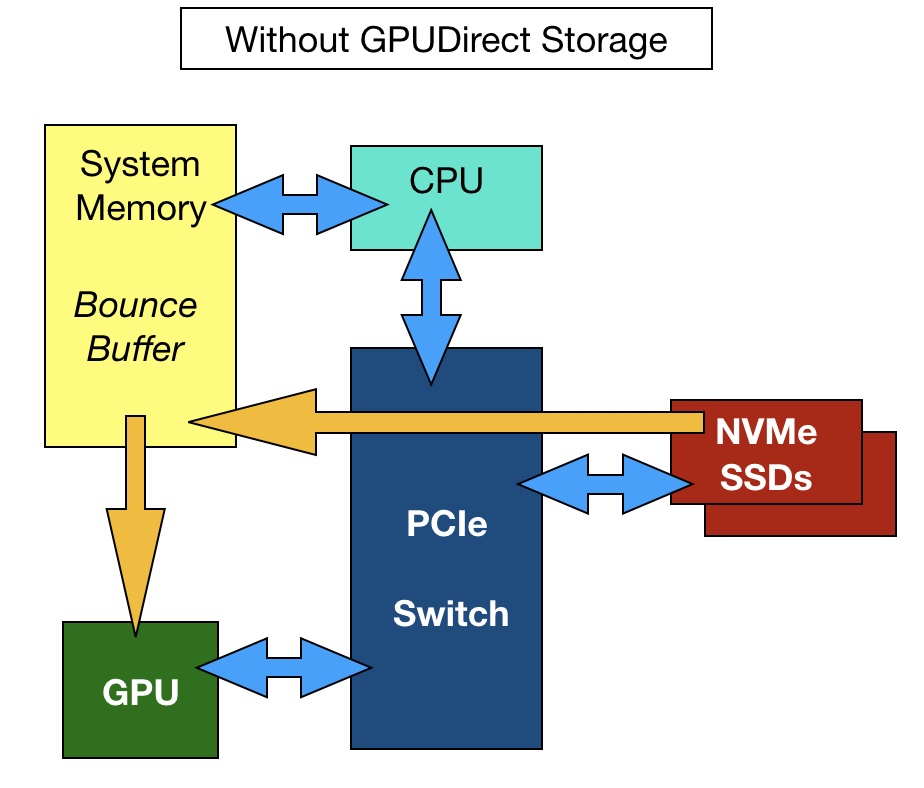

GPUDirect – Nvidia’s GPUDirect enables DMA (direct memory access) between GPU memory and NVMe storage drives. Typically, data transfers to a GPU are controlled by the host server’s x86 CPU: data flows from storage attached to the host server into the server’s DRAM, and then out across the PCIe bus to the GPU. Nvidia says this process becomes IO-bound as data transfers increase in number and size, and GPU utilisation falls while the GPU waits for data to crunch.

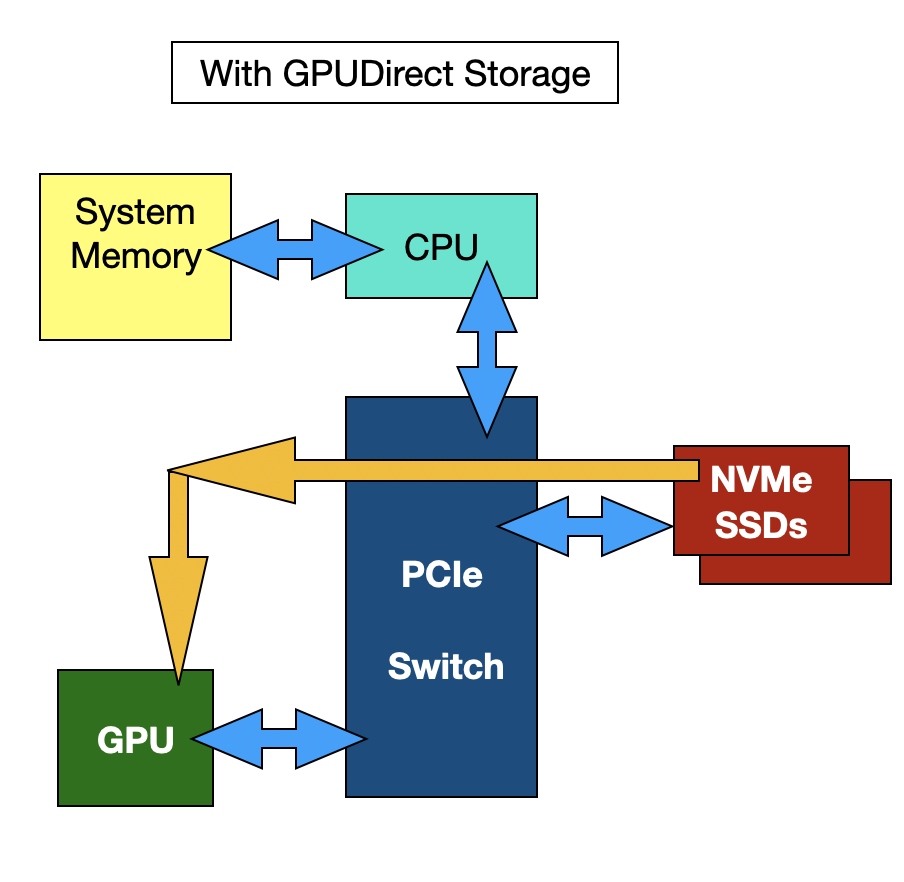

With the GPUDirect architecture, the host server’s CPU and DRAM are no longer in the data path, so the IO path between storage and the GPU is shorter and faster. The storage drives may be direct-attached, or external and accessed via NVMe-over-Fabrics.
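
To make the difference between the two paths concrete, here is a toy model in Python. It is only a sketch: it assumes each hop moves the whole payload before the next hop starts (no overlap or pipelining), and the bandwidth figures and the names `Hop` and `transfer_seconds` are hypothetical placeholders, not Nvidia measurements or part of any Nvidia API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hop:
    name: str
    gbytes_per_s: float  # hypothetical bandwidth, not a measured figure


def transfer_seconds(size_gb, hops):
    """Store-and-forward model: every hop copies the full payload in turn."""
    return sum(size_gb / hop.gbytes_per_s for hop in hops)


# Conventional path: storage -> host DRAM -> PCIe -> GPU (two copies).
via_host = [
    Hop("storage -> host DRAM", 10.0),
    Hop("host DRAM -> GPU over PCIe", 20.0),
]

# GPUDirect-style path: one DMA transfer from storage into GPU memory.
direct = [Hop("storage -> GPU memory (DMA)", 10.0)]

size_gb = 100.0  # placeholder payload size
t_host = transfer_seconds(size_gb, via_host)
t_direct = transfer_seconds(size_gb, direct)
print(f"via host DRAM: {t_host:.1f} s, direct: {t_direct:.1f} s")
```

With these placeholder numbers, the staged path costs the sum of both copies while the direct path costs only the single storage-to-GPU transfer. Real systems overlap copies and contend for CPU and memory bandwidth, so the model shows the shape of the saving rather than its size.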