Larger enterprises are storing multiple petabytes of data already and many are heading towards storing exabytes. This presents significant economic and management challenges, according to Infinidat, the high-end storage array maker.

“Traditional hardware-based storage arrays are expensive, hard to manage, and orders of magnitude too small for the coming data age. They must, therefore, evolve into something new: software-defined on-premises enterprise storage clouds,” Infinidat proclaimed in a white paper published this month.

“The next generation of applications cannot be built on traditional enterprise infrastructure, because the economics and user experience required in the multi-petabyte enterprise are so radically different from what was offered in the past by traditional IT in the terabyte era.”

According to Infinidat, the solution is to build huge scale-out capability for storage, moving away from single storage arrays towards a large cluster or private cloud of arrays.

To accomplish this, Infinidat is developing the Elastic Data Fabric, a concept based on ‘Availability Zones’ and built atop InfiniBox array clusters.

Elastic Data Fabric will combine dozens to hundreds of storage systems across multiple on-premises data centres and data in public clouds into a single, global storage system scalable up to multiple exabytes.

It is based on Infinidat’s existing arrays and software, and the company will deliver elements of the Availability Zone framework in mid-2020. AZs pool storage with seamless data mobility inside a data centre and the public cloud, and offer near-infinite scalability, according to Infinidat.

Public Cloud influence

According to Infinidat, petabyte-scale computing “on premises is lower cost than in the public cloud,” but customers need public cloud attributes when handling such large amounts of storage. In its white paper, the company lists the user requirements:

- Fast, reliable, virtualised data storage on demand that is API-driven and seemingly infinite in supply. Think in terms of regions and availability zones, not arrays and filers

- Storage services must be tightly integrated with compute tiers such as VMware and Kubernetes

- Data must be secured 24×7

- Customer business units should not be subjected to change control procedures and other hindrances related to data migration activities executed by the cloud operator.

Infinidat aims to fulfil this brief, courtesy of the InfiniVerse management tool.

EDF control plane

InfiniVerse acts as the control and data plane for Elastic Data Fabric. The software clusters up to 100 InfiniBoxes per data centre into a scale-out storage cloud.

Individual arrays, delivered in racks, are invisible to end-users in an availability zone. All storage objects including pools, volumes, and file systems are provisioned at the AZ level. AZs support heterogeneous InfiniBox configurations, such as capacity and performance-optimised racks, and multiple generations of InfiniBox hardware.

Data placement and mobility decisions within the cluster can be executed manually or by an AI agent that monitors and automatically responds to quality of service policy compliance. This is all controlled and monitored by InfiniVerse’s UI and REST API.
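The idea of provisioning against an availability zone rather than an individual array can be illustrated with a toy model. This is a minimal sketch and not Infinidat's software or API: the `Rack` and `AvailabilityZone` classes, the `provision` method, and the "most free space" placement rule are all hypothetical, chosen only to show how a pool can hide the racks behind it.

```python
from dataclasses import dataclass, field


@dataclass
class Rack:
    """One array in the zone (hypothetical model, not an Infinidat object)."""
    name: str
    capacity_tb: float
    used_tb: float = 0.0

    @property
    def free_tb(self) -> float:
        return self.capacity_tb - self.used_tb


@dataclass
class AvailabilityZone:
    """Pools racks so callers provision against the zone, never a rack."""
    racks: list[Rack]
    # Maps volume name -> rack name; internal bookkeeping the caller never needs.
    placements: dict[str, str] = field(default_factory=dict)

    @property
    def free_tb(self) -> float:
        return sum(rack.free_tb for rack in self.racks)

    def provision(self, volume: str, size_tb: float) -> None:
        # Assumed placement policy: choose the rack with the most free space.
        target = max(self.racks, key=lambda rack: rack.free_tb)
        if target.free_tb < size_tb:
            raise ValueError(f"no single rack can hold {size_tb} TB")
        target.used_tb += size_tb
        self.placements[volume] = target.name


# Racks of different sizes in one zone, echoing the heterogeneous configurations.
az = AvailabilityZone([Rack("rack-a", 1000), Rack("rack-b", 4000)])
az.provision("vol1", 500)
print(az.free_tb)            # 4500.0
print(az.placements["vol1"])  # rack-b
```

The caller names only the zone and the volume; which rack holds the data is an internal decision that a policy engine could later revise by moving the volume.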

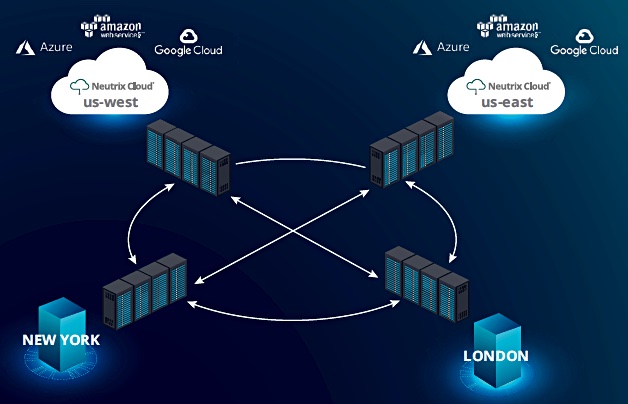

An Infinidat EDF diagram shows Elastic Data Fabric embracing multiple geographically separate data centres.

AZs are defined as operational within data centres, and as yet there is no management facility to handle multiple AZs across data centres. It seems reasonable to infer that Infinidat will add this capability in due course.

EDF components

Six Elastic Data Fabric components are available today:

- InfiniBox arrays

- Neutrix Cloud – InfiniBoxes in a data centre connected directly to public cloud operators

- InfiniBox FLX – InfiniBox as a service

- InfiniVerse – SaaS storage monitoring and analytics, and non-disruptive box-to-box data mobility

- InfiniGuard data protection

- InfiniGuard FLX – InfiniGuard as a service

Two components are planned for mid-2020:

- InfiniVerse storage AZs

- InfiniVerse transparent data mobility (TDM) with no server or application awareness

Data mobility

Elastic Data Fabric scales as data sets grow or temporary workloads are created, and shrinks as they retire, morph, or move closer to the applications and users over time, Infinidat said.

There is no need for data migration change control procedures due to hardware refresh.

Non-disruptive data mobility (NDM) is live today and enables workload migration from one InfiniBox to another without application downtime. It uses InfiniBox Active/Active replication and Host Power Tools to provide a scriptable data migration process between any two InfiniBox systems within metro distance.

Transparent data mobility (TDM) will provide workload mobility from rack to rack within an Availability Zone, with no downtime and no involvement or awareness at server level. It will use a distributed peer-to-peer function on the target port drivers and will not need in-band virtualisation appliances.

Exabyte-scale physical SAN versus hyperconverged system

Infinidat provides shared external storage arrays accessed by applications running in connected servers. This contrasts with the hyperconverged approach which combines servers and storage in connected nodes running as a virtual SAN. There can be compute and storage nodes in such a scheme but the virtual SAN concept is still used.

We note that an InfiniBox F6300 has up to 4PB of usable capacity and up to 10PB of effective capacity, after data reduction. A hundred systems means an AZ could have up to 400PB usable and 1,000PB effective capacity.

We think storing 400PB usable capacity in a hyperconverged system would be a huge challenge. With Nutanix, for example, this would require at least 5,000 NX-6035C-G5 storage-only nodes, each with 80TB raw capacity. And you would still need compute nodes, resulting in a substantial system management task to handle multiple thousands of nodes.

That is a different order of complexity compared with handling 100 InfiniBox arrays as an entity.
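The capacity comparison above is simple arithmetic, and a short sketch makes it easy to rerun with other figures. The per-system numbers are the ones quoted in this article; the decimal conversion of 1PB = 1,000TB and the function names are assumptions made for illustration. Because the Nutanix figure is raw capacity, the node count is a lower bound.

```python
import math

# Figures quoted in the article.
USABLE_PB_PER_INFINIBOX = 4       # InfiniBox F6300, "up to"
EFFECTIVE_PB_PER_INFINIBOX = 10   # after data reduction, "up to"
INFINIBOXES_PER_AZ = 100
RAW_TB_PER_HCI_NODE = 80          # NX-6035C-G5 storage-only node

TB_PER_PB = 1000  # assumption: decimal units


def az_capacity_pb(per_system_pb: float, systems: int) -> float:
    """Aggregate capacity of an availability zone, in PB."""
    return per_system_pb * systems


def hci_nodes_needed(target_pb: float, raw_tb_per_node: float) -> int:
    """Lower bound on node count: raw capacity is treated as fully usable."""
    return math.ceil(target_pb * TB_PER_PB / raw_tb_per_node)


usable_pb = az_capacity_pb(USABLE_PB_PER_INFINIBOX, INFINIBOXES_PER_AZ)
effective_pb = az_capacity_pb(EFFECTIVE_PB_PER_INFINIBOX, INFINIBOXES_PER_AZ)
nodes = hci_nodes_needed(usable_pb, RAW_TB_PER_HCI_NODE)

print(usable_pb)     # 400
print(effective_pb)  # 1000
print(nodes)         # 5000
```

On these numbers, one availability zone replaces a management domain of 100 arrays with one of at least 5,000 nodes, a ratio of 50 to 1 before any compute nodes are counted.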