Astera Labs is sampling CXL-connected memory expansion and pooling controllers with customers and strategic partners after interoperability testing with various CPUs, GPUs, and DRAM modules.

Compute Express Link (CXL), based on PCIe, is becoming an industry standard for connecting a host processor to external devices whose local memory needs to be integrated with the host’s memory.

It can therefore provide access to external memory and get around the bandwidth and capacity limits that multi-core host CPUs face with DRAM attached directly to the CPU socket. To do that it needs controller devices (FPGAs or ASICs) and other electronics that handle interconnect functions across the CXL physical link. Astera Labs is a startup building such controllers.

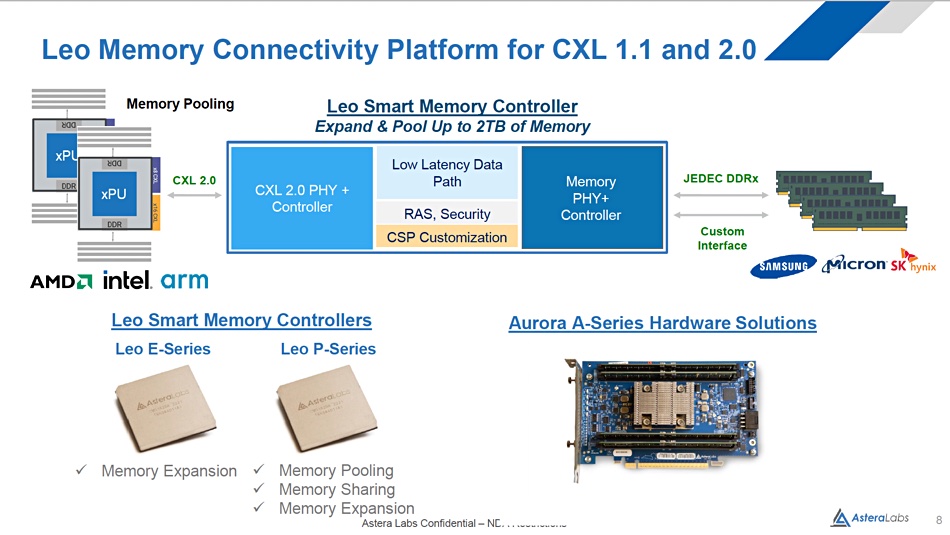

CEO Jitendra Mohan said in an announcement: “Our Leo Memory Connectivity Platform for CXL 1.1 and 2.0 is purpose-built to overcome processor memory bandwidth bottlenecks and capacity limitations in accelerated and intelligent infrastructure.”

Astera has developed two types of Leo Smart Memory Controllers and an Aurora A-Series add-in card (AIC) to carry them. The E-Series is a memory expansion controller while the P-Series provides memory pooling, sharing, and expansion.

The controllers implement the CXL memory protocol to enable a host CPU to access and manage CXL-attached memory in support of general-purpose compute, AI training and inference, in-memory databases, memory tiering, multi-tenant use cases, and other application-specific workloads.

Each controller supports up to 2TB of memory and up to 5600 MT/s per memory channel. This speed is required to fully utilize the bandwidth of the CXL 1.1 and 2.0 interfaces. CXL 1.1 supports memory expansion through a direct connection between the host and a memory expansion device. CXL 2.0 adds memory pooling and sharing by placing a CXL switch between the memory expansion device and multiple hosts.
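As a rough back-of-the-envelope check on why the memory channel speed matters, the Python sketch below compares the peak bandwidth of a 5600 MT/s memory channel with that of a CXL link. The 64-bit channel width, the x16 link width, and the function names are illustrative assumptions, not figures from Astera Labs. CXL 1.1 and 2.0 run over the PCIe 5.0 physical layer at 32 GT/s per lane with 128b/130b encoding, and the calculation ignores CXL protocol overhead.

```python
import math

def ddr_channel_gb_per_s(mt_per_s: float, bus_bytes: int = 8) -> float:
    """Peak bandwidth of one memory channel in GB/s.

    bus_bytes=8 assumes a 64-bit data bus (an assumption, not a Leo spec).
    """
    return mt_per_s * 1e6 * bus_bytes / 1e9

def cxl_link_gb_per_s(lanes: int, gt_per_s: float = 32.0) -> float:
    """Peak one-direction bandwidth of a PCIe 5.0-based CXL link in GB/s.

    Applies 128b/130b line encoding; ignores CXL flit/protocol overhead.
    """
    return gt_per_s * lanes * (128 / 130) / 8

def channels_to_fill_link(mt_per_s: float, lanes: int) -> int:
    """Smallest number of memory channels whose peak rate covers the link."""
    return math.ceil(cxl_link_gb_per_s(lanes) / ddr_channel_gb_per_s(mt_per_s))

if __name__ == "__main__":
    # x16 is an assumed link width chosen for illustration.
    print(f"One 5600 MT/s channel: {ddr_channel_gb_per_s(5600):.1f} GB/s")
    print(f"x16 CXL link, one direction: {cxl_link_gb_per_s(16):.1f} GB/s")
    print(f"Channels needed to fill it: {channels_to_fill_link(5600, 16)}")
```

Under these assumptions a single 5600 MT/s channel peaks at about 44.8 GB/s, well short of the roughly 63 GB/s an x16 link can carry in one direction, so more than one fast channel is needed behind the controller to keep the link busy.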

Astera Labs’ Leo devices support the standard JEDEC DDR DRAM interface and other vendor-specific interfaces for more flexibility. The company says its Leo controllers have been developed in partnership with leading processor and memory vendors, strategic cloud customers, system OEMs, and the CXL Consortium to ensure they meet those partners’ specific requirements and interoperate across the ecosystem.

The Leo controllers support end-to-end data path security and have telemetry features and software APIs for fleet management to make it easier to manage, debug, and deploy them at scale.

The CXL semiconductor plumbing is being put in place so that CXL-supporting processors, such as Intel’s coming Sapphire Rapids Xeons, have a way to use CXL-connected memory. When those processors arrive, the plumbing should be ready and waiting for them, and customers should be able to enjoy big memory computing with less time-wasting storage IO.

Find out more about the Leo controllers and Aurora AIC here. SemiAnalysis has more detailed information as well.