It would be great if software could automatically detect incoming data of a specific type and route it to the right analysis and processing routines to present up-to-date information to workers. Komprise has released Smart Data Workflows that it says can do just this.

Manually setting up a processing workflow to inspect incoming unstructured data, pick out significant parts of it, and then send it on to analytic processes is time-consuming and complex. There’s always the option of hiring coders to customize software to do this, but Komprise’s pitch is that its Intelligent Data Management Platform software, with its no-code style functionality, makes the workflow admin job far less onerous.

Jay Smestad, senior director of information technology at PacBio, said: “Komprise has [already] delivered a rapid way to visualize our petabytes of instrument data and then automate processes such as tiering and deletion for optimal savings. Now, the ability to automate workflows so we can further define this data at a more granular level and then feed it into analytics tools to help meet our scientists’ needs is a game changer.”

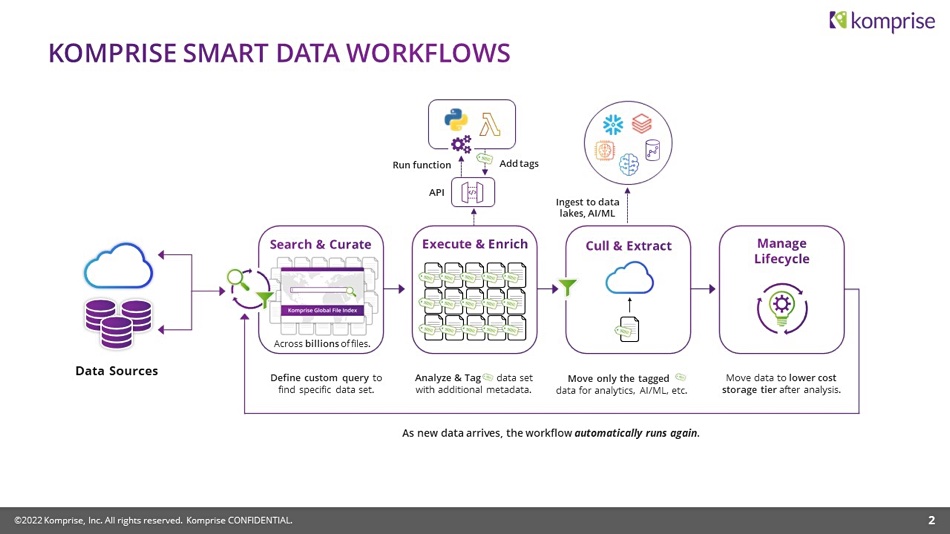

The basic idea is to scan and tag incoming data, then use these metadata tags to select specific data for automatic delivery either to analysis routines for instant processing or to data lakes for after-the-event processing. It is an extension of the tiering in Komprise’s existing lifecycle management software, which selects data based on age and access rate and moves it to lower, cheaper storage tiers. Further metadata tags can be added based on an analysis of the incoming data, for example to mark data relevant to coronavirus processing, genomics research, daily/weekly/monthly sales analysis, or production machinery operations.

Then data can be selectively found by looking for these tags and moved on to the next stage of its progress through an organization’s IT systems. But more than this, next-stage processing can be automated as well with function calls.
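The tag-then-select pattern described above can be sketched in a few lines of Python. This is only an illustration of the idea, not Komprise's API: `FileRecord`, `tag_records`, `select_by_tag`, `sales_rule`, and `analyze` are hypothetical names, and the in-memory list stands in for a real file index.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable


@dataclass
class FileRecord:
    """Hypothetical stand-in for one entry in a file/object index."""
    path: str
    age_days: int
    tags: dict[str, str] = field(default_factory=dict)


def tag_records(records: Iterable[FileRecord],
                rule: Callable[[FileRecord], dict[str, str]]) -> None:
    """Apply a tagging rule to every record, merging any tags it returns."""
    for rec in records:
        rec.tags.update(rule(rec))


def select_by_tag(records: Iterable[FileRecord],
                  key: str, value: str) -> list[FileRecord]:
    """Return only the records carrying the given key/value tag."""
    return [r for r in records if r.tags.get(key) == value]


def sales_rule(rec: FileRecord) -> dict[str, str]:
    """Illustrative rule: tag anything stored under a sales directory."""
    return {"dataset": "weekly-sales"} if "/sales/" in rec.path else {}


def analyze(batch: list[FileRecord]) -> int:
    """Placeholder for the next-stage process; here it just counts files."""
    return len(batch)


records = [
    FileRecord("/share/sales/2022-w07.csv", age_days=3),
    FileRecord("/share/plant/line4/sensor.log", age_days=1),
    FileRecord("/share/sales/2021-w50.csv", age_days=70),
]

tag_records(records, sales_rule)                       # scan and tag
selected = select_by_tag(records, "dataset", "weekly-sales")  # select by tag
processed = analyze(selected)                          # automated next stage
```

The point of the sketch is that once tags exist, selection and the hand-off to the next stage are both simple function calls that can be chained without manual steps.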

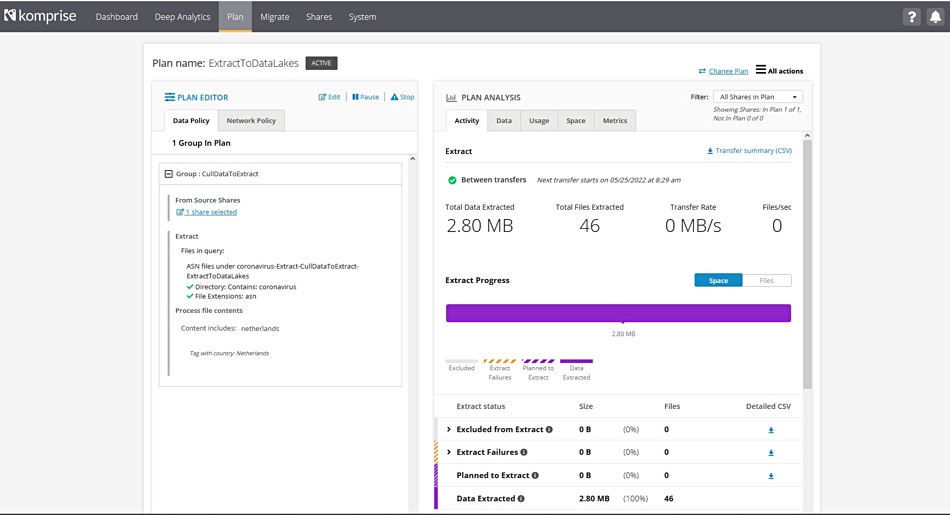

Komprise Smart Data Workflows discovers relevant file and object data across cloud, edge, and on-premises datacenters, then feeds that data in native format to AI and machine learning (ML) tools and data lakes, the company says. It uses Komprise’s Deep Analytics Queries and Actions for copy and confine operations, expands global tagging and search, and adds the ability to execute external functions, such as running natural language processing functions via API on selected datasets.

Smart Data Workflows functionality is generic and can apply to life sciences, financial markets, legal processes, autonomous vehicle development, and more. Here is a genomics example from a Komprise blog:

- Search: Define and execute a custom query across on-premises, edge and public cloud data silos to find all data for Project X with Komprise Deep Analytics and its Global File Index

- Execute an external function on Project X data to look for a specific DNA sequence for a mutation and tag such data as “Mutation XYZ”

- Move only Project X data tagged with “Mutation XYZ” to the cloud using Komprise Deep Analytics Actions for processing there

- Move the data to a lower storage tier for cost savings once the analysis is complete
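The four steps above can be modelled as a small pipeline. The sketch below is a toy under stated assumptions: it does not call any Komprise interface, `IndexedFile`, `search`, `find_mutation`, `move`, and `run_workflow` are hypothetical names, a substring match stands in for the external DNA-sequence function, and "moving" a file only updates a field in memory.

```python
from dataclasses import dataclass, field


@dataclass
class IndexedFile:
    """Hypothetical index entry; `content` stands in for sequence data."""
    path: str
    project: str
    content: str
    location: str = "on-prem"
    tags: set[str] = field(default_factory=set)
    history: list[str] = field(default_factory=list)


def search(index: list[IndexedFile], project: str) -> list[IndexedFile]:
    """Step 1: query the index for every file belonging to a project."""
    return [f for f in index if f.project == project]


def find_mutation(f: IndexedFile, motif: str) -> bool:
    """Step 2 helper: toy 'external function' using a substring match."""
    return motif in f.content


def move(f: IndexedFile, destination: str) -> None:
    """Record a move by updating the location and appending to history."""
    f.location = destination
    f.history.append(destination)


def run_workflow(index: list[IndexedFile], project: str,
                 motif: str, tag: str) -> list[IndexedFile]:
    hits = search(index, project)                    # 1. search
    for f in hits:                                   # 2. execute and tag
        if find_mutation(f, motif):
            f.tags.add(tag)
    tagged = [f for f in hits if tag in f.tags]
    for f in tagged:                                 # 3. move tagged data to cloud
        move(f, "cloud")
    # Cloud-side analysis would run here (omitted in this sketch).
    for f in tagged:                                 # 4. tier down afterwards
        move(f, "archive-tier")
    return tagged


index = [
    IndexedFile("/lab/x/run1.fa", "Project X", "ACGTTAGGCA"),
    IndexedFile("/lab/x/run2.fa", "Project X", "ACGTACGTAC"),
    IndexedFile("/lab/y/run1.fa", "Project Y", "TTAGGTTAGG"),
]

moved = run_workflow(index, "Project X", motif="TTAGG", tag="Mutation XYZ")
```

Only the Project X file containing the motif is tagged and moved. The Project Y file also contains the motif, but is never examined because the initial search excludes it, which mirrors the "move only the relevant data" intent of the workflow.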

An autonomous vehicle development use of Smart Data Workflows could involve finding crash test data related to abrupt stopping of a specific vehicle model and copying this data to the public cloud for further analysis. This would be accomplished by executing an external function to identify and tag data with `Reason = Abrupt Stop`, then moving only the relevant data to a public cloud data lakehouse.

Examples of external services whose functions can be called include Snowflake, Amazon Macie, and Azure Machine Learning.

Komprise says it is helping to automate repetitive and complex data workflows, from ingest through to automatically applying identifying descriptors (tags), selecting subsets of data using these tags, and executing processes on the selected data. As a result, the time between data arrival (ingest) and having analysis results ready for inspection and use can be shortened.

Komprise CEO Kumar Goswami said: “Whether it’s massive volumes of genomics data, surveillance data, IoT, GDPR or user shares across the enterprise, Komprise Smart Data Workflows orchestrate the information lifecycle of this data in the cloud to efficiently find, enrich and move the data you need for analytics projects.”

Workflows can be set up through the Komprise GUI and then modified as needs dictate. Goswami’s company is making it much easier to manage and move massive volumes of unstructured data for faster analysis as well as for the traditional virtues of cost reduction and compliance. Komprise has more on the product in its blog.