Object storage powerhouse MinIO has decided to use Apache Arrow to get its stored data into GPUs rather than Nvidia’s GPUDirect scheme.

MinIO founder and CEO AB Periasamy thinks that GPUDirect is a poor idea. GPUDirect avoids having server CPUs copy data from storage drives into host DRAM, the so-called bounce buffer, before it is sent on to GPUs. Also, Nvidia’s GPUDirect is a custom connector, and there are GPUs out there other than Nvidia’s.

He told us: “Long ago, I fell for such tricks and added IB-verbs RDMA in GlusterFS to only prove the point that it can be implemented quite easily. Even during the early days of MinIO, we experimented with MNM (MinIO NVMe Memory) over RDMA and abandoned it.”

Why was that? “I see no point in implementing GPUDirect because, in almost all of MinIO’s AI/ML high-performance deployments, the real bottleneck is either the 100GbitE network or the NVMe drives and definitely not bounce buffers. Besides, GPUDirect Storage is a poorly thought-out design.”

He thinks vendors using RDMA (Remote Direct Memory Access) and/or NVMe over Fabrics (NVMeoF) have made the wrong choice. “These HPC filesystem startups never fail to amaze me. They should learn from Lustre and Panasas history books.

“RDMA repeatedly failed storage startups. SRP, iSER, NFSoRDMA, and recently NVMeoF RDMA all failed against TCP-based alternatives. You can also add GPU Direct Storage to this list. NVMe raw block storage interface with a control channel for metadata is terribly complicated for the AI/ML community.”

Arrow

He thinks a better idea is to use Arrow. “In today’s times, raw TCP/IP sockets are considered old-school. AI/ML workloads and large-scale databases in the cloud are built on object storage over RESTful and gRPC (Google Remote Procedure Call) APIs. A meaningful development in this space for in-memory computing and zero-copy data transfers is Apache Arrow.”

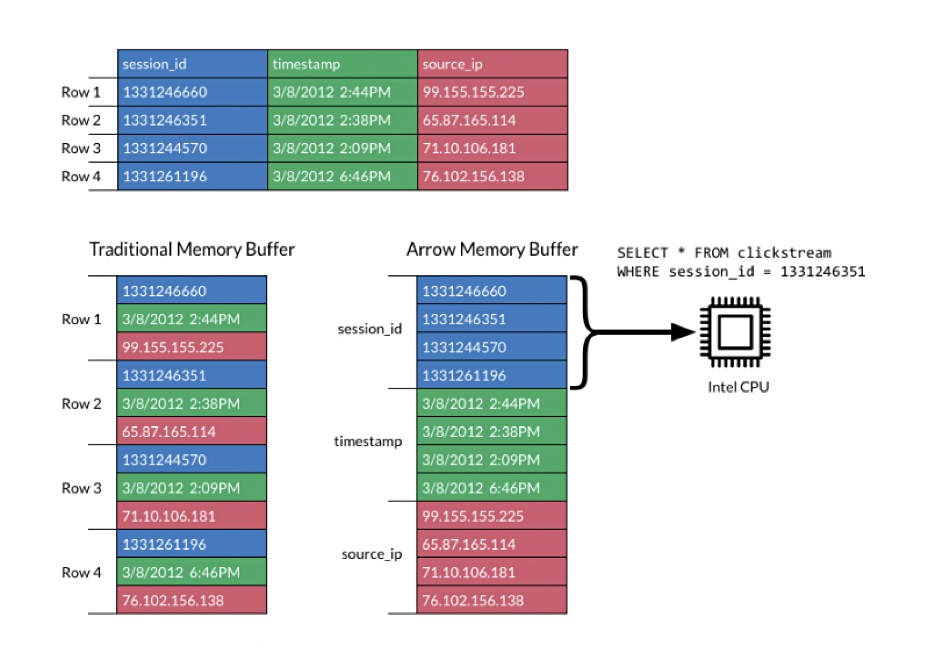

Arrow defines a contiguous, columnar in-memory format for representing table-like datasets, which can include nested and user-defined data types. It’s said to be designed with the needs of analytical database systems and data frame libraries in mind, and is language agnostic.

The contiguous format enables vectorisation and the use of Single Instruction, Multiple Data (SIMD) operations. In MinIO’s view, the use of Arrow as a standard format obviates the need for custom GPU connectors like GPUDirect.
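To make the layout idea concrete, here is a minimal sketch using only the Python standard library. It is not Arrow itself and not MinIO code; the field names (`sensor_id`, `reading`) and values are made up for illustration. It contrasts a row-oriented list of records with a column-oriented layout in which each field sits in one contiguous, fixed-width buffer, the property that lets a column be scanned or handed to another consumer without copying.

```python
from array import array

# Row-oriented layout: each record is a separate Python object, so the
# values of a single field are scattered across memory.
rows = [
    {"sensor_id": 1, "reading": 0.5},
    {"sensor_id": 2, "reading": 1.5},
    {"sensor_id": 3, "reading": 2.0},
]

# Column-oriented layout: each field becomes one contiguous buffer of
# fixed-width values (hypothetical columns, for illustration only).
columns = {
    "sensor_id": array("q", (r["sensor_id"] for r in rows)),  # signed 64-bit ints
    "reading": array("d", (r["reading"] for r in rows)),      # 64-bit floats
}

# A memoryview exposes the column's underlying bytes without copying them.
readings = memoryview(columns["reading"])

# Slicing a memoryview is also zero-copy: `tail` refers to the same buffer.
tail = readings[1:]

# Because no copy was made, a write to the column is visible through the view.
columns["reading"][2] = 4.0
total = sum(tail)  # sums the last two readings straight from the shared buffer
```

Arrow applies the same principle with a formally specified, language-independent layout, so a library in another language can consume the same buffers.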

A blog by MinIO technology evangelist Ravishankar Nair discusses how the AI/ML community can use Arrow with MinIO. “Arrow uses memory mapped I/O and avoids serialisation/deserialisation overheads when you convert between most of the formats while leveraging the columnar data format.”

Arrow is a generic standard rather than one customised to a single GPU vendor and, MinIO says, it enables data lake data to travel across systems without incurring any “conversion” time, putting less strain on the system than GPUDirect.