ScaleFlux has launched a product suite based on its third-generation computational storage hardware, built around its own system-on-chip (SoC) with up to eight Arm cores.

Computational storage puts compute directly into drives so that data can be processed in situ without having to be moved from the drive to a server host’s main memory for processing. This saves time and frees up host CPU cycles for other work.
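To make the data-movement argument concrete, here is a toy model in plain Python. It is not ScaleFlux code and uses no ScaleFlux API. The `Record` type, the two filter functions and the workload (1,000 records of 4KB, 1 percent of which match) are all hypothetical. The model simply counts the payload bytes that would cross the drive-to-host link when the host filters the data itself, compared with when the same filter runs on the drive.

```python
from dataclasses import dataclass

@dataclass
class Record:
    key: int
    payload: bytes

def host_side_filter(records, predicate):
    """Conventional path: every record crosses the link to host memory, then the host CPU filters."""
    bytes_moved = sum(len(r.payload) for r in records)
    return [r for r in records if predicate(r)], bytes_moved

def in_drive_filter(records, predicate):
    """Modelled computational-storage path: the drive filters, so only matching records cross the link."""
    selected = [r for r in records if predicate(r)]
    return selected, sum(len(r.payload) for r in selected)

# Hypothetical workload: 1,000 records of 4KB each, 1 percent of which match.
records = [Record(k, bytes(4096)) for k in range(1000)]
wanted = lambda r: r.key % 100 == 0

host_result, host_bytes = host_side_filter(records, wanted)
drive_result, drive_bytes = in_drive_filter(records, wanted)

assert host_result == drive_result            # same answer either way
print(host_bytes, drive_bytes)                # 4096000 40960
```

The in-drive path moves 1 percent of the bytes only because the predicate selects 1 percent of the records; real savings depend entirely on how selective a workload's filter is.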

Hao Zhong, ScaleFlux co-founder and CEO, said in a statement: “With this new suite of products, we are making it easier than ever for organisations to adopt and realise the benefit of Computational Storage at scale.”

Mohamed Awad, VP of IoT and embedded at Arm, said: “ScaleFlux’s new product suite will open up opportunities for software and system developers to deploy computational storage across a range of new applications from machine learning to edge computing.”

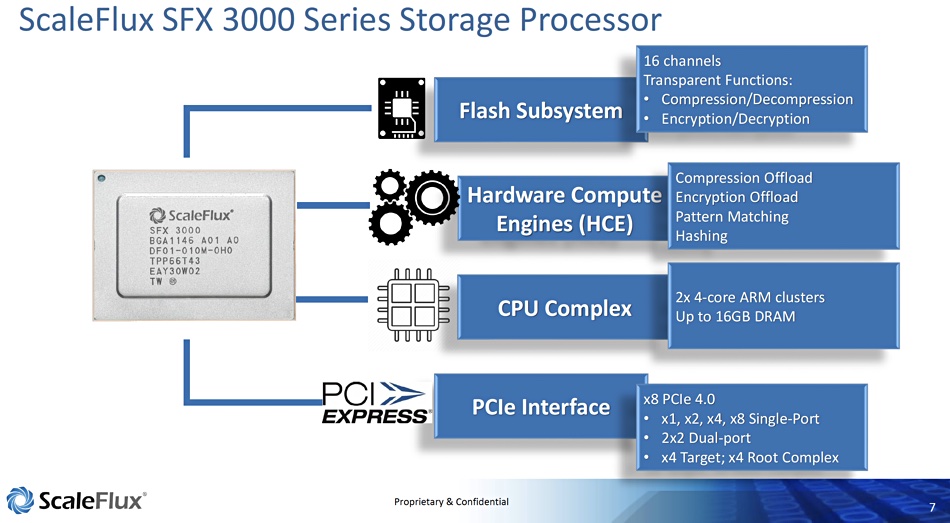

The company introduced its CSD 2000, which used an FPGA as its processing unit, in May. We now have the SFX 3000 SoC, which took three years to develop and is also available to other drive vendors as an SoC-plus-firmware package.

This SoC has eight Arm Cortex-A53 cores and 16GB of DRAM, plus hardware engines for compression, encryption, hashing and pattern matching. Its PCIe Gen 4 interface supports x1, x2 and x4 dual-port configurations and an x8 single-port configuration. An optional co-processor or FPGA can be added.

The SFX 3000 products

ScaleFlux is using it in three products:

- CSD 3000 NVMe computational storage drives;

- CSP 3000 computational storage processing system with no on-board flash storage;

- NSD 3000 NVMe SSDs.

All three have NVMe drivers and encryption.

The CSD 3000 can, ScaleFlux claims, cut storage costs by a factor of three, double application performance and increase flash endurance by as much as 9x compared with ordinary SSDs. Customers can also deploy application-specific, distributed compute functions by programming up to four of the SoC's Arm cores.

It comes in U.2, E1.x and add-in card (AIC) form factors with 4, 8 and 16TB raw capacities based on TLC or QLC NAND. Compression can raise the effective capacity to as much as four times the raw figure. The CSD 3000 delivers up to 1.5 million sustained random read IOPS with four PCIe lanes and 2 million with eight, and up to 350,000 sustained random write IOPS with four lanes and 500,000 with eight. Read bandwidth is 7GB/sec with four lanes and 8GB/sec with eight.
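Two bits of arithmetic help in reading these figures. The sketch below is a back-of-envelope calculation, not anything published by ScaleFlux. It assumes effective capacity scales with the data's compression ratio up to the stated 4x ceiling (real results depend on how compressible the data is), and it estimates the raw PCIe Gen 4 link rate from the standard 16 GT/s per-lane signalling and 128b/130b encoding, ignoring packet and protocol overhead. The function names are illustrative.

```python
# Back-of-envelope arithmetic for the CSD 3000 figures quoted above.
# Assumption: effective capacity = raw capacity x compression ratio, capped at 4x.
MAX_EXPANSION = 4.0          # "up to four times" effective capacity, per the article
RAW_CAPACITIES_TB = (4, 8, 16)

def effective_capacity_tb(raw_tb: float, compression_ratio: float) -> float:
    """Logical capacity for data that compresses at `compression_ratio`, capped at 4x raw."""
    return raw_tb * min(compression_ratio, MAX_EXPANSION)

def pcie_gen4_link_gb_per_s(lanes: int) -> float:
    """Approximate raw PCIe Gen 4 bandwidth in GB/s: 16 GT/s per lane,
    128b/130b line encoding, 8 bits per byte; packet/protocol overhead ignored."""
    return lanes * 16 * (128 / 130) / 8

for raw in RAW_CAPACITIES_TB:
    # 2:1 compressible data vs. highly compressible data that hits the 4x cap
    print(f"{raw}TB raw -> {effective_capacity_tb(raw, 2.0):.0f}TB at 2:1, "
          f"{effective_capacity_tb(raw, 5.0):.0f}TB at the 4x cap")

for lanes, quoted_read in ((4, 7.0), (8, 8.0)):
    link = pcie_gen4_link_gb_per_s(lanes)
    print(f"x{lanes}: link ~{link:.2f}GB/s, quoted read {quoted_read}GB/s "
          f"({quoted_read / link:.0%} of link)")

# Random-read IOPS scale by 2.0M / 1.5M when doubling lanes, i.e. about 1.33x, not 2x.
print(f"read IOPS scaling x4 -> x8: {2_000_000 / 1_500_000:.2f}x")
```

On these assumptions a four-lane link tops out near 7.9GB/sec, so the 7GB/sec four-lane read figure sits close to the link limit, while the 8GB/sec eight-lane figure uses roughly half of a link of about 15.8GB/sec.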

Latency is under 10μs for random writes and under 70μs for reads.

The CSP 3000 has the SoC’s compression, encryption, and programmable functions but not the CSD 3000’s flash storage. It is an accelerator-only product and all eight Arm cores are available for programmed functions.

The NSD 3000 is claimed to deliver twice the endurance of other NVMe drives and twice their performance on random-write and mixed read/write workloads. With a 3:1 compression ratio it can provide up to 9x the endurance of a standard NVMe SSD. It has no programmable Arm cores and no hardware acceleration engines.
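Part of the endurance claim follows from simple arithmetic: if data is compressed 3:1 before it reaches flash, the NAND is programmed with only a third of the bytes the host writes. The sketch below models that, with write amplification (extra flash writes from garbage collection) as a free parameter. The article does not say how ScaleFlux arrives at its 9x figure, so the model makes no attempt to reproduce it; the function names and the write-amplification parameter are illustrative assumptions, not ScaleFlux's method.

```python
def flash_bytes_written(host_bytes: float, compression_ratio: float,
                        write_amplification: float = 1.0) -> float:
    """Bytes actually programmed to NAND for `host_bytes` of host writes.
    Compression shrinks the data before it reaches flash; write amplification
    (garbage-collection overhead) then multiplies it back up."""
    return host_bytes * write_amplification / compression_ratio

def endurance_multiplier(compression_ratio: float,
                         wa_uncompressed: float,
                         wa_compressed: float) -> float:
    """Host-write endurance relative to an uncompressed drive with the same flash:
    flash wear per host byte on the plain drive divided by that on the compressing drive."""
    plain = flash_bytes_written(1.0, 1.0, wa_uncompressed)
    compressed = flash_bytes_written(1.0, compression_ratio, wa_compressed)
    return plain / compressed

# 3TB of host writes at 3:1 compression puts about 1TB on the flash.
print(flash_bytes_written(3e12, 3.0))
# Same write amplification on both drives: the gain comes from compression alone (about 3x).
print(endurance_multiplier(3.0, 2.0, 2.0))
```

With equal write amplification on both drives, 3:1 compression alone yields about a 3x endurance gain; any claimed gain beyond that would have to come from factors this simplified model leaves as parameters.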

The four products are accompanied by CSware software, which includes a software RAID function, “KallaxDB” key-value store software and sample B-tree optimisation code. Other CSware applications are in development and are expected to be open-sourced.

ScaleFlux’s four new products are available in beta today and will be generally available in Spring 2022.

Comment

An edge IT environment could use the CSD 3000 to augment the main system processor and, thanks to compression, store up to four times as much data as an SSD of the same raw capacity, reducing physical space requirements. In a video capture application, for example, the drive’s processors and software could scan, filter and analyse video content, enabling the edge system to make decisions based on the results without human intervention or transmission to a central site.

ScaleFlux is working with hyperscaler service providers and others to develop applications that use its new products. Indeed, product development has been directly influenced by feedback from such potential customers. We can expect to hear more about applications using these drives.