Rockport Networks has exited stealth with a switchless datacentre network product that it claims can carry data traffic faster and with better latency than switched networks, yet is a drop-in replacement for Ethernet and InfiniBand cabling and switches with no application software changes.

It says switch-based networks are reaching a dead-end of increased complexity and cost. Matt Williams, Rockport’s CTO, said in a statement: “When the root of the problem is the architecture, building a better switch just didn’t make sense. With sophisticated algorithms and other purpose-built software breakthroughs, we have solved for congestion, so our customers no longer need to just throw bandwidth at their networking issues. We’ve focused on real-world performance requirements to set a new standard for what the market should expect for the fabrics of the future.”

Doug Carwardine, Rockport Networks CEO and co-founder, said: “Rockport was founded on the fact that switching, and networking in general, is extremely complicated. … We made it our mission to get data from a source to a destination faster than other technologies. Removing the switch was crucial to achieve significant performance advantages in an environmentally and commercially sustainable way.”

How it works

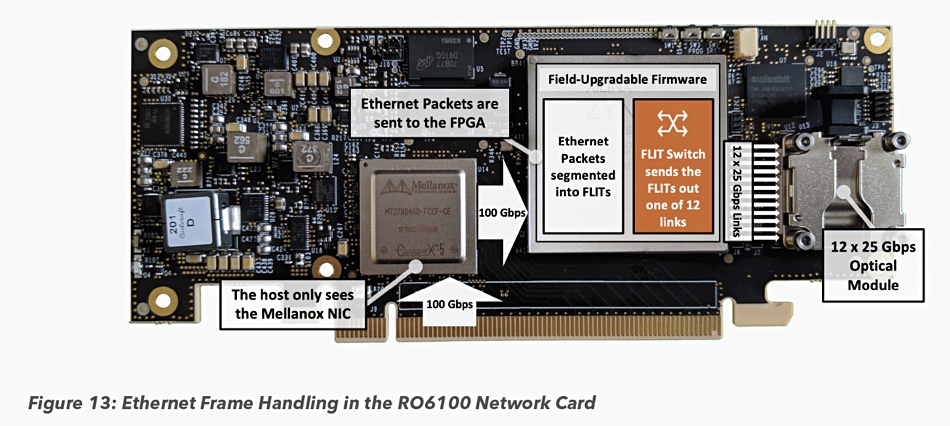

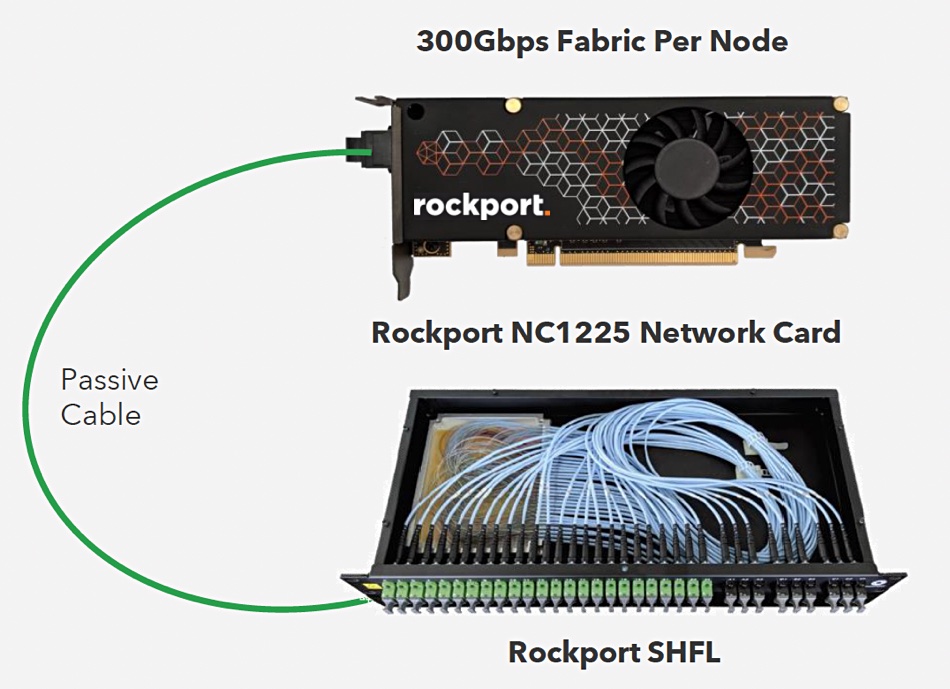

Network switch functionality has been distributed to intelligent endpoint network cards — most definitely not called NICs or even SmartNICs — which have an FPGA and connect up to 24 endpoints to a dedicated 1U SHFL (pronounced “shuffle”) optical device using passive cabling. SHFLs need no power or cooling and can be linked together to scale out the network. Ethernet and InfiniBand traffic can be carried over the Rockport network. The network cards are standard, low-profile half height, half length (HHHL) cards fitting in a PCIe slot.

CTO Matt Williams told us: “People can remove their Ethernet NICs and InfiniBand cards, use the Rockport Network Card, and it just works.”

The Network Card contains a Mellanox ConnectX unit, and that’s what Ethernet and InfiniBand-using applications in servers and other devices see: a Mellanox ConnectX-5 Ethernet NIC, providing verbs (RoCE) and sockets (TCP/UDP) API support. In effect Rockport provides OSI layer 1 and 1.5 networking. As Williams explains: “We transport Ethernet frames but don’t switch them.”

Traffic is divided into small chunks called FLITS by the network card’s Rockport Network Operating System (rNOS) software. These are interleaved and transmitted together so that large frame or packet network transfers don’t hold up smaller ones, eliminating the noisy neighbour problem and resulting tail latency. Rockport’s NC1225 network card has 300Gbit/sec bandwidth and enables each endpoint or node to be connected to 12 other nodes.
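To make the interleaving idea concrete, here is a minimal Python sketch of chunking frames and sending the chunks round-robin. It is not Rockport's rNOS code: the 160-byte `FLIT_SIZE`, the function names and the round-robin policy are all assumptions chosen for illustration.

```python
from collections import deque
from typing import Deque, Dict, Iterator, List, Tuple

FLIT_SIZE = 160  # bytes; hypothetical value, not taken from Rockport documentation


def split_into_flits(frame: bytes, flit_size: int = FLIT_SIZE) -> List[bytes]:
    """Cut one frame into fixed-size chunks (the last chunk may be shorter)."""
    return [frame[i:i + flit_size] for i in range(0, len(frame), flit_size)]


def interleave(frames: Dict[str, bytes],
               flit_size: int = FLIT_SIZE) -> Iterator[Tuple[str, bytes]]:
    """Yield (frame_id, flit) pairs, taking one flit from each pending frame in turn."""
    pending: Deque[Tuple[str, Deque[bytes]]] = deque(
        (frame_id, deque(split_into_flits(data, flit_size)))
        for frame_id, data in frames.items()
        if data  # skip empty frames, which have no flits to send
    )
    while pending:
        frame_id, flits = pending.popleft()
        yield frame_id, flits.popleft()
        if flits:
            # This frame still has data, so it goes to the back of the queue.
            pending.append((frame_id, flits))
```

With a 9,000-byte frame and a 200-byte frame queued together, `interleave({"bulk": bytes(9000), "small": bytes(200)})` emits the small frame's two flits within the first four sent, rather than behind all 57 flits of the large frame.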

This switchless network is a fully distributed direct interconnect system based on supercomputer topologies. It supports small and large scale clusters with greater bandwidth efficiency and lower power requirements than a comparable traditional switching environment.

Network technology

We had a look at Rockport’s 6D torus-based networking technology back in 2015 and reported: “Its idea is that storage and server nodes in a network should be directly interconnected using a Direct Interconnect (torus mesh) of optical cables. A torus mesh is a geometric shape analogy of their interconnection scheme, being a ring with a circular cross-section and a hole inside the middle of the ring — think doughnut.”

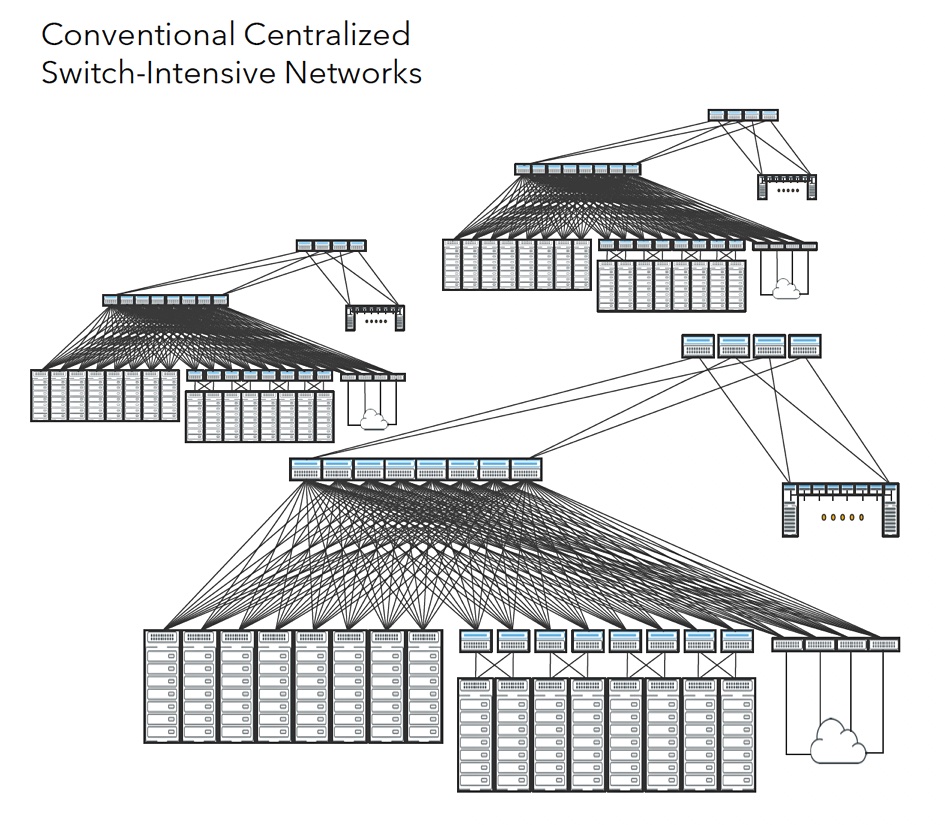
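The direct-connect arithmetic of a torus can be sketched in a few lines of Python. In a torus, every node sits on one ring per dimension and links to the node on either side of it in each ring, so a 6D torus gives each node 12 direct neighbours, which is consistent with the 12 node connections quoted for the NC1225. The function name and the ring sizes used here are hypothetical and do not describe Rockport's actual layout.

```python
from typing import List, Tuple

Coord = Tuple[int, ...]


def torus_neighbours(node: Coord, dims: Tuple[int, ...]) -> List[Coord]:
    """Return the nodes one hop from `node` in a torus whose ring sizes are `dims`."""
    neighbours = set()
    for axis, size in enumerate(dims):
        for step in (-1, 1):
            coord = list(node)
            # Modulo arithmetic wraps the ends of each ring around to each other.
            coord[axis] = (coord[axis] + step) % size
            neighbours.add(tuple(coord))
    # Rings of size 1 would point a node at itself; that is not a real link.
    neighbours.discard(node)
    return sorted(neighbours)
```

Calling `torus_neighbours((0, 0, 0, 0, 0, 0), (3, 3, 3, 3, 3, 3))` returns 12 distinct coordinates. Rings shorter than three nodes yield fewer, because the two directions then reach the same neighbour.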

This design favours east-west network traffic in a datacentre as much as north-south traffic, unlike a traditional switch-based network. Rockport says a hierarchical switch-based scheme rapidly becomes excessively complex as it scales.

It contrasts this with its relatively simple Network Card and SHFL scheme.

Network admins use the Rockport Autonomous Network Manager (ANM) user interface (UI) to configure, manage, and troubleshoot the Rockport Switchless Network. The ANM continuously captures the state of the network (health status, performance metrics, error counters, events, alarms, and the UI status) in a temporal (time-series) database. It stores 30 days of historical data, with the last seven days held at high fidelity and available from the timeline. This lets network administrators scroll back to the moment a problem occurred and troubleshoot it in context.
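The two retention windows can be expressed as a simple age check. The Python sketch below is an illustration only: the tier names are hypothetical, and it assumes that samples older than seven days are kept at reduced fidelity and that anything older than 30 days is dropped, which Rockport has not spelled out.

```python
from datetime import datetime, timedelta

HIGH_FIDELITY_WINDOW = timedelta(days=7)   # from the article
RETENTION_WINDOW = timedelta(days=30)      # from the article


def retention_tier(sample_time: datetime, now: datetime) -> str:
    """Classify a sample by age; the tier names are hypothetical labels."""
    age = now - sample_time
    if age < timedelta(0):
        raise ValueError("sample_time is in the future")
    if age <= HIGH_FIDELITY_WINDOW:
        return "high_fidelity"
    if age <= RETENTION_WINDOW:
        return "reduced"   # assumption: older history is kept in coarser form
    return "expired"       # assumption: data past 30 days is discarded
```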

Nodes autonomously detect failed links and automatically avoid the impacted paths in real time at hardware speed. A downloadable Rockport technology primer document explains more.
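Routing around a dead link in a torus can be illustrated in software with a breadth-first search that skips failed links. This is a sketch under stated assumptions, not Rockport's mechanism, which the company says runs in the nodes at hardware speed. The function names are hypothetical and a small 2D torus is used to keep the example readable.

```python
from collections import deque
from typing import Deque, Dict, FrozenSet, List, Optional, Set, Tuple

Coord = Tuple[int, ...]
Link = FrozenSet[Coord]  # an undirected link, stored as an unordered pair of nodes


def neighbours(node: Coord, dims: Tuple[int, ...]) -> List[Coord]:
    """Nodes one hop away in a torus with ring sizes `dims`."""
    found: Set[Coord] = set()
    for axis, size in enumerate(dims):
        for step in (-1, 1):
            coord = list(node)
            coord[axis] = (coord[axis] + step) % size
            found.add(tuple(coord))
    found.discard(node)
    return sorted(found)


def shortest_path(src: Coord, dst: Coord, dims: Tuple[int, ...],
                  failed_links: Set[Link]) -> Optional[List[Coord]]:
    """Breadth-first search from src to dst that never crosses a failed link."""
    parent: Dict[Coord, Optional[Coord]] = {src: None}
    queue: Deque[Coord] = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            # Walk the parent pointers back to src to rebuild the path.
            path: List[Coord] = []
            current: Optional[Coord] = node
            while current is not None:
                path.append(current)
                current = parent[current]
            return path[::-1]
        for nxt in neighbours(node, dims):
            if nxt in parent or frozenset((node, nxt)) in failed_links:
                continue
            parent[nxt] = node
            queue.append(nxt)
    return None  # dst is unreachable with the given failures
```

In a 4x4 torus, the path from `(0, 0)` to `(1, 0)` is a single hop. Marking that link as failed with `{frozenset({(0, 0), (1, 0)})}` makes the search return a three-hop detour instead.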

Benefits

Rockport says that its users are seeing an average 28 per cent reduction in workload completion time because of its faster networking with lower and more consistent latency. It claims a one per cent improvement in workload completion times translates to approximately one per cent improvement in overall AI/ML/HPC cluster utilisation.

It also argues that eliminating centralised switches and using its scheme reduces power and resulting cooling requirements of network equipment by over 60 per cent, with overall datacentre energy savings of 12 per cent or better. Cabling is reduced by up to 75 per cent. The larger the infrastructure, the greater the savings in both material and personpower to install and manage networks.

Rockport says this means its gear can benefit customers with anything from a single switch to many.

Users

There are 396 Rockport Network Card nodes in production at TACC, the Texas Advanced Computing Centre, which is both a customer and a Rockport Centre of Excellence. TACC houses “Frontera”, the tenth most powerful supercomputer in the world, and Rockport’s switchless network is part of the system.

Dr Dan Stanzione, director of TACC and associate VP for research at UT-Austin, said: “We’re excited to work with innovative new technology like Rockport’s switchless network design. Our team is seeing promising initial results in terms of congestion and latency control. We’ve been impressed by the simplicity of installation and management. We look forward to continuing to test on new and larger workloads and expanding the Rockport Switchless Network further into our data centre.”

You can watch a video session with Stanzione here (but you might need to get a Vimeo login to do so).

Alastair Basden of DiRAC/Durham University and technical manager of the COSMA HPC Cluster, said: “Based on a 6D torus, we found the Rockport Switchless Network to be remarkably easy to setup and install. We looked at codes that rely on point-to-point communications between all nodes with varying packet sizes where — typically — congestion can reduce performance on traditional networks. We were able to achieve consistent low latency under load and look forward to seeing the impact this will have on even larger-scale cosmology simulations.”

Rockport background

Rockport was founded in 2012 by CEO Doug Carwardine, initial CTO and now Chief Scientist Dan Oprea, and COO Michael McLay. It has taken in just $18.8 million of VC, grant and debt financing according to Crunchbase, with about $15 million of that raised in 2019.

The company has 165 employees, up from around 80 a year ago, with 100 of them being engineers, and it has filed around 80 patents. It is talking to potential OEMs and tier-1 channel partners and we might expect announcements about partnerships in the future.