Dutch high-performance computing (HPC) researchers have reported a Fungible storage node delivering 6.55 million random read IOPS to a single server. Fungible claims this is the world’s highest performance between a single server reading data and a single storage target.

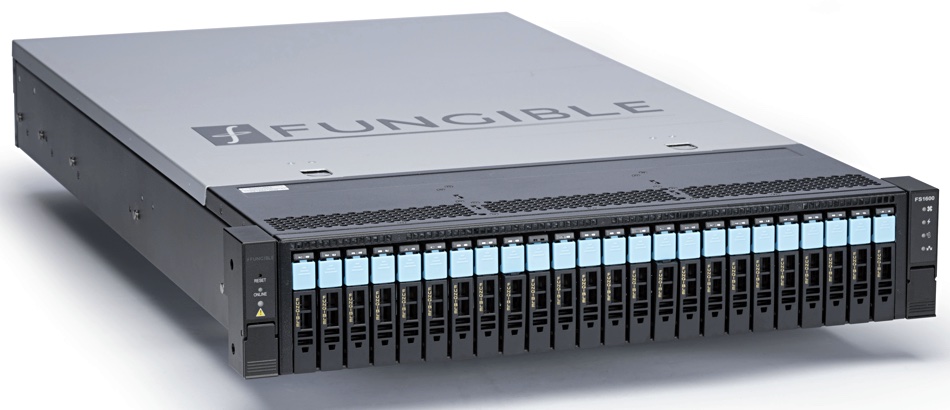

Fungible has developed DPU (Data Processing Unit) chips and uses them to power its FS1600 storage node. This is a scale-out block storage server in a 2U, 24-slot NVMe SSD box with two F1 DPU controllers, accessed over an NVMe-over-Fabrics TCP link. In the Dutch test it was hooked up to a 64-core AMD-powered server across a Juniper Networks setup.

Pradeep Sindhu, CEO and co-founder of Fungible, said in a prepared quote: “What we are achieving in the lab … can be deployed throughout the world. We believe customers, partners and research institutions can push innovation boundaries with the Fungible Storage Cluster to radically reduce processing time and advance experimental activities in accelerator-based particle physics and astro-particle physics. Ultimately, it is revolutionising the performance, economics, reliability and security of scale-out data centres.”

Fungible says its IOPS number is nearly twice that of its nearest competitor (which it declined to identify).

The test was run by two organisations: Nikhef, a partnership between the Institutes Organisation of the Dutch Research Council and six universities; and SURF, the collaborative ICT association of Dutch educational and research institutions. These two are focussed on exploring high-performance storage for physics and related HPC science.

Testing was carried out at Nikhef’s computing centre in Amsterdam. Nikhef is looking for fast and affordable data processing with a view to handling the multiplying data flows from experiments at CERN in Geneva when the intense high-luminosity LHC accelerator comes online there after 2026.

Tristan Suerink, IT Architect at Nikhef, said: “The Fungible FS1600 will be recognised as the start of a new era in storage, thanks to its unique DPU capabilities. We see a bright future for these devices and DPUs in general.”

Raj Yavatkar, CTO at Juniper Networks, said: “The results achieved are truly ground-breaking and will have far-reaching implications for data centres across the globe.”

Fungible claims that, as a result of this performance, data-centric workloads can be consolidated, increasing storage media utilisation and lowering cost per IOPS compared to existing software-defined storage solutions. It claims its technology scales linearly to 300 million IOPS in a single 40 RU rack, and can extend further to many racks. The FS1600 provides data services such as data durability, data reduction and data security in-line and at line rate, all selectable on a per-volume basis.
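As a rough sanity check on the rack-scale claim, the arithmetic can be sketched in a few lines of Python. This assumes the 40 RU rack is filled entirely with 2U FS1600 nodes, leaving no space for switches; the article does not state this, and the per-node figure below is derived, not quoted by Fungible.

```python
# Figures quoted in the article.
RACK_UNITS = 40                  # rack height in the scaling claim
NODE_HEIGHT_RU = 2               # the FS1600 is a 2U box
RACK_IOPS_CLAIM = 300_000_000    # claimed IOPS for a single rack
MEASURED_IOPS = 6_550_000        # random read IOPS to one server in the Dutch test


def implied_per_node_iops(rack_iops, rack_units, node_height_ru):
    """Return (node count, IOPS each node must deliver) for a fully populated rack.

    Assumption: every rack unit holds storage nodes and scaling is perfectly linear.
    """
    nodes = rack_units // node_height_ru
    return nodes, rack_iops / nodes


nodes, per_node = implied_per_node_iops(RACK_IOPS_CLAIM, RACK_UNITS, NODE_HEIGHT_RU)
print(f"{nodes} nodes, {per_node / 1e6:.1f} million IOPS per node")
print(f"Ratio to the single-server result: {per_node / MEASURED_IOPS:.2f}x")
```

Under these assumptions each node would need to deliver about 15 million IOPS, more than twice the 6.55 million measured to a single server, which suggests the rack figure refers to aggregate node throughput across many clients.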

Comment

The Fungible FS1600 is basically a proprietary external SAN array with, as demonstrated here, high read performance. How does it compare to competing products?

According to the Evaluator Group, Pure Storage’s FlashArray//X m70 model runs at 300,000 32K IOPS and 9GB/sec bandwidth. We don’t have a bandwidth number for the FS1600, but would guess it is substantially higher than 9GB/sec.

A single VAST Data enclosure provides 400,000 IOPS from its QLC flash drives shipping out file data.

Dell EMC’s high-end PowerMax systems are much faster than this, with the 4U, 2-brick PowerMax 2000 providing up to 2.7 million IOPS.

DDN’s SFA18K appliance is billed by DDN as the world’s fastest appliance, pumping out 3.2 million IOPS from its 4U box holding NVMe and SAS SSDs and HDDs.

But a 4U Pavilion Data HyperParallel array can deliver up to 20 million IOPS — which would cast doubt on Fungible’s claim that the FS1600 delivers the world’s highest performance between a single server reading data from a single storage target. Hopefully more details will be revealed about the Fungible test to provide a fuller picture.
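One way to put the quoted numbers on a common footing is IOPS per rack unit. The sketch below uses only the figures and enclosure heights given in this article; Pure Storage and VAST Data are omitted because no enclosure height is quoted for them. It is not a like-for-like benchmark: the vendors' figures may use different block sizes and read/write mixes, and the FS1600 number is for a single server.

```python
# (product, quoted IOPS, enclosure height in RU), as given in this article.
SYSTEMS = [
    ("Fungible FS1600", 6_550_000, 2),
    ("Dell EMC PowerMax 2000", 2_700_000, 4),
    ("DDN SFA18K", 3_200_000, 4),
    ("Pavilion HyperParallel", 20_000_000, 4),
]


def iops_per_ru(systems):
    """Return (name, IOPS per rack unit) pairs, densest first."""
    density = [(name, iops / height) for name, iops, height in systems]
    return sorted(density, key=lambda pair: pair[1], reverse=True)


ranking = iops_per_ru(SYSTEMS)
for name, per_ru in ranking:
    print(f"{name}: {per_ru / 1e6:.3f} million IOPS per RU")
```

On this crude measure the Pavilion array still leads at 5 million IOPS per RU, with the FS1600 second at roughly 3.3 million.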

We are also curious about how an FS1600 would perform using GPU-Direct and pumping data out to Nvidia GPU servers.