Las Vegas: NetApp today pumped out a big hardware release centred on all-flash object and SAN storage, with some hybrid disk array action too.

At NetApp Insight, the company also announced NetApp Keystone, a subscription billing service, along with a raft of software simplification and cloud storage announcements. We'll focus on the hardware in this story.

NetApp made three StorageGRID announcements today, along with three all-flash ONTAP arrays and two hybrid arrays.

NetApp’s hardware range is anchored on the ONTAP operating system arrays, either all-flash AFF systems or FAS hybrid disk/flash arrays. These are accompanied by E-Series arrays with a stripped-down software environment; StorageGRID object storage systems; SolidFire flash arrays; and Elements hyperconverged systems using SolidFire flash storage.

StorageGRID

Last month NetApp introduced the storage industry’s first all-flash classic object storage system for workloads needing high concurrent access to many small objects.

A manufacturing customer developed the prototype after finding that a disk-based StorageGRID array was too slow to handle sensor data from its production line equipment. In response to this use case, NetApp developed the SGF6024 in six months.

The StorageGRID range starts with the SG5712, which has an 8-core CPU and 144TB of raw capacity from its 12 x 12TB disk drives, and continues with the SG5760 (720TB, 60 drives, 8-core CPU) and the SG6060 (696TB, 58 disk drives, 2 caching SSDs, 40 CPU cores).
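The raw figures above are simply drive count multiplied by drive size. The short Python sketch below reproduces them. It assumes 12TB disks in all three models (stated only for the entry model, and inferred for the other two from the arithmetic) and leaves out the SG6060's two caching SSDs, since the quoted 696TB covers only its 58 disks. The model labels in the code are descriptive, not NetApp product codes.

```python
# Raw capacity = number of data drives x capacity per drive.
# Assumption: every model uses 12TB disk drives. The SG6060's two caching
# SSDs are excluded because the quoted 696TB counts only its 58 disks.
DRIVE_TB = 12

models = {
    "12-drive entry model": 12,
    "60-drive model": 60,
    "SG6060 (58 disks + 2 caching SSDs)": 58,
}

def raw_capacity_tb(drive_count: int, drive_tb: int = DRIVE_TB) -> int:
    """Return raw capacity in TB for a given number of data drives."""
    return drive_count * drive_tb

for name, drives in models.items():
    print(f"{name}: {raw_capacity_tb(drives)}TB raw")
```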

The new SGF6024 is built from two components: a 1U compute blade, and an EF570 storage shelf with 24 slots for 2.5-inch SSDs.

Every SSD the EF570 supports can be used, with capacities from 800GB up to 15.36TB. These are mainstream TLC SSDs, not the newer, denser QLC flash used by VAST Data and Pure Storage.

Raw capacity per chassis with 7.6TB drives is 182.4TB. The EF570 supports latencies below 300µs with up to 1,000,000 4K random read IOPS.
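Raw capacity per SGF6024 chassis scales linearly with the SSD chosen. This sketch assumes all 24 slots hold the same SSD size and uses decimal terabytes; the 19.2TB and 368.64TB results for the smallest and largest drives are our arithmetic, not NetApp-quoted figures.

```python
# SGF6024 raw capacity per chassis: 24 SSD slots x SSD size (decimal TB).
# Assumption: all slots are populated with identical SSDs.
SLOTS = 24

def chassis_raw_tb(ssd_tb: float, slots: int = SLOTS) -> float:
    """Raw TB for one fully populated chassis, rounded to hide float noise."""
    return round(slots * ssd_tb, 2)

# Smallest, quoted and largest SSD sizes mentioned for the EF570 shelf.
for ssd_tb in (0.8, 7.6, 15.36):
    print(f"{ssd_tb}TB SSDs -> {chassis_raw_tb(ssd_tb)}TB raw per chassis")
```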

In a briefing with Blocks & Files, Duncan Moore, the head of NetApp’s StorageGRID software group, said the SGF6024 has about 50 per cent lower latency than the SG6060. That is a moderate increase in data access speed – and there is room for more.

Moore said the StorageGRID software stack has been improved and there is scope for further gains as it is made more efficient. Such efficiency was not needed before, because the software had the luxury of operating on disk seek timescales.

Filers are an alternative for edge deployments such as handling sensor data on a manufacturing process line. Moore said filers are better suited to transactional workloads with lots of file updates. There is no such thing as an update in the object world; all objects are unique. If the workload is not transaction-based, object storage is a good choice.

He said a direct comparison between flash object storage and filer performance is not practical, apart from comparing latencies. This is because filers are rated in MB/sec while object systems are measured in objects/sec, or GETs or PUTs per second.
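To illustrate why, the sketch below converts an objects/sec rating into MB/sec. The request rate and object sizes are hypothetical, and the conversion only holds if every object is the same size. The same request rate maps to very different MB/sec figures, so neither metric means much in the other's terms without knowing the object size.

```python
# Object stores are rated in objects/sec (GETs or PUTs per second);
# filers are rated in MB/sec. The two only convert via an assumed
# average object size, which is why head-to-head comparison is awkward.

def mb_per_sec(objects_per_sec: float, avg_object_kb: float) -> float:
    """Equivalent throughput in MB/sec (decimal units: 1MB = 1,000KB)."""
    return objects_per_sec * avg_object_kb / 1000

# Hypothetical workload: the same request rate at two object sizes.
rate = 10_000  # GETs/sec, illustrative only
for size_kb in (4, 1000):
    print(f"{rate} GETs/sec at {size_kb}KB ~ {mb_per_sec(rate, size_kb):,.0f}MB/sec")
```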

AI workloads can feature concurrent access to small objects, and NetApp says the SGF6024 is suited to this use case. It can be added to existing StorageGRID systems as a high-performance island.

Clearly the SGF6024 is faster than disk-based object storage systems and competing object storage hardware suppliers will look to develop their own all-flash object storage.

NetApp today also introduced SG1000 compute nodes providing high-availability load balancing and improved grid administration by hosting the admin node for the namespace on a single set of nodes.

The existing SG6060 gets expansion nodes and offers up to 400PB in a single namespace. This makes it suited to high-density, high-capacity, large-object workloads.

Version 11.3 of the StorageGRID software is needed for the new hardware. It also adds the ability to tier objects off to Azure Blob Storage, supporting up to 10 Cloud Storage Pools per grid.

AFF ONTAP arrays get more active

NetApp’s ONTAP hardware range consists of all-flash FAS (AFF) arrays and hybrid flash/disk FAS arrays. The AFF systems run from the new entry-level C190 through the A220, A300 and A320 to the top-end A700.

NetApp today announced the A400, the C190 and an all-flash SAN array. The last seems an oddity at first: it is an ONTAP system with a SAN-only (block) personality, and all the file services have been made unavailable.

But it also has newly developed active:active controller technology with automated failover. Until now, NetApp ONTAP arrays have had active:passive controllers, with slower recovery from controller hardware failure. ONTAP 9.7 is required for this active:active capability.

The All SAN Array (ASA) uses A220 or A700 hardware, and the new A400 will be supported in the coming months. Initially the ASA cannot be clustered; it is limited to a single dual-controller system.

NetApp positions this system for mission-critical applications running on databases such as Oracle and SQL Server.

Octavian Tanese, head of NetApp’s ONTAP software and systems group, told Blocks & Files that NetApp aims to gain market share in SAN areas where customers don’t want to deal with file stuff.

A400

With a data acceleration capability that offloads storage efficiency processing, the A400 is a new departure in AFF arrays. NetApp has been tight-lipped about this feature but the implication is that the A400 uses a hardware assist for some or all of ONTAP’s compression, deduplication and compaction processes.

According to NetApp, the A400 incorporates end-to-end NVMe design and offers extremely low latency – no numbers supplied – at a mid-range price point for enterprise applications, data analytics, and artificial intelligence workloads.

The system has a dual-controller chassis and up to two NVMe-oF (100GbitE link) or SAS expansion shelves. The host connectors include 100GbitE and 32Gbit/s Fibre Channel with NVMe/FC supported.

There are up to 480 NVMe or SAS SSDs per HA controller pair. The NVMe SSD capacities are 1.7TB, 3.8TB, 7.6TB and 15.3TB, making the maximum raw capacity 7.3PB. The SAS SSDs range in capacity from 960GB to 30.6TB, doubling the maximum raw capacity to 14.7PB. NetApp provided no effective capacity figures at announcement time.
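The maximum raw figures come from filling all 480 slots with the largest drive of each type. A minimal Python check, using decimal units (1PB = 1,000TB) and the drive sizes above; the SAS result is our arithmetic for the doubling those figures imply, not a number NetApp supplied.

```python
# A400 maximum raw capacity per HA pair: 480 SSDs x largest SSD size.
HA_PAIR_MAX_SSDS = 480

def max_raw_pb(largest_ssd_tb: float, ssds: int = HA_PAIR_MAX_SSDS) -> float:
    """Raw capacity in PB (1PB = 1,000TB) with every slot holding the largest SSD."""
    return ssds * largest_ssd_tb / 1000

nvme_pb = max_raw_pb(15.3)  # largest NVMe SSD
sas_pb = max_raw_pb(30.6)   # largest SAS SSD
print(f"NVMe: {nvme_pb:.1f}PB raw, SAS: {sas_pb:.1f}PB raw")
```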

The existing A320 supports 576 SSDs and has a maximum effective capacity (after data reduction) of 35PB. Spread across 576 drives, that implies 60.8TB drives, which do not exist. However, applying a 4:1 data reduction ratio brings this down to roughly 15TB per drive, and 15TB-class drives are available.
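Working backwards from the A320's numbers shows where the 60.8TB and 15TB figures come from. This sketch divides effective capacity evenly across all 576 SSDs and applies the assumed 4:1 reduction ratio; both are simplifying assumptions, not a NetApp-published method.

```python
# Back out the per-drive size implied by the A320's 35PB effective capacity.
A320_SSDS = 576
A320_EFFECTIVE_PB = 35
ASSUMED_REDUCTION = 4  # 4:1 data reduction, an estimate, not a NetApp figure

per_drive_effective_tb = A320_EFFECTIVE_PB * 1000 / A320_SSDS
per_drive_raw_tb = per_drive_effective_tb / ASSUMED_REDUCTION

print(f"{per_drive_effective_tb:.1f}TB effective per drive")
print(f"{per_drive_raw_tb:.1f}TB raw per drive at 4:1")
```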

Applying a 4:1 data reduction ratio to the A400 gives it a maximum effective capacity of 29.4PB in NVMe guise and 58.8PB with SAS SSDs.

That 29.4PB to 58.8PB range straddles the A320’s 35PB. NetApp told us the A400 delivers up to 50 per cent more performance than its predecessor, but did not identify the predecessor. It could be the A320.
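The effective-capacity estimate and the straddling claim can be checked together. The sketch below assumes the same 4:1 data reduction ratio used above (an estimate, not a NetApp figure) and maximum-raw configurations of 480 SSDs at 15.3TB (NVMe) or 30.6TB (SAS).

```python
# Estimated A400 effective capacity vs the A320's quoted 35PB.
REDUCTION = 4  # assumed 4:1 data reduction ratio, not a NetApp figure
A320_EFFECTIVE_PB = 35

# Maximum raw capacity in PB: 480 SSDs x largest drive of each type.
raw_pb = {"NVMe": 480 * 15.3 / 1000, "SAS": 480 * 30.6 / 1000}
effective_pb = {kind: pb * REDUCTION for kind, pb in raw_pb.items()}

for kind, pb in effective_pb.items():
    print(f"A400 {kind}: ~{pb:.1f}PB effective")

# "Straddles" means the A320's 35PB sits between the two A400 estimates.
straddles = effective_pb["NVMe"] < A320_EFFECTIVE_PB < effective_pb["SAS"]
print("straddles A320:", straddles)
```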

AFF C190

The C190 came into being because NetApp saw room in the market for a less powerful system than the A220. The C190 is not expandable and has 24 x 990GB SAS SSDs in its 2U cabinet, giving 23.76TB of raw capacity. After deduplication and compression, effective capacity is 50TB. That’s roughly a 2:1 data reduction ratio, which differs from the 4:1 ratio we have assumed for the A400. We are asking NetApp to clarify this.
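The C190's implied reduction ratio follows directly from NetApp's raw and effective figures. This sketch uses the 990GB drive size and 50TB effective capacity given above, in decimal units; it gives about 2.1:1, roughly half the 4:1 ratio assumed for the A400.

```python
# Implied data reduction ratio = effective capacity / raw capacity.
C190_SSDS = 24
C190_SSD_GB = 990       # drive size as stated for the C190
C190_EFFECTIVE_TB = 50  # NetApp's effective figure after dedupe and compression

raw_tb = C190_SSDS * C190_SSD_GB / 1000
ratio = C190_EFFECTIVE_TB / raw_tb
print(f"raw {raw_tb:.2f}TB, reduction ratio ~{ratio:.1f}:1")
```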

Two FAS additions

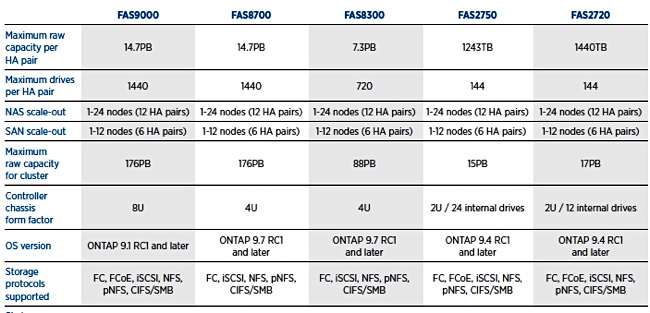

The FAS line starts with the FAS2720 and runs through the FAS2750 and FAS8200 up to the FAS9000. There are two new models: the FAS8300 and the FAS8700.

NetApp has a table positioning these systems.

These two systems replace the FAS8200, with the FAS8700 having twice the capacity of the FAS8300.

NetApp has not detailed any availability or pricing information.